Downloading a Hugging Face pretrained model fails with ProxyError

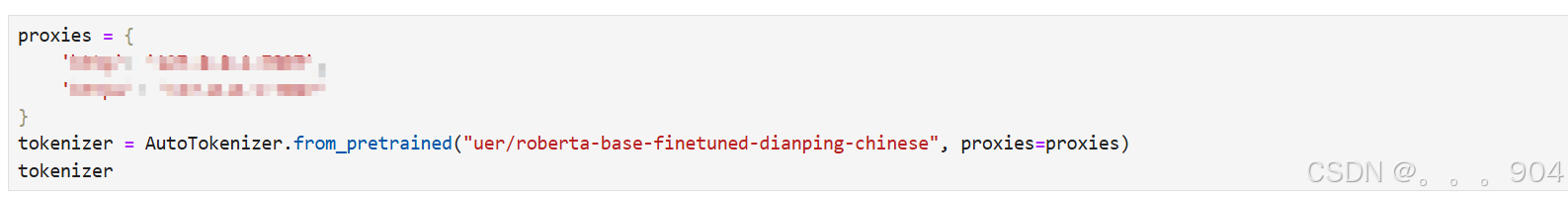

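I tried many of the fixes suggested online, but none of them seemed to work. For now my only option is to pass `proxies` manually on every call.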
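For reference, the per-call workaround could look roughly like the sketch below. `proxies` is a keyword argument accepted by `from_pretrained`; the helper names `build_proxies` and `load_tokenizer` and the address `127.0.0.1:7890` are assumptions made for illustration and need to be replaced with your own proxy settings.

```python
def build_proxies(host="127.0.0.1", port=7890, scheme="http"):
    """Build a requests-style proxies dict (host and port are placeholders)."""
    url = f"{scheme}://{host}:{port}"
    # Both entries point at the same proxy URL.
    return {"http": url, "https": url}


def load_tokenizer(model_id, proxies):
    """Load a tokenizer, routing the download through the given proxies."""
    # Imported lazily so the helper above can be used without transformers.
    from transformers import AutoTokenizer

    return AutoTokenizer.from_pretrained(model_id, proxies=proxies)


if __name__ == "__main__":
    tokenizer = load_tokenizer(
        "uer/roberta-base-finetuned-dianping-chinese",
        build_proxies(),
    )
    print(tokenizer)
```

Both entries deliberately use an `http://` proxy URL. Many local proxy tools only speak plain HTTP on their listening port, and pointing the `https` entry at an `https://` proxy URL is one commonly reported cause of "Unable to connect to proxy" with an SSL EOF. Whether that is the cause here is an assumption.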


Error details:

    ---------------------------------------------------------------------------
    SSLError                                  Traceback (most recent call last)
    SSLError: TLS/SSL connection has been closed (EOF) (_ssl.c:1149)

    The above exception was the direct cause of the following exception:

    ProxyError                                Traceback (most recent call last)
    ProxyError: ('Unable to connect to proxy', SSLError(SSLZeroReturnError(6, 'TLS/SSL connection has been closed (EOF) (_ssl.c:1149)')))

    The above exception was the direct cause of the following exception:

    MaxRetryError                             Traceback (most recent call last)
    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\requests\adapters.py:667, in HTTPAdapter.send(self, request, stream, timeout, verify, cert, proxies)
        666 try:
    --> 667     resp = conn.urlopen(
        668         method=request.method,
        669         url=url,
        670         body=request.body,
        671         headers=request.headers,
        672         redirect=False,
        673         assert_same_host=False,
        674         preload_content=False,
        675         decode_content=False,
        676         retries=self.max_retries,
        677         timeout=timeout,
        678         chunked=chunked,
        679     )
        681 except (ProtocolError, OSError) as err:

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\urllib3\connectionpool.py:843, in HTTPConnectionPool.urlopen(self, method, url, body, headers, retries, redirect, assert_same_host, timeout, pool_timeout, release_conn, chunked, body_pos, preload_content, decode_content, **response_kw)
        841     new_e = ProtocolError("Connection aborted.", new_e)
    --> 843 retries = retries.increment(
        844     method, url, error=new_e, _pool=self, _stacktrace=sys.exc_info()[2]
        845 )
        846 retries.sleep()

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\urllib3\util\retry.py:519, in Retry.increment(self, method, url, response, error, _pool, _stacktrace)
        518 reason = error or ResponseError(cause)
    --> 519 raise MaxRetryError(_pool, url, reason) from reason  # type: ignore[arg-type]
        521 log.debug("Incremented Retry for (url='%s'): %r", url, new_retry)

    MaxRetryError: HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /uer/roberta-base-finetuned-dianping-chinese/resolve/main/tokenizer_config.json (Caused by ProxyError('Unable to connect to proxy', SSLError(SSLZeroReturnError(6, 'TLS/SSL connection has been closed (EOF) (_ssl.c:1149)'))))

    During handling of the above exception, another exception occurred:

    ProxyError                                Traceback (most recent call last)
    Cell In[23], line 1
    ----> 1 tokenizer = AutoTokenizer.from_pretrained("uer/roberta-base-finetuned-dianping-chinese")
          2 tokenizer

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\transformers\models\auto\tokenization_auto.py:857, in AutoTokenizer.from_pretrained(cls, pretrained_model_name_or_path, *inputs, **kwargs)
        854     return tokenizer_class.from_pretrained(pretrained_model_name_or_path, *inputs, **kwargs)
        856 # Next, let's try to use the tokenizer_config file to get the tokenizer class.
    --> 857 tokenizer_config = get_tokenizer_config(pretrained_model_name_or_path, **kwargs)
        858 if "_commit_hash" in tokenizer_config:
        859     kwargs["_commit_hash"] = tokenizer_config["_commit_hash"]

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\transformers\models\auto\tokenization_auto.py:689, in get_tokenizer_config(pretrained_model_name_or_path, cache_dir, force_download, resume_download, proxies, token, revision, local_files_only, subfolder, **kwargs)
        686     token = use_auth_token
        688 commit_hash = kwargs.get("_commit_hash", None)
    --> 689 resolved_config_file = cached_file(
        690     pretrained_model_name_or_path,
        691     TOKENIZER_CONFIG_FILE,
        692     cache_dir=cache_dir,
        693     force_download=force_download,
        694     resume_download=resume_download,
        695     proxies=proxies,
        696     token=token,
        697     revision=revision,
        698     local_files_only=local_files_only,
        699     subfolder=subfolder,
        700     _raise_exceptions_for_gated_repo=False,
        701     _raise_exceptions_for_missing_entries=False,
        702     _raise_exceptions_for_connection_errors=False,
        703     _commit_hash=commit_hash,
        704 )
        705 if resolved_config_file is None:
        706     logger.info("Could not locate the tokenizer configuration file, will try to use the model config instead.")

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\transformers\utils\hub.py:403, in cached_file(path_or_repo_id, filename, cache_dir, force_download, resume_download, proxies, token, revision, local_files_only, subfolder, repo_type, user_agent, _raise_exceptions_for_gated_repo, _raise_exceptions_for_missing_entries, _raise_exceptions_for_connection_errors, _commit_hash, **deprecated_kwargs)
        400 user_agent = http_user_agent(user_agent)
        401 try:
        402     # Load from URL or cache if already cached
    --> 403     resolved_file = hf_hub_download(
        404         path_or_repo_id,
        405         filename,
        406         subfolder=None if len(subfolder) == 0 else subfolder,
        407         repo_type=repo_type,
        408         revision=revision,
        409         cache_dir=cache_dir,
        410         user_agent=user_agent,
        411         force_download=force_download,
        412         proxies=proxies,
        413         resume_download=resume_download,
        414         token=token,
        415         local_files_only=local_files_only,
        416     )
        417 except GatedRepoError as e:
        418     resolved_file = _get_cache_file_to_return(path_or_repo_id, full_filename, cache_dir, revision)

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\utils\_validators.py:114, in validate_hf_hub_args.<locals>._inner_fn(*args, **kwargs)
        111 if check_use_auth_token:
        112     kwargs = smoothly_deprecate_use_auth_token(fn_name=fn.__name__, has_token=has_token, kwargs=kwargs)
    --> 114 return fn(*args, **kwargs)

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\file_download.py:860, in hf_hub_download(repo_id, filename, subfolder, repo_type, revision, library_name, library_version, cache_dir, local_dir, user_agent, force_download, proxies, etag_timeout, token, local_files_only, headers, endpoint, resume_download, force_filename, local_dir_use_symlinks)
        840     return _hf_hub_download_to_local_dir(
        841         # Destination
        842         local_dir=local_dir,
       (...)
        857         local_files_only=local_files_only,
        858     )
        859 else:
    --> 860     return _hf_hub_download_to_cache_dir(
        861         # Destination
        862         cache_dir=cache_dir,
        863         # File info
        864         repo_id=repo_id,
        865         filename=filename,
        866         repo_type=repo_type,
        867         revision=revision,
        868         # HTTP info
        869         endpoint=endpoint,
        870         etag_timeout=etag_timeout,
        871         headers=hf_headers,
        872         proxies=proxies,
        873         token=token,
        874         # Additional options
        875         local_files_only=local_files_only,
        876         force_download=force_download,
        877     )

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\file_download.py:923, in _hf_hub_download_to_cache_dir(cache_dir, repo_id, filename, repo_type, revision, endpoint, etag_timeout, headers, proxies, token, local_files_only, force_download)
        919     return pointer_path
        921 # Try to get metadata (etag, commit_hash, url, size) from the server.
        922 # If we can't, a HEAD request error is returned.
    --> 923 (url_to_download, etag, commit_hash, expected_size, head_call_error) = _get_metadata_or_catch_error(
        924     repo_id=repo_id,
        925     filename=filename,
        926     repo_type=repo_type,
        927     revision=revision,
        928     endpoint=endpoint,
        929     proxies=proxies,
        930     etag_timeout=etag_timeout,
        931     headers=headers,
        932     token=token,
        933     local_files_only=local_files_only,
        934     storage_folder=storage_folder,
        935     relative_filename=relative_filename,
        936 )
        938 # etag can be None for several reasons:
        939 # 1. we passed local_files_only.
        940 # 2. we don't have a connection
       (...)
        946 # If the specified revision is a commit hash, look inside "snapshots".
        947 # If the specified revision is a branch or tag, look inside "refs".
        948 if head_call_error is not None:
        949     # Couldn't make a HEAD call => let's try to find a local file

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\file_download.py:1374, in _get_metadata_or_catch_error(repo_id, filename, repo_type, revision, endpoint, proxies, etag_timeout, headers, token, local_files_only, relative_filename, storage_folder)
       1372 try:
       1373     try:
    -> 1374         metadata = get_hf_file_metadata(
       1375             url=url, proxies=proxies, timeout=etag_timeout, headers=headers, token=token
       1376         )
       1377     except EntryNotFoundError as http_error:
       1378         if storage_folder is not None and relative_filename is not None:
       1379             # Cache the non-existence of the file

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\utils\_validators.py:114, in validate_hf_hub_args.<locals>._inner_fn(*args, **kwargs)
        111 if check_use_auth_token:
        112     kwargs = smoothly_deprecate_use_auth_token(fn_name=fn.__name__, has_token=has_token, kwargs=kwargs)
    --> 114 return fn(*args, **kwargs)

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\file_download.py:1294, in get_hf_file_metadata(url, token, proxies, timeout, library_name, library_version, user_agent, headers)
       1291 hf_headers["Accept-Encoding"] = "identity"  # prevent any compression => we want to know the real size of the file
       1293 # Retrieve metadata
    -> 1294 r = _request_wrapper(
       1295     method="HEAD",
       1296     url=url,
       1297     headers=hf_headers,
       1298     allow_redirects=False,
       1299     follow_relative_redirects=True,
       1300     proxies=proxies,
       1301     timeout=timeout,
       1302 )
       1303 hf_raise_for_status(r)
       1305 # Return

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\file_download.py:278, in _request_wrapper(method, url, follow_relative_redirects, **params)
        276 # Recursively follow relative redirects
        277 if follow_relative_redirects:
    --> 278     response = _request_wrapper(
        279         method=method,
        280         url=url,
        281         follow_relative_redirects=False,
        282         **params,
        283     )
        285     # If redirection, we redirect only relative paths.
        286     # This is useful in case of a renamed repository.
        287     if 300 <= response.status_code <= 399:

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\file_download.py:301, in _request_wrapper(method, url, follow_relative_redirects, **params)
        298     return response
        300 # Perform request and return if status_code is not in the retry list.
    --> 301 response = get_session().request(method=method, url=url, **params)
        302 hf_raise_for_status(response)
        303 return response

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\requests\sessions.py:589, in Session.request(self, method, url, params, data, headers, cookies, files, auth, timeout, allow_redirects, proxies, hooks, stream, verify, cert, json)
        584 send_kwargs = {
        585     "timeout": timeout,
        586     "allow_redirects": allow_redirects,
        587 }
        588 send_kwargs.update(settings)
    --> 589 resp = self.send(prep, **send_kwargs)
        591 return resp

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\requests\sessions.py:703, in Session.send(self, request, **kwargs)
        700 start = preferred_clock()
        702 # Send the request
    --> 703 r = adapter.send(request, **kwargs)
        705 # Total elapsed time of the request (approximately)
        706 elapsed = preferred_clock() - start

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\huggingface_hub\utils\_http.py:93, in UniqueRequestIdAdapter.send(self, request, *args, **kwargs)
         91 """Catch any RequestException to append request id to the error message for debugging."""
         92 try:
    ---> 93     return super().send(request, *args, **kwargs)
         94 except requests.RequestException as e:
         95     request_id = request.headers.get(X_AMZN_TRACE_ID)

    File C:\Tools\Anaconda\envs\transformers\lib\site-packages\requests\adapters.py:694, in HTTPAdapter.send(self, request, stream, timeout, verify, cert, proxies)
        691     raise RetryError(e, request=request)
        693 if isinstance(e.reason, _ProxyError):
    --> 694     raise ProxyError(e, request=request)
        696 if isinstance(e.reason, _SSLError):
        697     # This branch is for urllib3 v1.22 and later.
        698     raise SSLError(e, request=request)

    ProxyError: (MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /uer/roberta-base-finetuned-dianping-chinese/resolve/main/tokenizer_config.json (Caused by ProxyError('Unable to connect to proxy', SSLError(SSLZeroReturnError(6, 'TLS/SSL connection has been closed (EOF) (_ssl.c:1149)'))))"), '(Request ID: 47340243-c81f-4880-a3fd-cc0f18ef79fc)')

Does anyone know a way to permanently set the proxy server that Hugging Face downloads go through?
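One approach that might avoid passing `proxies` on every call is to set the standard proxy environment variables, which `requests` reads by default. The following is only a minimal sketch: the helper `set_process_proxy` is hypothetical, the address `127.0.0.1:7890` is a placeholder, and it has not been verified against the setup described in this post.

```python
import os


def set_process_proxy(proxy_url, env=None):
    """Point HTTP_PROXY and HTTPS_PROXY at proxy_url.

    By default this modifies os.environ, so it only affects the current
    process and any child processes started afterwards.
    """
    env = os.environ if env is None else env
    for key in ("HTTP_PROXY", "HTTPS_PROXY"):
        env[key] = proxy_url
    return env


if __name__ == "__main__":
    # Placeholder address; run this before the first download is attempted.
    set_process_proxy("http://127.0.0.1:7890")
    print(os.environ["HTTPS_PROXY"])
```

To make the setting persist across sessions, the same two variables can be defined at the operating-system level (for example in the Windows environment variable settings), so that every new Python process inherits them.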

