Qwen3.5-9B 官方文档总结

Qwen3.5-9B模型技术摘要 Qwen3.5-9B是一个9B参数的因果语言模型,具备视觉编码器,支持多模态输入。模型架构采用32层Transformer,包含8组3层门控DeltaNet+FFN和1层门控注意力+FFN的混合结构。DeltaNet使用32/16头线性注意力,门控注意力采用16/4头配置,并应用64维旋转位置嵌入。模型原生支持262,144令牌上下文,可扩展至1,010,000令

模型概述

- Type: Causal Language Model with Vision Encoder

- Training Stage: Pre-training & Post-training

- Language Model

- Number of Parameters: 9B

- Hidden Dimension: 4096

- Token Embedding: 248320 (Padded)

- Number of Layers: 32

- Hidden Layout: 8 × (3 × (Gated DeltaNet → FFN) → 1 × (Gated Attention → FFN))

- Gated DeltaNet:

- Number of Linear Attention Heads: 32 for V and 16 for QK

- Head Dimension: 128

- Gated Attention:

- Number of Attention Heads: 16 for Q and 4 for KV

- Head Dimension: 256

- Rotary Position Embedding Dimension: 64

- Feed Forward Network:

- Intermediate Dimension: 12288

- LM Output: 248320 (Padded)

- MTP: trained with multi-steps

- Context Length: 262,144 natively and extensible up to 1,010,000 tokens.

vLLM

通过以下命令可以在本地创建 API 端点http://localhost:8000/v1:

-

标准版:可以使用以下命令在 8 个 GPU 上使用张量并行创建最大上下文长度为 262,144 个标记的 API 端点。

vllm serve Qwen/Qwen3.5-9B --port 8000 --tensor-parallel-size 1 --max-model-len 262144 --reasoning-parser qwen3 -

工具调用:为了支持工具的使用,您可以使用以下命令。

vllm serve Qwen/Qwen3.5-9B --port 8000 --tensor-parallel-size 1 --max-model-len 262144 --reasoning-parser qwen3 --enable-auto-tool-choice --tool-call-parser qwen3_coder -

多标记预测 (MTP):建议使用以下命令进行 MTP:

vllm serve Qwen/Qwen3.5-9B --port 8000 --tensor-parallel-size 1 --max-model-len 262144 --reasoning-parser qwen3 --speculative-config '{"method":"qwen3_next_mtp","num_speculative_tokens":2}' -

仅文本:以下命令跳过视觉编码器和多模态分析,以释放内存用于额外的 KV 缓存:

vllm serve Qwen/Qwen3.5-9B --port 8000 --tensor-parallel-size 1 --max-model-len 262144 --reasoning-parser qwen3 --language-model-only

Using Qwen3.5 via the Chat Completions API

Before starting, make sure it is installed and the API key and the API base URL is configured, e.g.:

pip install -U openai

Set the following accordingly

export OPENAI_BASE_URL="http://localhost:8000/v1"

export OPENAI_API_KEY="EMPTY"

采样参数配置表

| 模式 (Mode) | 任务类型 | temperature | top_p | top_k | min_p | presence_penalty | repetition_penalty |

|---|---|---|---|---|---|---|---|

| 思维模式 (Thinking) | 常规任务 | 1.0 | 0.95 | 20 | 0.0 | 1.5 | 1.0 |

| 思维模式 (Thinking) | 精确编码任务 (如 WebDev) | 0.6 | 0.95 | 20 | 0.0 | 0.0 | 1.0 |

| 指令模式 (Instruct) | 常规任务 | 0.7 | 0.8 | 20 | 0.0 | 1.5 | 1.0 |

| 指令模式 (Instruct) | 推理任务 | 1.0 | 1.0 | 40 | 0.0 | 2.0 | 1.0 |

注意:

对于支持的框架,您可以将presence_penalty参数调整在 0 到 2 之间,以减少无尽的重复。然而,使用较高的值偶尔会导致语言混合和模型性能略有下降。

Text-Only Input

from openai import OpenAI

# Configured by environment variables

client = OpenAI()

messages = [

{"role": "user", "content": "Type \"I love Qwen3.5\" backwards"},

]

chat_response = client.chat.completions.create(

model="Qwen/Qwen3.5-9B",

messages=messages,

max_tokens=81920,

temperature=1.0,

top_p=0.95,

presence_penalty=1.5,

extra_body={

"top_k": 20,

},

)

print("Chat response:", chat_response)

Image Input

from openai import OpenAI

# Configured by environment variables

client = OpenAI()

messages = [

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.5/demo/CI_Demo/mathv-1327.jpg"

}

},

{

"type": "text",

"text": "The centres of the four illustrated circles are in the corners of the square. The two big circles touch each other and also the two little circles. With which factor do you have to multiply the radii of the little circles to obtain the radius of the big circles?\nChoices:\n(A) $\\frac{2}{9}$\n(B) $\\sqrt{5}$\n(C) $0.8 \\cdot \\pi$\n(D) 2.5\n(E) $1+\\sqrt{2}$"

}

]

}

]

chat_response = client.chat.completions.create(

model="Qwen/Qwen3.5-9B",

messages=messages,

max_tokens=81920,

temperature=1.0,

top_p=0.95,

presence_penalty=1.5,

extra_body={

"top_k": 20,

},

)

print("Chat response:", chat_response)

Video Input

from openai import OpenAI

# Configured by environment variables

client = OpenAI()

messages = [

{

"role": "user",

"content": [

{

"type": "video_url",

"video_url": {

"url": "https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.5/demo/video/N1cdUjctpG8.mp4"

}

},

{

"type": "text",

"text": "Summarize the video content."

}

]

}

]

# When vLLM is launched with `--media-io-kwargs '{"video": {"num_frames": -1}}'`,

# video frame sampling can be configured via `extra_body` (e.g., by setting `fps`).

# This feature is currently supported only in vLLM.

#

# By default, `fps=2` and `do_sample_frames=True`.

# With `do_sample_frames=True`, you can customize the `fps` value to set your desired video sampling rate.

chat_response = client.chat.completions.create(

model="Qwen/Qwen3.5-9B",

messages=messages,

max_tokens=81920,

temperature=1.0,

top_p=0.95,

presence_penalty=1.5,

extra_body={

"top_k": 20,

"mm_processor_kwargs": {"fps": 2, "do_sample_frames": True},

},

)

print("Chat response:", chat_response)

Instruct (or Non-Thinking) Mode

Qwen3.5 will think by default before response. You can obtain direct response from the model without thinking by configuring the API parameters.

from openai import OpenAI

# Configured by environment variables

client = OpenAI()

messages = [

{

"role": "user",

"content": [

{

"type": "image_url",

"image_url": {

"url": "https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.5/demo/RealWorld/RealWorld-04.png"

}

},

{

"type": "text",

"text": "Where is this?"

}

]

}

]

chat_response = client.chat.completions.create(

model="Qwen/Qwen3.5-9B",

messages=messages,

max_tokens=32768,

temperature=0.7,

top_p=0.8,

presence_penalty=1.5,

extra_body={

"top_k": 20,

"chat_template_kwargs": {"enable_thinking": False},

},

)

print("Chat response:", chat_response)

Qwen3.5 excels in tool calling capabilities

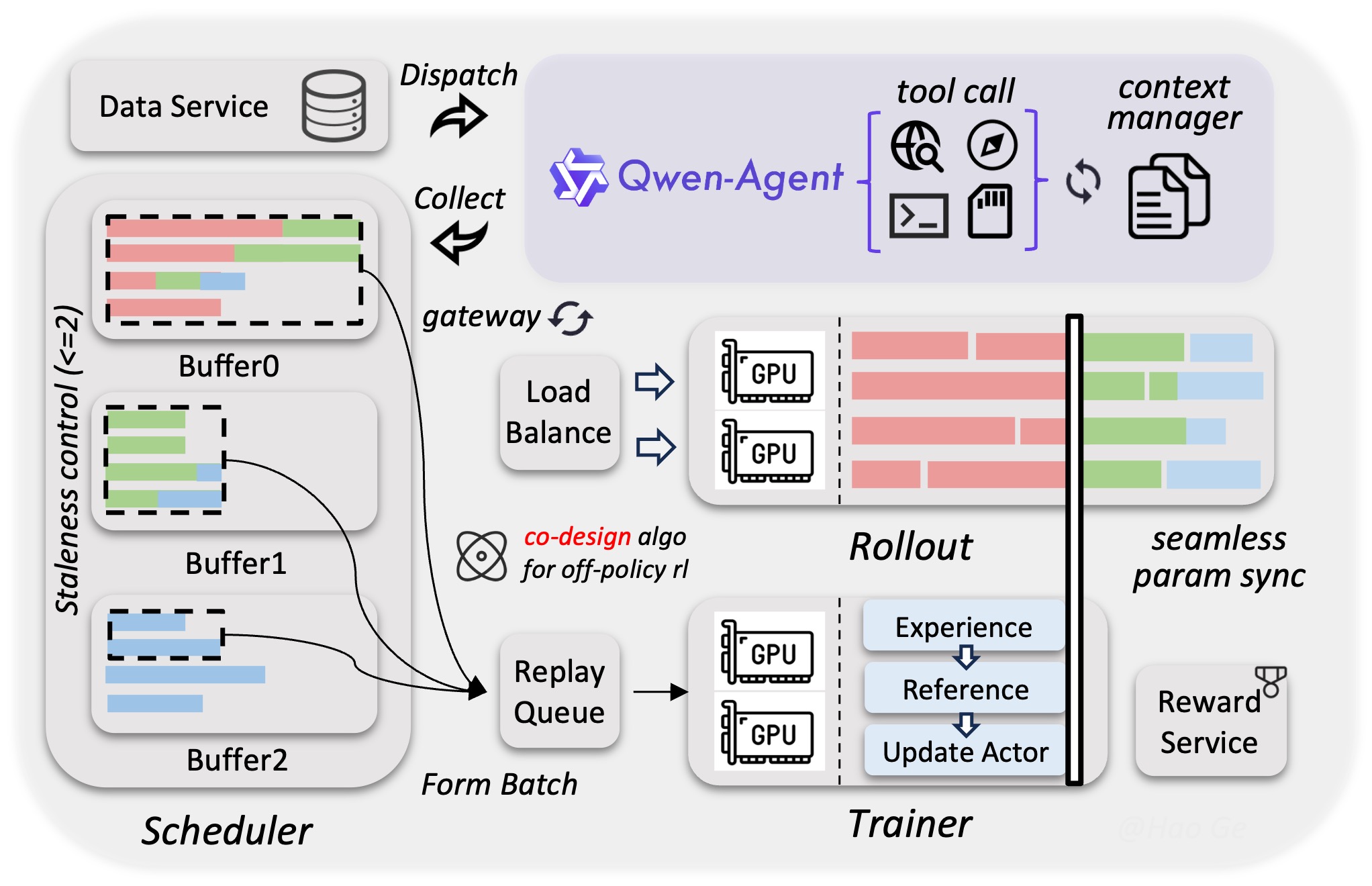

Qwen-Agent

We recommend using Qwen-Agent to quickly build Agent applications with Qwen3.5.

To define the available tools, you can use the MCP configuration file, use the integrated tool of Qwen-Agent, or integrate other tools by yourself.

import os

from qwen_agent.agents import Assistant

# Define LLM

# Using Alibaba Cloud Model Studio

llm_cfg = {

# Use the OpenAI-compatible model service provided by DashScope:

'model': 'Qwen3.5-9B',

'model_type': 'qwenvl_oai',

'model_server': 'https://dashscope.aliyuncs.com/compatible-mode/v1',

'api_key': os.getenv('DASHSCOPE_API_KEY'),

'generate_cfg': {

'use_raw_api': True,

# When using Dash Scope OAI API, pass the parameter of whether to enable thinking mode in this way

'extra_body': {

'enable_thinking': True

},

},

}

# Using OpenAI-compatible API endpoint.

# functionality of the deployment frameworks and let Qwen-Agent automate the related operations.

#

# llm_cfg = {

# # Use your own model service compatible with OpenAI API by vLLM/SGLang:

# 'model': 'Qwen/Qwen3.5-9B',

# 'model_type': 'qwenvl_oai',

# 'model_server': 'http://localhost:8000/v1', # api_base

# 'api_key': 'EMPTY',

#

# 'generate_cfg': {

# 'use_raw_api': True,

# # When using vLLM/SGLang OAI API, pass the parameter of whether to enable thinking mode in this way

# 'extra_body': {

# 'chat_template_kwargs': {'enable_thinking': True}

# },

# },

# }

# Define Tools

tools = [

{'mcpServers': { # You can specify the MCP configuration file

"filesystem": {

"command": "npx",

"args": ["-y", "@modelcontextprotocol/server-filesystem", "/Users/xxxx/Desktop"]

}

}

}

]

# Define Agent

bot = Assistant(llm=llm_cfg, function_list=tools)

# Streaming generation

messages = [{'role': 'user', 'content': 'Help me organize my desktop.'}]

for responses in bot.run(messages=messages):

pass

print(responses)

# Streaming generation

messages = [{'role': 'user', 'content': 'Develop a dog website and save it on the desktop'}]

for responses in bot.run(messages=messages):

pass

print(responses)

Processing Ultra-Long Texts

Qwen3.5 natively supports context lengths of up to 262,144 tokens. For long-horizon tasks where the total length (including both input and output) exceeds this limit, we recommend using RoPE scaling techniques to handle long texts effectively., e.g., YaRN.

YaRN is currently supported by several inference frameworks, e.g., transformers, vllm, ktransformers and sglang. In general, there are two approaches to enabling YaRN for supported frameworks:

-

Modifying the model configuration file: In the

config.jsonfile, change therope_parametersfields intext_configto:{ "mrope_interleaved": true, "mrope_section": [ 11, 11, 10 ], "rope_type": "yarn", "rope_theta": 10000000, "partial_rotary_factor": 0.25, "factor": 4.0, "original_max_position_embeddings": 262144, } -

Passing command line arguments:

use in vLLM:

VLLM_ALLOW_LONG_MAX_MODEL_LEN=1 vllm serve ... --hf-overrides '{"text_config": {"rope_parameters": {"mrope_interleaved": true, "mrope_section": [11, 11, 10], "rope_type": "yarn", "rope_theta": 10000000, "partial_rotary_factor": 0.25, "factor": 4.0, "original_max_position_embeddings": 262144}}}' --max-model-len 1010000

更多推荐

已为社区贡献1条内容

已为社区贡献1条内容

所有评论(0)