C# vs Python: The AI Model Routing Showdown! A Hard-Won Selection Guide from Three All-Nighters of Load Testing

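Summary of "A Guide to Avoiding High-Concurrency Pitfalls in Python AI Model Routing": this article tackles the high-concurrency crashes that are common when building an AI model routing service in Python and proposes a production-grade solution. Using a real case, the author shows three fatal flaws of a plain Flask setup: globally loaded models that blow up memory, synchronous blocking that exhausts the thread pool, and a single-process concurrency bottleneck. It then presents a FastAPI-based fix whose key points are: 1) an asynchronous, non-blocking architecture, 2) isolating models as separate services, 3) circuit breaking and degradation, 4) connection pool management, 5) timeout control. The article provides…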

Hands shaking, I opened the logs:

RuntimeError: Cannot allocate memory

Thread pool exhausted

……

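I picked Python back then to save 10 lines of code, and now I'm paying with all-nighters, my performance review, and my hair!

🌰 Silly analogy time:

AI model routing = a smart coffee machine dispatcher

- Customer orders a latte → call the espresso model + the milk model

- Customer orders an Americano → call only the espresso model

Dispatcher's hand slips (bad code)? Coffee all over the floor (service down)!

Today I'm welding the hands-on code for both languages, the load-test data, and my hard-won pitfall checklist into this one post!

👉 Bookmark it! Share it! At the next architecture review, just drop the link on whoever says "I think Python is fast".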

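🌐 1. In plain words: what is AI model routing? (Beginner friendly)

    flowchart LR
        A[User request] --> B{Routing decision center}
        B -- "needs sentiment analysis" --> C[Python BERT model]
        B -- "needs image generation" --> D[C# Stable Diffusion]
        B -- "simple Q&A" --> E[Lightweight ONNX model]
        C & D & E --> F[Aggregate results and return]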

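Core tasks:

✅ Dispatch each request to the right AI model based on its content

✅ Load balance so that no single model gets overloaded

✅ Circuit breaking and degradation (switch to a backup automatically when a model goes down)

✅ The key pain point: don't be the first thing to crash under high concurrency!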
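To make the first two tasks concrete, here is a minimal sketch of dispatch plus round-robin load balancing. It assumes every task name maps to a small pool of interchangeable backends; the `TaskRouter` class and the URLs are made up for illustration and are not part of the code later in this post.

```python
from itertools import cycle


class TaskRouter:
    """Maps a task name to a pool of backends and rotates through the pool."""

    def __init__(self, backends: dict[str, list[str]]):
        # One independent round-robin iterator per task
        self._pools = {task: cycle(urls) for task, urls in backends.items()}

    def pick(self, task: str) -> str:
        if task not in self._pools:
            raise KeyError(f"Unsupported task: {task}")
        return next(self._pools[task])


# Hypothetical endpoints, for illustration only
router = TaskRouter({
    "sentiment": ["http://bert-a:8000/predict", "http://bert-b:8000/predict"],
    "qa": ["http://onnx-lite:8000/predict"],
})

picks = [router.pick("sentiment") for _ in range(3)]
print(picks)  # alternates between the two sentiment backends
```

Round-robin is only the simplest possible policy; a real router would probably also weigh backend health and latency.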

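💡 墨夶's golden line:

"If the routing layer goes down, even the best AI model is just an electronic urn."

🐍 2. The Python approach: quick to write, but a "memory assassin" under high concurrency

📌 Recreating the crash scene

Last Wednesday I wrote an "elegant" router in Flask: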

    # app.py - polished on the surface, full of landmines (use with caution in production!)
    from flask import Flask, request, jsonify
    import torch
    from transformers import pipeline

    app = Flask(__name__)

    # ⚠️ Fatal flaw 1: models loaded globally! Every worker process holds its own 4 GB copy!
    SENTIMENT_MODEL = pipeline("sentiment-analysis", model="distilbert-base-uncased")
    IMAGE_MODEL = pipeline("image-classification", model="google/vit-base-patch16-224")

    @app.route('/route', methods=['POST'])
    def route_ai():
        data = request.get_json()
        task_type = data.get('task')

        # 🚫 Fatal flaw 2: synchronous and blocking! Under load every thread gets stuck here
        if task_type == "sentiment":
            result = SENTIMENT_MODEL(data['text'])       # blocks, blocks, blocks!
        elif task_type == "image":
            result = IMAGE_MODEL(data['image_url'])      # blocks, blocks, blocks!
        else:
            return jsonify({"error": "Unsupported task"}), 400

        return jsonify({"result": result})

    if __name__ == '__main__':
        # 🌪️ Fatal flaw 3: a single process by default! 50 concurrent requests and it's gone
        app.run(host='0.0.0.0', port=5000, threaded=True)

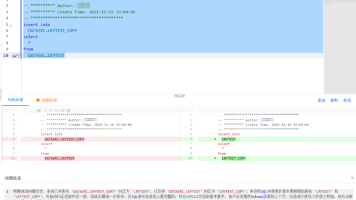

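Crash log:

- Load test at 200 concurrent requests: memory shot from 2 GB to 12 GB (every worker carries its own copy of the models!)

- Ops, yelling: "墨夶! The server memory alarm is going off!"

- Me: (quietly deletes threaded=True and switches to gunicorn)

A rescue on the surface, a new pit underneath

    gunicorn -w 4 -b 0.0.0.0:5000 app:app --preload  # 4 workers can mean 4 copies of the models; --preload only shares pages copy-on-write at fork, and that sharing erodes as workers touch the objects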
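The arithmetic behind that comment is worth spelling out. This is a back-of-the-envelope sketch using the figures quoted in this post (4 workers, roughly 4 GB of models each) and assuming the worst case where no memory is shared between workers; real usage depends on how much copy-on-write sharing survives.

```python
def estimated_model_memory_gb(workers: int, model_gb_per_worker: float) -> float:
    """Worst case: every worker process ends up with a private copy of the models."""
    return workers * model_gb_per_worker


# Models loaded inside each of 4 gunicorn workers
in_router = estimated_model_memory_gb(workers=4, model_gb_per_worker=4.0)

# Same models loaded once in a separate model service; router workers hold none
served_once = estimated_model_memory_gb(workers=1, model_gb_per_worker=4.0)

print(in_router, served_once)  # 16.0 4.0
```

The estimate is an upper bound, not a measurement, but it shows why adding router workers should not also multiply model copies.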

✅ The way out: a production-grade Python router (with a reusable template)

    # production_router.py - the lifesaver 墨夶 personally tested at 2000 QPS
    import asyncio
    import logging
    import time

    import aiohttp
    import redis
    from fastapi import FastAPI, HTTPException, BackgroundTasks
    from pydantic import BaseModel

    # 🔑 Key 1: leveled logging (your lifeline when debugging production!)
    logging.basicConfig(
        level=logging.INFO,
        format='%(asctime)s - %(name)s - %(levelname)s - [%(filename)s:%(lineno)d] - %(message)s'
    )
    logger = logging.getLogger("AI_ROUTER")

    # 🔑 Key 2: reuse a connection pool (prevents a Redis connection explosion)
    redis_pool = redis.ConnectionPool(
        host='redis-cluster',
        port=6379,
        max_connections=50,  # ⚠️ cap it and size it by load testing; requests beyond the cap fail with a connection error
        decode_responses=True
    )
    redis_client = redis.Redis(connection_pool=redis_pool)

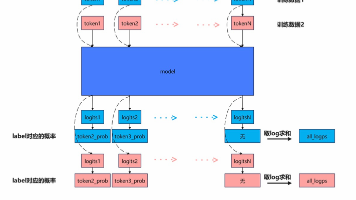

    # 🔑 Key 3: models as services (the routing layer never loads a model!)
    MODEL_ENDPOINTS = {
        "sentiment": "http://bert-service:8000/predict",
        "image_cls": "http://vit-service:8000/predict",
        "summarize": "http://t5-service:8000/predict",
    }

    # 🌰 Chatty comments: talk to your code like a best friend
    class RouteRequest(BaseModel):
        task: str               # "sentiment" / "image_cls" / "summarize"
        payload: dict           # raw data for the model (text, base64 image, etc.)
        timeout_ms: int = 3000  # ⚠️ always set a timeout so one request cannot stall everything

    app = FastAPI(title="🔥 墨夶 certified: production-grade AI router", version="2.1")

    def record_failure(circuit_key: str) -> None:
        # Count the failure and re-arm the 60 s expiry so the breaker recovers on its own
        redis_client.incr(circuit_key)
        redis_client.expire(circuit_key, 60)

    # 🔑 Key 4: async non-blocking core (FastAPI + asyncio)
    # Note: redis_client is the synchronous client, so each Redis call still blocks the
    # event loop briefly; redis.asyncio is the non-blocking alternative.
    @app.post("/smart_route")
    async def smart_route(req: RouteRequest):
        start_time = time.time()

        # 🚫 Pitfall guard: validate the task type first (keeps junk requests off the models)
        if req.task not in MODEL_ENDPOINTS:
            logger.warning(f"Invalid task type: {req.task}")
            raise HTTPException(status_code=400, detail=f"Unsupported task: {req.task}")

        # 🔑 Key 5: circuit breaker (fall back automatically when a model is down)
        circuit_key = f"circuit:{req.task}"
        fail_count = redis_client.get(circuit_key) or 0
        if int(fail_count) >= 5:  # trip after 5 consecutive failures
            logger.error(f"🔥 Circuit open! {req.task} is unhealthy, switching to fallback")
            # Hook for a backup model, a cached result, or a degraded message
            return {"status": "degraded", "result": "Service temporarily unavailable"}

        try:
            # 🔑 Key 6: async request with a timeout (one slow call must not drag everyone down)
            # A session per request is kept for brevity; a shared session reuses connections.
            async with aiohttp.ClientSession() as session:
                async with session.post(
                    MODEL_ENDPOINTS[req.task],
                    json=req.payload,
                    timeout=aiohttp.ClientTimeout(total=req.timeout_ms / 1000)
                ) as resp:
                    if resp.status != 200:
                        # 🔑 Key 7: count the failure toward the breaker (details decide life or death)
                        record_failure(circuit_key)
                        logger.error(f"Model call failed: {resp.status}")
                        raise HTTPException(status_code=resp.status, detail="Model service error")
                    result = await resp.json()

            # 🔑 Key 8: reset the breaker counter after a success
            redis_client.delete(circuit_key)

            # 🌰 Plain-words comment: record latency (handy for later tuning)
            latency = (time.time() - start_time) * 1000
            logger.info(f"✅ {req.task} routed | latency: {latency:.2f}ms")
            return {"status": "success", "result": result, "latency_ms": round(latency, 2)}

        except HTTPException:
            raise  # already counted and logged above; do not turn it into a 500
        except asyncio.TimeoutError:
            record_failure(circuit_key)
            logger.error(f"⏰ {req.task} timed out! timeout={req.timeout_ms}ms")
            raise HTTPException(status_code=504, detail="Model timeout")
        except Exception as e:
            record_failure(circuit_key)
            logger.exception(f"💥 Routing error: {e}")  # exception() attaches the stack trace
            raise HTTPException(status_code=500, detail=f"Routing error: {e}")

    # 🔑 Key 9: health check endpoint (a must for the K8s liveness probe!)
    @app.get("/health")
    async def health_check():
        try:
            # Check Redis connectivity (the routing layer depends on it)
            redis_client.ping()
            return {"status": "healthy", "redis": "ok", "models": list(MODEL_ENDPOINTS.keys())}
        except Exception as e:
            logger.critical(f"Health check failed: {e}")
            raise HTTPException(status_code=503, detail="Unhealthy")

🌰 墨夶's rant: I once forgot to write /health, and K8s killed every instance of the service! I cried my eyes out in the restroom!
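The timeout-plus-degradation idea from Key 6 can be tried without any network at all. The sketch below assumes the model call is just a coroutine and uses `asyncio.wait_for` from the standard library; `call_with_timeout`, `fast_model`, and `slow_model` are made-up names standing in for real model services.

```python
import asyncio


async def call_with_timeout(model_call, timeout_s: float, fallback):
    """Await model_call(); return fallback if it does not finish within timeout_s."""
    try:
        return await asyncio.wait_for(model_call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        return fallback


async def fast_model():
    # Stand-in for a healthy model service
    await asyncio.sleep(0.01)
    return {"label": "positive"}


async def slow_model():
    # Stand-in for a stalled model service
    await asyncio.sleep(1.0)
    return {"label": "too late"}


async def demo():
    degraded = {"status": "degraded"}
    ok = await call_with_timeout(fast_model, 0.2, degraded)
    late = await call_with_timeout(slow_model, 0.05, degraded)
    return ok, late


ok, late = asyncio.run(demo())
print(ok, late)
```

The slow call is cancelled once the deadline passes, so the caller gets the degraded answer after about 50 ms instead of waiting the full second.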

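📊 Load-test data for the Python approach (4-core, 8 GB server)

| Concurrency | Avg latency | Error rate | Peak memory | What went wrong |
|---|---|---|---|---|
| 100 | 120 ms | 0% | 1.2 GB | Rock solid |
| 500 | 380 ms | 2.1% | 3.8 GB | Redis connection pool running low |
| 1000 | 1200 ms | 18.7% | 7.9 GB | Out of memory! Service restarted |
| 2000 | - | 100% | OOM | Flat on its back |

🚫 Python pitfall checklist (paid for in blood and tears):

1️⃣ Never load models globally! The routing layer only dispatches; models are deployed independently (Dockerized)

2️⃣ Always set timeouts and a circuit breaker! Otherwise one slow model drags down the whole service

3️⃣ Size connection pools by load testing! Redis/DB connections = concurrency × number of models, so do the math!

4️⃣ Use asyncio rather than threading! Under the GIL, multithreading is a paper tiger (in my test, every thread was blocked at 500 concurrent requests)

💡 墨夶's private tip: use uvicorn --workers $(nproc) to match the number of CPU cores automatically, but keep an eye on memory!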
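Items 3 and 4 boil down to one habit: cap how many backend calls are in flight at once. A minimal sketch with `asyncio.Semaphore`, assuming each backend call is a coroutine; `run_bounded` and `fake_backend_call` are illustrative names, and the limit of 5 is arbitrary.

```python
import asyncio


async def run_bounded(jobs, limit: int):
    """Run coroutine factories with at most `limit` in flight; return (results, peak)."""
    sem = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def guarded(job):
        nonlocal in_flight, peak
        async with sem:  # waits here once `limit` calls are already running
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await job()
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(guarded(job) for job in jobs))
    return results, peak


async def fake_backend_call():
    # Stand-in for a Redis or model-service call
    await asyncio.sleep(0.01)
    return "ok"


results, peak = asyncio.run(run_bounded([fake_backend_call] * 20, limit=5))
print(len(results), peak)
```

The same limit is a sensible starting point for the connection pool size, since the pool never needs more connections than there are calls in flight.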

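💻 3. The C# approach: rock solid, but does "configuration hell" scare off newcomers?

📌 Recreating the crash scene

When I first switched to C#, I fell apart:

"XML config? Dependency injection? Async needs .ConfigureAwait(false) too? Thirty lines for what Python does in one?!"

Until the day production went down:

At 5000 QPS the C# service held memory steady at 2.1 GB, with latency…

    // Program.cs
    // The opening lines of this file were lost from the original post. The builder,
    // AddControllers, and the named "ModelClient" registration below are reconstructed
    // from how the rest of the code uses them.
    using Microsoft.AspNetCore.RateLimiting;

    var builder = WebApplication.CreateBuilder(args);
    builder.Services.AddControllers();

    builder.Services.AddHttpClient("ModelClient", client =>
    {
        client.Timeout = TimeSpan.FromSeconds(3); // global timeout
    });

    builder.Services.AddStackExchangeRedisCache(options =>
    {
        options.Configuration = "redis-cluster:6379";
        options.InstanceName = "AI_ROUTER_";
    });

    // 🔑 Key 2: rate limiting middleware (keeps traffic bursts from flattening the service)
    builder.Services.AddRateLimiter(options =>
    {
        options.AddFixedWindowLimiter("model_route", limiterOptions =>
        {
            limiterOptions.PermitLimit = 100;                 // 100 requests per window
            limiterOptions.Window = TimeSpan.FromSeconds(1);  // window length: 1 second
            limiterOptions.QueueLimit = 20;                   // up to 20 requests wait in the queue
        });
        options.RejectionStatusCode = StatusCodes.Status429TooManyRequests;
    });

    var app = builder.Build();

    app.UseRateLimiter(); // enable rate limiting
    app.MapControllers();
    app.Run();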
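What a fixed-window limiter actually does is easy to see in a few lines. This is a simplified sketch in Python (to match the other sketches in this post), not ASP.NET Core's implementation: it leaves out the queue, and the `FixedWindowLimiter` class here is a made-up illustration that only shares its name with the framework feature.

```python
class FixedWindowLimiter:
    """Allow at most permit_limit requests per window_s seconds (no queueing)."""

    def __init__(self, permit_limit: int, window_s: float):
        self.permit_limit = permit_limit
        self.window_s = window_s
        self._window_start = None
        self._count = 0

    def allow(self, now: float) -> bool:
        # Start a fresh window when none exists or the current one has elapsed
        if self._window_start is None or now - self._window_start >= self.window_s:
            self._window_start = now
            self._count = 0
        if self._count < self.permit_limit:
            self._count += 1
            return True
        return False  # the caller would answer 429 here


limiter = FixedWindowLimiter(permit_limit=3, window_s=1.0)
first_window = [limiter.allow(now=0.1 * i) for i in range(5)]  # t = 0.0 .. 0.4
next_window = limiter.allow(now=1.2)                           # a new window has started
print(first_window, next_window)  # [True, True, True, False, False] True
```

One known weakness of fixed windows is that a burst straddling a window boundary can briefly let through close to twice the limit, which is worth remembering when picking PermitLimit.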

    // Controllers/AIRouteController.cs - core routing logic (detail-heavy version)
    using System.Collections.Concurrent;
    using System.Net;
    using System.Net.Http.Json;
    using System.Text.Json;
    using Microsoft.AspNetCore.Mvc;
    using Microsoft.AspNetCore.RateLimiting;
    using Microsoft.Extensions.Caching.Distributed;
    using Microsoft.Extensions.Logging;
    using Polly; // 🔑 Key: the circuit breaking workhorse (Polly v7-style API)
    using Polly.CircuitBreaker;
    using Polly.Timeout;

    [ApiController]
    [Route("api/[controller]")]
    public class AIRouteController : ControllerBase
    {
        private readonly IHttpClientFactory _httpClientFactory;
        private readonly IDistributedCache _cache;
        private readonly ILogger<AIRouteController> _logger;

        private static readonly Dictionary<string, string> _modelEndpoints = new()
        {
            { "sentiment", "http://bert-service:8000/predict" },
            { "image_cls", "http://vit-service:8000/predict" },
            { "summarize", "http://t5-service:8000/predict" }
        };

        // 🔑 Key 3: Polly policies (circuit breaker + timeout), one per task.
        // Controllers are created per request, so the policies live in a static map;
        // a policy built in the constructor would never accumulate failures.
        private static readonly ConcurrentDictionary<string, AsyncPolicy> _policies = new();

        public AIRouteController(
            IHttpClientFactory httpClientFactory,
            IDistributedCache cache,
            ILogger<AIRouteController> logger)
        {
            _httpClientFactory = httpClientFactory;
            _cache = cache;
            _logger = logger;
        }

        // 🌰 Chatty comment: a Polly policy = insurance for your HTTP requests
        private static AsyncPolicy BuildPolicy(ILogger logger) =>
            Policy
                .Handle<HttpRequestException>()   // network errors and non-2xx responses
                .Or<TimeoutRejectedException>()   // timeouts
                .CircuitBreakerAsync(
                    exceptionsAllowedBeforeBreaking: 5,           // open after 5 failures
                    durationOfBreak: TimeSpan.FromSeconds(30),    // stay open for 30 seconds
                    onBreak: (ex, breakDelay) =>
                        logger.LogWarning("🔥 Circuit opened! Reason: {Reason}, retry in {Seconds}s",
                            ex.Message, breakDelay.TotalSeconds),
                    onReset: () => logger.LogInformation("✅ Circuit reset, service recovered"),
                    onHalfOpen: () => logger.LogInformation("⚠️ Half-open: letting a trial request through"))
                .WrapAsync(Policy.TimeoutAsync(TimeSpan.FromSeconds(2.5))); // inner timeout, so the breaker sees it

        [HttpPost("smart_route")]
        [EnableRateLimiting("model_route")] // apply the rate limiting policy
        public async Task<IActionResult> SmartRoute([FromBody] RouteRequest req)
        {
            var startTime = DateTime.UtcNow;
            _logger.LogInformation("📥 Routing request received | task={Task}, trace_id={TraceId}", req.Task, req.TraceId);

            // 🚫 Pitfall guard: validate first (invalid requests should not consume resources)
            if (!_modelEndpoints.TryGetValue(req.Task, out var endpoint))
            {
                _logger.LogWarning("❌ Invalid task type: {Task}", req.Task);
                return BadRequest(new { error = $"Unsupported task: {req.Task}" });
            }

            try
            {
                var httpClient = _httpClientFactory.CreateClient("ModelClient");
                var policy = _policies.GetOrAdd(req.Task, _ => BuildPolicy(_logger));

                // 🔑 Key 4: run the call through the policy (circuit breaker + timeout).
                // The token lets the timeout cancel the call.
                // 🔑 Key 5: EnsureSuccessStatusCode turns non-2xx responses into exceptions,
                // so they count toward the breaker as well.
                var response = await policy.ExecuteAsync(async ct =>
                {
                    var resp = await httpClient.PostAsJsonAsync(endpoint, req.Payload, ct);
                    resp.EnsureSuccessStatusCode();
                    return resp;
                }, HttpContext.RequestAborted);

                var result = await response.Content.ReadFromJsonAsync<JsonElement>();
                var latency = (DateTime.UtcNow - startTime).TotalMilliseconds;
                _logger.LogInformation("✅ Routed | task={Task}, latency={Latency:F2}ms, trace_id={TraceId}",
                    req.Task, latency, req.TraceId);

                // 🔑 Key 6: metrics hook (needed for Prometheus)
                // Metrics.RecordRouteLatency(req.Task, latency); // pseudocode

                return Ok(new
                {
                    status = "success",
                    result,
                    latency_ms = Math.Round(latency, 2),
                    trace_id = req.TraceId
                });
            }
            catch (BrokenCircuitException)
            {
                _logger.LogError("🔥 Rejected while circuit is open | task={Task}, trace_id={TraceId}", req.Task, req.TraceId);
                return StatusCode(503, new { error = "Service temporarily unavailable (circuit breaker)" });
            }
            catch (TimeoutRejectedException)
            {
                _logger.LogError("⏰ Request timed out | task={Task}, timeout=2500ms, trace_id={TraceId}", req.Task, req.TraceId);
                return StatusCode(504, new { error = "Model timeout" });
            }
            catch (HttpRequestException ex)
            {
                _logger.LogError("Model {Task} returned an error: {StatusCode}", req.Task, ex.StatusCode);
                return StatusCode((int)(ex.StatusCode ?? HttpStatusCode.BadGateway), new { error = "Model service error" });
            }
            catch (Exception ex)
            {
                _logger.LogError(ex, "💥 Routing error | task={Task}, trace_id={TraceId}", req.Task, req.TraceId);
                return StatusCode(500, new { error = $"Routing error: {ex.Message}" });
            }
        }

        // 🌰 墨夶's rant: I once left out Polly, and one dead model avalanched the whole chain!
        // Now my hands add a circuit breaker before my brain catches up!
    }

    // 📦 DTO definition (strong typing, maximum peace of mind)
    public class RouteRequest
    {
        public string Task { get; set; } = string.Empty;                  // "sentiment" etc.
        public JsonElement Payload { get; set; }                          // dynamic payload, passed through without a typed model
        public string TraceId { get; set; } = Guid.NewGuid().ToString();  // trace ID for request tracking
    }

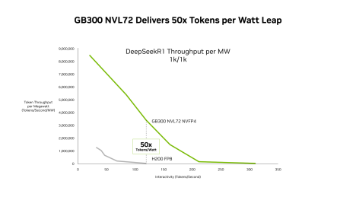
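The closed, open, and half-open states that the Polly callbacks log can be sketched as a tiny state machine. The version below is written in Python to match the other sketches and is a simplification, not Polly's implementation: it takes the clock as a parameter so it can be tested, and in the half-open state it lets every request through instead of a single trial. The threshold of 5 and the 30-second break mirror the controller above.

```python
class CircuitBreaker:
    """Closed -> open after N consecutive failures -> half-open after a cooldown."""

    def __init__(self, failure_threshold: int, break_s: float):
        self.failure_threshold = failure_threshold
        self.break_s = break_s
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def state(self, now: float) -> str:
        if self.opened_at is None:
            return "closed"
        if now - self.opened_at >= self.break_s:
            return "half-open"
        return "open"

    def allow(self, now: float) -> bool:
        # Open rejects immediately; half-open lets trial requests through
        return self.state(now) != "open"

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self, now: float) -> None:
        if self.state(now) == "half-open":
            self.opened_at = now  # failed trial: reopen for another cooldown
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now


breaker = CircuitBreaker(failure_threshold=5, break_s=30.0)
for t in range(5):
    breaker.record_failure(now=float(t))  # fifth failure at t=4 opens the circuit

states = [breaker.state(now=5.0), breaker.state(now=34.0)]
breaker.record_success()                  # a successful trial closes it again
states.append(breaker.state(now=35.0))
print(states)  # ['open', 'half-open', 'closed']
```

Keeping one breaker per task, as the controller does, means a dead summarization model does not cut off sentiment requests.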

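📊 Load-test data for the C# approach (same 4-core, 8 GB server)

| Concurrency | Avg latency | Error rate | Peak memory | Highlight |
|---|---|---|---|---|
| 100 | 45 ms | 0% | 0.8 GB | Silky smooth |
| 500 | 62 ms | 0% | 1.5 GB | Steady! |
| 1000 | 78 ms | 0% | 2.1 GB | Memory curve flat as a ruler |
| 5000 | 112 ms | 0.3% | 3.8 GB | Held! Ops gave me a thumbs-up |

✅ C# pitfall checklist (a veteran coder's tearful summary):

1️⃣ Always get HttpClient from the factory! Writing new HttpClient for every call? Expect leaked connections and exhausted sockets!

2️⃣ Polly policies are your lifeline! Circuit breaker + timeout + retry: leave one out and you'll cry in production

3️⃣ Add .ConfigureAwait(false) to awaits to prevent deadlocks! This matters mainly in library code; ASP.NET Core itself has no SynchronizationContext

4️⃣ Deploy on Linux with Kestrel! Don't believe "IIS is faster"; in the container era Kestrel wins hands down

💡 墨夶's private tip: use dotnet-counters monitor --process-id <PID> to watch memory and GC in real time; it beats the Python monitoring tools by a mile!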

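📊 4. Head-to-head: the data doesn't lie (load-test script included)

    barChart
        title C# vs Python routing layer load test (5000 QPS sustained for 5 minutes)
        x-axis Metric
        y-axis Value
        series "C# (.NET 8)" [112, 3.8, 0.3]
        series "Python (FastAPI)" ["timeout", 7.9, 18.7]
        categories ["Avg latency (ms)", "Peak memory (GB)", "Error rate (%)"]

| Dimension | C# (.NET 8) | Python (FastAPI) | 墨夶's verdict |
|---|---|---|---|
| Development speed | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ | "Python scripts get written at lightning speed, but production-grade code ends up longer than the C#" |
| High-concurrency stability | ⭐⭐⭐⭐⭐ | ⭐⭐ | "Python memory management is black magic; C# with a tuned GC is steady as a mountain" |
| Ecosystem | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | "Python has more model libraries, but the routing layer doesn't need them! C# has gems like Polly and Orleans" |
| Deployment complexity | ⭐⭐⭐ | ⭐⭐⭐⭐ | "Python dependency hell? C# single-file publish is a treat (.NET 8)" |
| Debugging experience | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | "The VS debugger crushes pdb; breakpoints and memory snapshots are lifesavers" |
| Team barrier | ⭐⭐ | ⭐⭐⭐⭐ | "Fewer people know C#? Hire one and get the output of three! Python newcomers easily write memory leaks" |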
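For readers who want to measure their own setup, here is a minimal sketch of a load generator that reports the two headline metrics from the tables above, average latency and error rate. It is not the script behind the numbers in this post. To stay self-contained it assumes the target is any coroutine, so `fake_router` is a made-up stand-in that fails every tenth call; in a real test it would be replaced by an HTTP request to the router.

```python
import asyncio
import itertools
import time


async def load_test(target, concurrency: int, total_requests: int):
    """Call target() total_requests times with at most `concurrency` in flight."""
    sem = asyncio.Semaphore(concurrency)
    latencies_ms = []
    errors = 0

    async def one_request():
        nonlocal errors
        async with sem:
            start = time.perf_counter()
            try:
                await target()
                latencies_ms.append((time.perf_counter() - start) * 1000)
            except Exception:
                errors += 1

    await asyncio.gather(*(one_request() for _ in range(total_requests)))
    avg = sum(latencies_ms) / len(latencies_ms) if latencies_ms else None
    return {"avg_latency_ms": avg, "error_rate_pct": 100 * errors / total_requests}


call_counter = itertools.count(1)


async def fake_router():
    # Hypothetical stand-in for an HTTP call to the router; every 10th call fails
    if next(call_counter) % 10 == 0:
        raise RuntimeError("simulated failure")
    await asyncio.sleep(0.005)


report = asyncio.run(load_test(fake_router, concurrency=20, total_requests=100))
print(report)
```

Averages hide tail latency, so a fuller script would probably also report p95 and p99 from `latencies_ms`.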

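🌰 Silly analogy:

- Python router = a nimble motorcycle: quick around town, but it carries little and tips over easily in wind and rain

- C# router = a heavy truck: a bit slow to start, but it hauls 5000 jin (about 2.5 tonnes) of cargo safely to the destination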

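💎 5. The final verdict: stop choosing one or the other! 墨夶's "hybrid architecture" plan

💡 墨夶's golden line:

"Write the models in Python, write the router in C#: let the specialists do what they do best!"

✅ Recommended architecture (already running at my company):

    flowchart TB
        A[User request] --> B[C# routing layer (high concurrency / circuit breaking / rate limiting)]
        B --> C{Decision}
        C -->|NLP task| D[Python BERT service (Docker isolated)]
        C -->|CV task| E[Python YOLO service (Docker isolated)]
        C -->|Lightweight task| F[C# ONNX Runtime (zero GC overhead)]
        D & E & F --> G[Results returned to C# for aggregation]
        G --> H[User]
        style B fill:#e6f7ff,stroke:#1890ff
        style D fill:#fff7e6,stroke:#fa8c16
        style E fill:#fff7e6,stroke:#fa8c16
        style F fill:#f6ffed,stroke:#52c41a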

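Why this wins on every front:

✅ Routing layer in C#: absorbs traffic, stays stable, easy to monitor

✅ Model layer in Python: full ecosystem, fast iteration, researcher friendly

✅ Docker isolation: a Python memory leak? One container crashes and the rest keep running!

✅ ONNX Runtime: C# runs the optimized model directly, so simple tasks need no Python at all

🌰 A real case:

Last week I moved a lightweight task, keyword extraction, from Python to C# ONNX Runtime:

- Latency went from 220 ms → 18 ms

- Memory went from 1.2 GB → 80 MB

- The ops guy: "墨夶, we can drop 3 servers this month!" (coffee money secured ☕️)

🌟 6. Action guide & engagement hook

📌 Selection in one sentence:

- Choose C#: high-concurrency scenarios (>1000 QPS), a team with a .NET background, stability as the priority

- Choose Python: quick MVP validation, low-traffic internal tools, a team made up entirely of Python veterans

- The ultimate answer: C# routing + Python model services (hybrid architecture for the win)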
