Java Programmer Stage 13: Multi-Turn LLM Conversation Development with Context Memory


引言

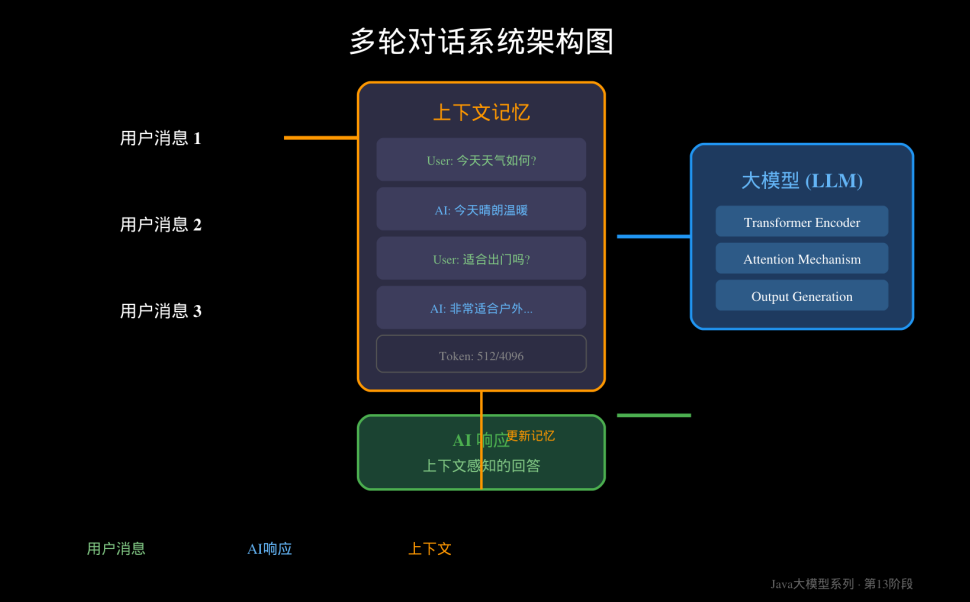

在实际的AI应用开发中,单轮问答往往无法满足复杂业务场景的需求。用户与AI的对话通常是连续、有上下文的——前一轮的讨论内容会直接影响后续的回答。这种"记性"能力,就是多轮会话(Multi-Turn Conversation)的核心价值。

本文将深入讲解如何在Java项目中实现大模型的多轮会话功能,涵盖上下文管理、Redis会话存储、Token计算与截断策略等核心知识点,并提供完整的Spring Boot实战代码。

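I. Why Multi-Turn Conversations Are Needed

1.1 Single-Turn vs. Multi-Turn Conversations

| Feature | Single-turn | Multi-turn |
| --- | --- | --- |
| Context awareness | None; each request is independent | Yes; depends on history |
| Use cases | Simple lookups | Complex tasks |
| User experience | Mechanical | Fluent and natural |
| Implementation complexity | Low | Medium to high |
| Token consumption | Fixed per request | Grows as history accumulates |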

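1.2 Typical Business Scenarios

    // Single-turn example
    // User: What's the weather like today?
    // AI: Sunny, 25 degrees.

    // Multi-turn example
    // User: What's the weather like today?
    // AI: Sunny, 25 degrees.
    // User: Is it a good day for a run?   <- requires the context of "today"
    // AI: Very much so! Run in the morning or evening to avoid the midday heat.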
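The difference between the two dialogues above comes down to what is sent with each request. A minimal sketch, assuming a hypothetical in-memory buffer (`ConversationSketch` and `Turn` are illustrative names, not part of any SDK), shows that the follow-up question only makes sense because earlier turns travel with it:

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical in-memory conversation buffer; not tied to any SDK. */
final class ConversationSketch {

    /** One message in the dialogue: role is "user" or "assistant". */
    record Turn(String role, String content) {}

    private final List<Turn> turns = new ArrayList<>();

    /** Record a finished turn so later requests can see it. */
    void remember(String role, String content) {
        turns.add(new Turn(role, content));
    }

    /** Build the payload for the next call: all earlier turns plus the new question. */
    List<Turn> requestFor(String question) {
        List<Turn> request = new ArrayList<>(turns);
        request.add(new Turn("user", question));
        return request;
    }
}
```

After remembering the weather question and its answer, `requestFor("Is it a good day for a run?")` yields three turns, so the model can resolve "today" from the first one.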

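1.3 Technical Challenges

Multi-turn conversations face three core challenges:

1. Context length limits: every model caps its context window (for example, 128K tokens for GPT-4o; Qwen2.5 is listed in this article at 32K, though the limit depends on the variant)

2. Cost control: the number of tokens directly determines the API bill

3. Session state management: storing, querying, and expiring each user's message history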

II. Context Management Strategies

2.1 Four Core Strategies Compared

Strategy 1: Pass the Full History

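    import java.util.ArrayList;
    import java.util.List;

    /**
     * Full-history approach: simple, but at risk of hitting the token limit.
     */
    public class FullHistoryContext {

        private final List<ChatMessage> messages = new ArrayList<>();
        private static final int MAX_CONTEXT_TOKENS = 4096;

        public void addMessage(String role, String content) {
            messages.add(new ChatMessage(role, content));
        }

        public String buildContext() {
            List<String> lines = new ArrayList<>();
            int totalTokens = 0;
            // Walk backwards so that, when the budget runs out, the oldest
            // messages are dropped instead of the newest ones.
            for (int i = messages.size() - 1; i >= 0; i--) {
                ChatMessage msg = messages.get(i);
                int msgTokens = estimateTokens(msg.getContent());
                if (totalTokens + msgTokens > MAX_CONTEXT_TOKENS) {
                    break; // budget exhausted, stop adding
                }
                lines.add(0, msg.getRole() + ": " + msg.getContent());
                totalTokens += msgTokens;
            }
            return String.join("\n", lines);
        }

        private int estimateTokens(String text) {
            // Rough estimate: about 2 characters per token. Real tokenizers differ.
            return text.length() / 2;
        }
    }

Pros: preserves the full context; the logic is simple.

Cons: once the history exceeds the token limit, it is truncated or the request fails.

Strategy 2: Sliding-Window Trimming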

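    import java.util.ArrayList;
    import java.util.List;
    import org.springframework.stereotype.Service;

    /**
     * Sliding-window approach: keep only the most recent N messages.
     */
    @Service
    public class SlidingWindowContext {

        private static final int WINDOW_SIZE = 10; // keep the 10 most recent messages

        public List<ChatMessage> getContextMessages(List<ChatMessage> fullHistory) {
            int from = Math.max(0, fullHistory.size() - WINDOW_SIZE);
            // Copy the range: subList() alone returns a view backed by fullHistory.
            return new ArrayList<>(fullHistory.subList(from, fullHistory.size()));
        }
    }

Best for: general conversation with moderate message density.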
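A fixed message count ignores how long each message is, so a window of ten long messages can still overflow the model. One possible refinement, sketched here under the assumption that a token counter is available as a plain function (`TokenWindow` and its parameters are hypothetical names), is to walk the history from newest to oldest and stop when a token budget is spent:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.ToIntFunction;

/** Hypothetical helper: a sliding window bounded by tokens instead of message count. */
final class TokenWindow {

    /**
     * Returns the longest suffix of the history whose total cost fits the budget,
     * in the original (oldest to newest) order.
     */
    static List<String> newestWithin(List<String> history, int budget,
                                     ToIntFunction<String> tokenCounter) {
        List<String> kept = new ArrayList<>();
        int used = 0;
        for (int i = history.size() - 1; i >= 0; i--) {
            int cost = tokenCounter.applyAsInt(history.get(i));
            if (used + cost > budget) {
                break; // the next-older message no longer fits
            }
            kept.add(0, history.get(i)); // prepend to preserve chronological order
            used += cost;
        }
        return kept;
    }
}
```

The counter is injected so the same loop works with a rough estimate during development and an exact tokenizer later.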

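Strategy 3: Summary Compression

    import java.util.List;
    import java.util.stream.Collectors;
    import org.springframework.stereotype.Service;

    /**
     * Summary-compression approach: let the LLM condense the history while keeping the key facts.
     * OpenAIAsyncClient, Message, and Role stand in for whichever LLM client the project uses;
     * the call chain below is illustrative rather than a specific SDK's API.
     */
    @Service
    public class SummaryCompressionContext {

        private final OpenAIAsyncClient aiClient;

        public SummaryCompressionContext(OpenAIAsyncClient aiClient) {
            this.aiClient = aiClient;
        }

        public String compressHistory(List<ChatMessage> history, String userQuery) {
            // Build the summarization request.
            String summaryPrompt = String.format("""
                    Compress the conversation history below into a concise summary, keeping the important information related to "%s".

                    Conversation history:
                    %s

                    Summary requirements:
                    1. No more than 200 characters
                    2. Keep key entities, preferences, and completed tasks
                    3. Reply in Chinese
                    """,
                    userQuery,
                    formatHistory(history));

            // Ask the LLM for the summary (blocks until the async call completes).
            return aiClient.chat(model -> model
                    .messages(List.of(Message.of(Role.USER, summaryPrompt)))
                    .maxTokens(300)
            ).join().content();
        }

        private String formatHistory(List<ChatMessage> history) {
            return history.stream()
                    .map(m -> m.getRole() + ": " + m.getContent())
                    .collect(Collectors.joining("\n"));
        }
    }

Best for: long conversations where tokens must be used efficiently.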
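Summary compression is usually combined with a short window of verbatim recent messages, so the model sees both the gist of the old turns and the exact wording of the latest ones. The following is a sketch of that composition only; the summarizer is passed in as a function so no particular LLM client is assumed, and `SummaryPlusRecent` is a hypothetical name:

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

/** Hypothetical helper: one summary line for old turns, recent turns kept verbatim. */
final class SummaryPlusRecent {

    static List<String> build(List<String> history, int keepRecent,
                              Function<List<String>, String> summarizer) {
        if (history.size() <= keepRecent) {
            return new ArrayList<>(history); // nothing old enough to summarize
        }
        int split = history.size() - keepRecent;
        List<String> context = new ArrayList<>();
        // Everything before the split is replaced by a single summary entry.
        context.add("[summary] " + summarizer.apply(history.subList(0, split)));
        // The most recent messages are passed through unchanged.
        context.addAll(history.subList(split, history.size()));
        return context;
    }
}
```

In a real service the summarizer would wrap an LLM call such as `compressHistory`, and the summary would typically be cached rather than recomputed on every turn.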

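Strategy 4: Vector Retrieval

    import java.util.List;
    import java.util.stream.Collectors;
    import org.springframework.stereotype.Service;

    /**
     * Vector-retrieval approach: recall the history that is semantically related to the current query.
     * MilvusClient, EmbeddingClient, and the builder types are illustrative wrappers,
     * not the exact Milvus Java SDK API.
     */
    @Service
    public class VectorRetrievalContext {

        private final MilvusClient milvusClient;
        private final EmbeddingClient embeddingClient;

        public VectorRetrievalContext(MilvusClient milvusClient, EmbeddingClient embeddingClient) {
            this.milvusClient = milvusClient;
            this.embeddingClient = embeddingClient;
        }

        public List<ChatMessage> retrieveRelevantHistory(String userQuery, String sessionId, int topK) {
            // 1. Convert the user's question into a vector.
            Embedding queryEmbedding = embeddingClient.embed(userQuery);

            // 2. Search within this session's history vectors.
            SearchParams searchParams = SearchParams.builder()
                    .metricType(MetricType.IP)
                    .topK(topK)
                    .build();

            SearchResults results = milvusClient.search(
                    SearchRequest.builder()
                            .collectionName("chat_history")
                            .searchParams(searchParams)
                            .vectors(List.of(queryEmbedding.getVector()))
                            // Milvus filter expressions compare with "==".
                            // Validate sessionId first: it is concatenated into the expression.
                            .filter("session_id == '" + sessionId + "'")
                            .build()
            );

            // 3. Return the topK most relevant history entries.
            // toChatMessage (not shown) maps one search hit back to a ChatMessage.
            return results.getResults().stream()
                    .map(this::toChatMessage)
                    .collect(Collectors.toList());
        }
    }

Best for: knowledge-intensive conversations that need precise recall of related information.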

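2.2 Choosing a Strategy

| Scenario | Recommended strategy |
| --- | --- |
| Short conversations (<20 turns) | Sliding window |
| Long conversations (>50 turns) | Summary compression |
| Knowledge Q&A | Vector retrieval |
| Simple assistant | Fixed window |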

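III. Token Counting and Truncation

3.1 Token Limits of Mainstream Models

| Model | Context window | Notes |
| --- | --- | --- |
| GPT-4o | 128K tokens | Very large context |
| Claude 3.5 Sonnet | 200K tokens | Largest context in this list |
| Qwen2.5 (通义千问2.5) | 32K tokens | Cost-effective |
| DeepSeek V3 | 64K tokens | Strong China-based option |

These figures change between model versions and deployments (some Qwen2.5 variants, for example, accept longer inputs), so confirm them against each provider's current documentation.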
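The context window is shared by the system prompt, the history, and the reply the model is about to generate, so the space available for history is what remains after the other two are subtracted. A small sketch of that arithmetic follows; `ContextBudget` and its parameter names are hypothetical, and treating "128K" as 128,000 is an assumption:

```java
/** Hypothetical helper that derives the token budget left for conversation history. */
final class ContextBudget {

    /**
     * historyBudget = contextWindow - systemPromptTokens - reservedForReply.
     * Throws if the fixed parts already fill the window.
     */
    static int historyBudget(int contextWindow, int systemPromptTokens, int reservedForReply) {
        int budget = contextWindow - systemPromptTokens - reservedForReply;
        if (budget <= 0) {
            throw new IllegalArgumentException("no room left for history: " + budget);
        }
        return budget;
    }
}
```

For a 128,000-token window, a 200-token system prompt, and 4,096 tokens reserved for the reply, the history budget is 128000 - 200 - 4096 = 123704 tokens.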

3.2 Implementing Token Counting

    import com.knuddels.jtokkit.Encodings;
    import com.knuddels.jtokkit.api.Encoding;
    import com.knuddels.jtokkit.api.EncodingType;
    import java.util.List;
    import org.springframework.stereotype.Service;

    /**
     * tiktoken-style token calculator.
     * Uses JTokkit (Maven: com.knuddels:jtokkit), a JVM tokenizer that implements
     * the tiktoken encodings, in place of the "tiktoken-jvm" artifact.
     */
    @Service
    public class TokenCalculator {

        private final Encoding tokenizer;

        public TokenCalculator() {
            // cl100k_base is the encoding used by GPT-4; other models need their own encoding.
            this.tokenizer = Encodings.newDefaultEncodingRegistry()
                    .getEncoding(EncodingType.CL100K_BASE);
        }

        /** Count the tokens in a piece of text. */
        public int countTokens(String text) {
            if (text == null || text.isEmpty()) {
                return 0;
            }
            return tokenizer.countTokens(text);
        }

        /** Count the tokens of a message list, including formatting overhead. */
        public int countMessagesTokens(List<ChatMessage> messages) {
            int total = 0;
            // Each message carries formatting overhead (role, content, separators): about 4 tokens.
            for (ChatMessage msg : messages) {
                total += countTokens(msg.getContent()) + 4;
            }
            // Extra overhead for priming the completion.
            total += 3;
            return total;
        }

        /** Rough estimate for when no tokenizer dependency is available. */
        public int roughEstimate(String text) {
            if (text == null) return 0;
            // Heuristic: about 2 Chinese characters per token and about 4 other characters per token.
            // Real tokenizers often spend more tokens on Chinese, so treat this as a lower bound.
            int chineseChars = (int) text.chars()
                    .filter(c -> c >= 0x4E00 && c <= 0x9FFF) // CJK Unified Ideographs block
                    .count();
            int otherChars = text.length() - chineseChars;
            return chineseChars / 2 + otherChars / 4;
        }
    }

3.3 Smart Truncation

    import java.util.ArrayList;
    import java.util.List;
    import org.springframework.stereotype.Service;

    /**
     * Multi-turn conversation truncator.
     * Keeps the system prompt and the most recent turns first, and trims the middle.
     */
    @Service
    public class ConversationTruncator {

        private final TokenCalculator tokenCalculator;
        private static final int RESERVED_TOKENS = 500;    // headroom for the model's reply
        private static final int PER_MESSAGE_OVERHEAD = 4; // same estimate as TokenCalculator

        public ConversationTruncator(TokenCalculator tokenCalculator) {
            this.tokenCalculator = tokenCalculator;
        }

        /** Truncate while keeping the head and tail of the conversation. */
        public List<ChatMessage> truncate(List<ChatMessage> messages, int maxTokens, String systemPrompt) {
            int systemTokens = tokenCalculator.countTokens(systemPrompt);
            int availableTokens = maxTokens - systemTokens - RESERVED_TOKENS;

            List<ChatMessage> result = new ArrayList<>();
            result.add(new ChatMessage("system", systemPrompt));

            if (tokenCalculator.countMessagesTokens(messages) <= availableTokens) {
                result.addAll(messages); // everything fits, no truncation needed
                return result;
            }

            // Strategy: keep the head and the tail, trim the middle.
            int keepHeadCount = Math.min(2, messages.size() / 3);
            int keepTailCount = Math.min(5, messages.size() / 2);
            List<ChatMessage> head = messages.subList(0, keepHeadCount);
            List<ChatMessage> tail = messages.subList(messages.size() - keepTailCount, messages.size());

            int usedTokens = tokenCalculator.countMessagesTokens(head)
                    + tokenCalculator.countMessagesTokens(tail);

            if (usedTokens <= availableTokens) {
                result.addAll(head);
                result.add(new ChatMessage("system", "[Some earlier messages omitted...]"));
                result.addAll(tail);
            } else {
                // Not enough room: keep only as much of the tail as fits, newest first.
                result.add(new ChatMessage("system", "[Earlier conversation omitted...]"));
                int remainTokens = availableTokens;
                List<ChatMessage> newTail = new ArrayList<>();
                for (int i = tail.size() - 1; i >= 0; i--) {
                    // Count the per-message overhead too, so this branch agrees with countMessagesTokens.
                    int msgTokens = tokenCalculator.countTokens(tail.get(i).getContent()) + PER_MESSAGE_OVERHEAD;
                    if (remainTokens - msgTokens < 0) {
                        break;
                    }
                    newTail.add(0, tail.get(i));
                    remainTokens -= msgTokens;
                }
                result.addAll(newTail);
            }
            return result;
        }
    }

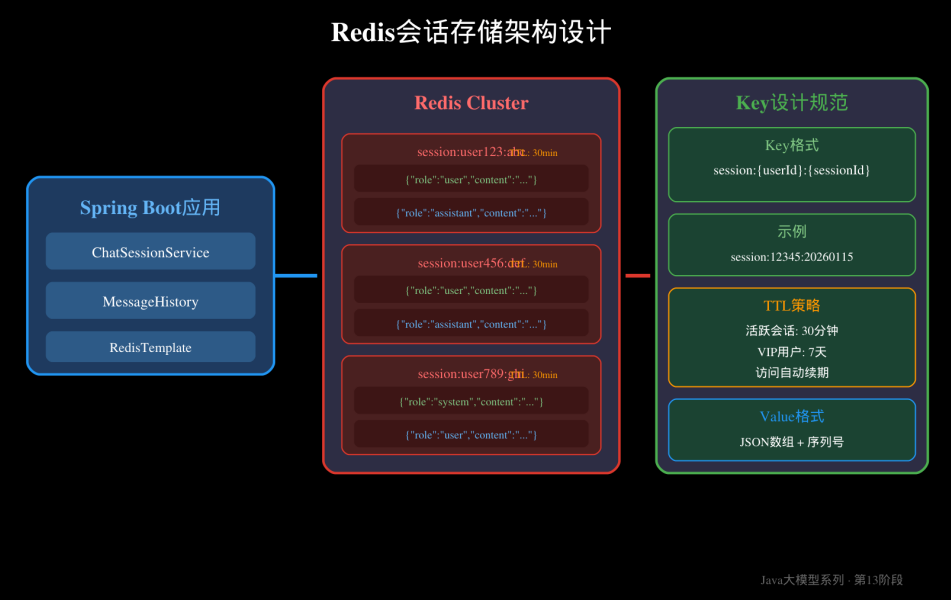

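IV. Redis Session Storage Design

4.1 Key Design Conventions

    session:{userId}:{sessionId}

| Component | Description | Example |
| --- | --- | --- |
| session | Fixed prefix | session |
| userId | User ID | 12345 |
| sessionId | Session ID (optional; the controller falls back to `default`) | abc-def-ghi |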

    import org.springframework.stereotype.Component;

    // A plain helper bean, so @Component is used rather than @Configuration.
    @Component
    public class RedisKeyDesign {

        private static final String SESSION_KEY_PREFIX = "session:";
        private static final String SESSION_LOCK_PREFIX = "session_lock:";

        /** Build the session key. */
        public String generateSessionKey(String userId, String sessionId) {
            return SESSION_KEY_PREFIX + userId + ":" + sessionId;
        }

        /** Build the distributed-lock key (prevents concurrent writes to the same session). */
        public String generateLockKey(String userId, String sessionId) {
            return SESSION_LOCK_PREFIX + userId + ":" + sessionId;
        }
    }
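Because the key is assembled by joining the parts with `:`, a user or session ID that itself contains `:` or `*` would produce an ambiguous key, or an unintended pattern when the key is used for wildcard deletion. A defensive sketch, assuming IDs are not validated anywhere else (`SessionKeys` is a hypothetical name), rejects such parts and can parse a key back into its components:

```java
/** Hypothetical key helper that validates the parts before joining them. */
final class SessionKeys {

    private static final String PREFIX = "session";

    /** Builds "session:{userId}:{sessionId}" after validating both parts. */
    static String build(String userId, String sessionId) {
        requireSafe(userId);
        requireSafe(sessionId);
        return PREFIX + ":" + userId + ":" + sessionId;
    }

    /** Splits a key back into {userId, sessionId}. */
    static String[] parse(String key) {
        String[] parts = key.split(":", -1);
        if (parts.length != 3 || !PREFIX.equals(parts[0])) {
            throw new IllegalArgumentException("not a session key: " + key);
        }
        return new String[] {parts[1], parts[2]};
    }

    private static void requireSafe(String part) {
        if (part == null || part.isEmpty() || part.contains(":") || part.contains("*")) {
            throw new IllegalArgumentException("invalid key part: " + part);
        }
    }
}
```

With the example values from the table, `SessionKeys.build("12345", "abc-def-ghi")` produces `session:12345:abc-def-ghi`.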

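4.2 Session Data Structures

    import java.util.List;
    import java.util.UUID;
    import lombok.AllArgsConstructor;
    import lombok.Builder;
    import lombok.Data;
    import lombok.NoArgsConstructor;

    /**
     * A single chat message.
     * The no-args constructor is needed for Jackson deserialization;
     * the all-args constructor is needed by @Builder once other constructors exist.
     */
    @Data
    @Builder
    @NoArgsConstructor
    @AllArgsConstructor
    public class ChatMessage {

        private String role;      // system, user, assistant
        private String content;   // message body
        private long timestamp;   // creation time, epoch millis
        private String messageId; // unique message ID

        /** Convenience constructor used by the examples in this article. */
        public ChatMessage(String role, String content) {
            this(role, content, System.currentTimeMillis(), UUID.randomUUID().toString());
        }

        public static ChatMessage userMessage(String content) {
            return new ChatMessage("user", content);
        }

        public static ChatMessage assistantMessage(String content) {
            return new ChatMessage("assistant", content);
        }

        public String toJson() {
            return JsonUtil.toJson(this);
        }

        public static ChatMessage fromJson(String json) {
            return JsonUtil.fromJson(json, ChatMessage.class);
        }
    }

    /**
     * A chat session.
     */
    @Data
    @Builder
    public class ChatSession {

        private String sessionId;
        private String userId;
        private List<ChatMessage> messages;
        private long createdAt;
        private long updatedAt;
        private int messageCount;
    }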

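4.3 Redis Storage Service

    /**
     * Redis-backed session storage service.
     * ContextWithTokens is a small result DTO (messages, totalTokens, remainingTokens, isTruncated).
     */
    @Slf4j
    @Service
    @RequiredArgsConstructor
    public class RedisChatSessionService {

        private final StringRedisTemplate redisTemplate;
        private final RedissonClient redissonClient;
        private final RedisKeyDesign keyDesign;
        private final ObjectMapper objectMapper;
        private final TokenCalculator tokenCalculator;

        private static final Duration DEFAULT_TTL = Duration.ofMinutes(30); // default TTL
        private static final Duration VIP_TTL = Duration.ofDays(7);         // TTL for VIP users

        /** Append a message to a session. */
        public void addMessage(String userId, String sessionId, ChatMessage message) {
            String key = keyDesign.generateSessionKey(userId, sessionId);
            // A distributed lock serializes the read-modify-write below.
            // RLock is not AutoCloseable, so it is released in finally.
            RLock lock = redissonClient.getLock(keyDesign.generateLockKey(userId, sessionId));
            lock.lock(5, TimeUnit.SECONDS); // the lease expires after 5 s if never released
            try {
                List<ChatMessage> messages = getMessages(userId, sessionId);
                messages.add(message);
                redisTemplate.opsForValue().set(
                        key, objectMapper.writeValueAsString(messages), getTTL(userId));
                updateSessionMetadata(userId, sessionId);
            } catch (Exception e) {
                log.error("Failed to save message: userId={}, sessionId={}", userId, sessionId, e);
                throw new RuntimeException("Failed to save message", e);
            } finally {
                if (lock.isHeldByCurrentThread()) {
                    lock.unlock();
                }
            }
        }

        /** Load the messages of a session (always a mutable list). */
        public List<ChatMessage> getMessages(String userId, String sessionId) {
            String key = keyDesign.generateSessionKey(userId, sessionId);
            String json = redisTemplate.opsForValue().get(key);
            if (json == null || json.isEmpty()) {
                return new ArrayList<>();
            }
            try {
                ChatMessage[] messages = objectMapper.readValue(json, ChatMessage[].class);
                // Arrays.asList alone is fixed-size, and addMessage needs to append.
                return new ArrayList<>(Arrays.asList(messages));
            } catch (Exception e) {
                log.error("Failed to parse messages: key={}", key, e); // log the key, not the chat content
                return new ArrayList<>();
            }
        }

        /** Load the context together with token counts. */
        public ContextWithTokens getContextWithTokens(String userId, String sessionId, int maxTokens) {
            List<ChatMessage> messages = getMessages(userId, sessionId);
            List<ChatMessage> truncated = truncateMessages(messages, maxTokens);
            return ContextWithTokens.builder()
                    .messages(truncated)
                    .totalTokens(calculateTokens(messages))                  // full stored history
                    .remainingTokens(maxTokens - calculateTokens(truncated)) // room left after truncation
                    .isTruncated(messages.size() != truncated.size())
                    .build();
        }

        /** Extend the TTL of a session and its metadata (used by the interceptor in 4.4). */
        public void refreshTTL(String userId, String sessionId) {
            redisTemplate.expire(keyDesign.generateSessionKey(userId, sessionId), getTTL(userId));
            redisTemplate.expire(metaKey(userId, sessionId), getTTL(userId));
        }

        /** Delete one session and its metadata. */
        public void deleteSession(String userId, String sessionId) {
            redisTemplate.delete(keyDesign.generateSessionKey(userId, sessionId));
            redisTemplate.delete(metaKey(userId, sessionId));
            log.info("Deleted session: userId={}, sessionId={}", userId, sessionId);
        }

        /** Delete all sessions of a user. */
        public void deleteAllUserSessions(String userId) {
            String pattern = keyDesign.generateSessionKey(userId, "*");
            // KEYS walks the whole keyspace and blocks Redis; prefer SCAN on large production instances.
            Set<String> keys = redisTemplate.keys(pattern);
            if (keys != null && !keys.isEmpty()) {
                redisTemplate.delete(keys);
                log.info("Deleted all sessions of user: userId={}, count={}", userId, keys.size());
            }
        }

        private int calculateTokens(List<ChatMessage> messages) {
            return tokenCalculator.countMessagesTokens(messages);
        }

        /** Drop the oldest messages until the rest fits into maxTokens. */
        private List<ChatMessage> truncateMessages(List<ChatMessage> messages, int maxTokens) {
            List<ChatMessage> kept = new ArrayList<>(messages);
            while (kept.size() > 1 && calculateTokens(kept) > maxTokens) {
                kept.remove(0);
            }
            return kept;
        }

        private Duration getTTL(String userId) {
            // A different TTL (for example VIP_TTL) can be returned per user tier.
            return DEFAULT_TTL;
        }

        private String metaKey(String userId, String sessionId) {
            return "session_meta:" + userId + ":" + sessionId;
        }

        private void updateSessionMetadata(String userId, String sessionId) {
            String metaKey = metaKey(userId, sessionId);
            // StringRedisTemplate serializes hash values as strings, so convert the timestamp.
            redisTemplate.opsForHash().put(metaKey, "updatedAt", String.valueOf(System.currentTimeMillis()));
            redisTemplate.expire(metaKey, getTTL(userId));
        }
    }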

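4.4 Automatic TTL Renewal on Access

    /**
     * Interceptor that renews a session's TTL whenever it is accessed.
     */
    @Component
    @RequiredArgsConstructor
    public class SessionAccessInterceptor implements HandlerInterceptor {

        private final RedisChatSessionService sessionService;

        @Override
        public void afterCompletion(HttpServletRequest request,
                                    HttpServletResponse response,
                                    Object handler,
                                    Exception ex) {
            // After the request completes, renew the session that was just accessed.
            String userId = getUserId(request);
            String sessionId = getSessionId(request);
            if (userId != null && sessionId != null) {
                sessionService.refreshTTL(userId, sessionId);
            }
        }

        // Reads the same header and parameter that ChatController uses.
        private String getUserId(HttpServletRequest request) {
            return request.getHeader("X-User-Id");
        }

        private String getSessionId(HttpServletRequest request) {
            return request.getParameter("sessionId");
        }
    }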
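Renewing the TTL on every access gives the session a sliding expiration: it lives as long as the user keeps talking and disappears only after a full idle period. The behaviour can be illustrated without Redis by a small in-memory model; `SlidingTtlStore` is a hypothetical class, and the clock is injected so the timeline can be controlled:

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.LongSupplier;

/** Hypothetical in-memory model of sliding expiration (Redis does this with EXPIRE). */
final class SlidingTtlStore {

    private final Map<String, Long> expiresAt = new HashMap<>();
    private final long ttlMillis;
    private final LongSupplier clock;

    SlidingTtlStore(long ttlMillis, LongSupplier clock) {
        this.ttlMillis = ttlMillis;
        this.clock = clock;
    }

    /** Create the entry or push its deadline one full TTL into the future. */
    void touch(String key) {
        expiresAt.put(key, clock.getAsLong() + ttlMillis);
    }

    /** True while the current time is before the entry's deadline. */
    boolean isAlive(String key) {
        Long deadline = expiresAt.get(key);
        return deadline != null && clock.getAsLong() < deadline;
    }
}
```

With a 1,000 ms TTL, a session touched at t=0 and again at t=900 is still alive at t=1800, because the second touch moved the deadline to t=1900.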

V. Spring Boot Integration in Practice

5.1 Service Implementation

    /**
     * Multi-turn chat service.
     * ChatGPTClient, Message, and Role stand in for the project's LLM client types.
     */
    @Service
    @Slf4j
    @RequiredArgsConstructor
    public class MultiTurnChatService {

        private final RedisChatSessionService sessionService;
        private final TokenCalculator tokenCalculator;
        private final ConversationTruncator truncator;
        private final ChatGPTClient chatClient;

        private static final int DEFAULT_MAX_TOKENS = 4096;

        private static final String SYSTEM_PROMPT = """
                You are a professional technical assistant who specializes in Java development questions.
                Answer concisely and professionally, and keep the conversation coherent.
                """;

        /** Send one turn of a multi-turn conversation. */
        public ChatResponse chat(String userId, String sessionId, String userMessage) {
            long startTime = System.currentTimeMillis();
            try {
                // 1. Save the user's message.
                sessionService.addMessage(userId, sessionId, ChatMessage.userMessage(userMessage));

                // 2. Load the context under the token budget.
                ContextWithTokens context = sessionService.getContextWithTokens(
                        userId, sessionId, DEFAULT_MAX_TOKENS);

                // 3. Build the request.
                List<Message> apiMessages = buildApiMessages(context.getMessages());

                // 4. Call the LLM.
                String aiResponse = chatClient.chat(apiMessages);

                // 5. Save the AI's reply.
                sessionService.addMessage(userId, sessionId, ChatMessage.assistantMessage(aiResponse));

                // 6. Build the response. Usage and cost are based on the history tokens
                //    actually sent after truncation, not on the full stored history.
                int promptTokens = DEFAULT_MAX_TOKENS - context.getRemainingTokens();
                return ChatResponse.builder()
                        .message(aiResponse)
                        .tokensUsed(promptTokens)
                        .remainingTokens(context.getRemainingTokens())
                        .isTruncated(context.isTruncated())
                        .cost(calculateCost(promptTokens))
                        .latencyMs(System.currentTimeMillis() - startTime)
                        .build();
            } catch (Exception e) {
                log.error("Multi-turn chat failed: userId={}, sessionId={}", userId, sessionId, e);
                throw new RuntimeException("AI reply failed: " + e.getMessage(), e);
            }
        }

        private List<Message> buildApiMessages(List<ChatMessage> history) {
            List<Message> messages = new ArrayList<>();
            messages.add(Message.of(Role.SYSTEM, SYSTEM_PROMPT));
            for (ChatMessage msg : history) {
                // Map each stored role explicitly so that "system" entries are not sent as assistant turns.
                Role role = switch (msg.getRole()) {
                    case "user" -> Role.USER;
                    case "system" -> Role.SYSTEM;
                    default -> Role.ASSISTANT;
                };
                messages.add(Message.of(role, msg.getContent()));
            }
            return messages;
        }

        private double calculateCost(int tokens) {
            // Illustrative rate of $0.005 per 1K input tokens (the figure quoted for GPT-4o);
            // prices change, so load the real rate from configuration.
            return tokens / 1000.0 * 0.005;
        }
    }

    /**
     * REST controller.
     * X-User-Id is trusted as-is here for brevity; in production, derive the user
     * from the authenticated principal instead of a client-supplied header.
     */
    @RestController
    @RequestMapping("/api/chat")
    @RequiredArgsConstructor
    public class ChatController {

        private final MultiTurnChatService chatService;
        private final RedisChatSessionService sessionService; // used by /history and /session

        @PostMapping("/send")
        public ApiResult<ChatResponse> send(
                @RequestHeader("X-User-Id") String userId,
                @RequestParam(defaultValue = "default") String sessionId,
                @RequestBody ChatRequest request) {
            ChatResponse response = chatService.chat(userId, sessionId, request.getMessage());
            return ApiResult.success(response);
        }

        @GetMapping("/history")
        public ApiResult<List<ChatMessage>> getHistory(
                @RequestHeader("X-User-Id") String userId,
                @RequestParam(defaultValue = "default") String sessionId) {
            List<ChatMessage> messages = sessionService.getMessages(userId, sessionId);
            return ApiResult.success(messages);
        }

        @DeleteMapping("/session")
        public ApiResult<Void> deleteSession(
                @RequestHeader("X-User-Id") String userId,
                @RequestParam String sessionId) {
            sessionService.deleteSession(userId, sessionId);
            return ApiResult.success();
        }
    }

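5.2 Configuration

    # application.yml
    spring:
      data:
        redis:
          host: localhost
          port: 6379
          password: ${REDIS_PASSWORD:}
          database: 0
          timeout: 5000ms
      redis:
        redisson:
          # Property path as read by redisson-spring-boot-starter; confirm it for your starter version.
          # A single local node uses singleServerConfig; a Redis Cluster would use
          # clusterServersConfig with nodeAddresses instead.
          config: |
            singleServerConfig:
              address: "redis://127.0.0.1:6379"

    chat:
      session:
        default-ttl-minutes: 30
        max-messages-per-session: 1000
        max-tokens-per-request: 4096
      ai:
        model: gpt-4o
        api-key: ${OPENAI_API_KEY}
        base-url: https://api.openai.com/v1
        timeout-seconds: 60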

    @Data // generates the getters and setters that property binding needs on the outer class
    @Configuration
    @ConfigurationProperties(prefix = "chat")
    public class ChatProperties {

        private SessionConfig session = new SessionConfig();
        private AiConfig ai = new AiConfig();

        @Data
        public static class SessionConfig {
            private int defaultTtlMinutes = 30;
            private int maxMessagesPerSession = 1000;
            private int maxTokensPerRequest = 4096;
        }

        @Data
        public static class AiConfig {
            private String model = "gpt-4o";
            private String apiKey;
            private String baseUrl = "https://api.openai.com/v1";
            private int timeoutSeconds = 60;
        }
    }

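VI. Real-Time Communication over WebSocket

6.1 WebSocket Configuration

    @Configuration
    @EnableWebSocket
    @RequiredArgsConstructor
    public class WebSocketConfig implements WebSocketConfigurer {

        // Inject the @Component handler from 6.2 instead of constructing a second
        // instance with "new", which would bypass dependency injection.
        private final ChatWebSocketHandler chatWebSocketHandler;

        @Override
        public void registerWebSocketHandlers(WebSocketHandlerRegistry registry) {
            registry.addHandler(chatWebSocketHandler, "/ws/chat")
                    .setAllowedOrigins("*"); // demo only; list trusted origins in production
        }
    }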

6.2 WebSocket Handler

    @Slf4j
    @Component
    @RequiredArgsConstructor
    public class ChatWebSocketHandler extends TextWebSocketHandler {

        private final MultiTurnChatService chatService;
        private final ConcurrentHashMap<String, WebSocketSession> sessions = new ConcurrentHashMap<>();

        @Override
        public void afterConnectionEstablished(WebSocketSession session) {
            sessions.put(session.getId(), session);
            log.info("WebSocket connection opened: sessionId={}", session.getId());
        }

        @Override
        protected void handleTextMessage(WebSocketSession session, TextMessage message) {
            try {
                ChatWebSocketRequest request = JsonUtil.fromJson(
                        message.getPayload(), ChatWebSocketRequest.class);

                // Process asynchronously so the WebSocket thread is not blocked by the LLM call.
                CompletableFuture.runAsync(() -> {
                    try {
                        ChatResponse response = chatService.chat(
                                request.getUserId(),
                                request.getSessionId(),
                                request.getMessage());
                        send(session, JsonUtil.toJson(response));
                    } catch (Exception e) {
                        send(session, JsonUtil.toJson(ApiResult.error(e.getMessage())));
                    }
                });
            } catch (Exception e) {
                log.error("Failed to handle WebSocket message", e);
            }
        }

        /**
         * sendMessage() throws IOException and must not be called concurrently
         * for the same session, so it is wrapped and synchronized here.
         */
        private void send(WebSocketSession session, String payload) {
            synchronized (session) {
                try {
                    if (session.isOpen()) {
                        session.sendMessage(new TextMessage(payload));
                    }
                } catch (IOException e) {
                    log.warn("Failed to send WebSocket message: sessionId={}", session.getId(), e);
                }
            }
        }

        @Override
        public void afterConnectionClosed(WebSocketSession session, CloseStatus status) {
            sessions.remove(session.getId());
            log.info("WebSocket connection closed: sessionId={}, status={}", session.getId(), status);
        }
    }

6.3 Front-End Connection Example

    // WebSocket client example
    class ChatWebSocket {
        constructor(userId, sessionId) {
            this.userId = userId;
            this.sessionId = sessionId;
            this.ws = null;
            this.messageCallback = null;
            this.shouldReconnect = true;
        }

        connect() {
            this.shouldReconnect = true;
            this.ws = new WebSocket('ws://localhost:8080/ws/chat');

            this.ws.onopen = () => {
                console.log('WebSocket connected');
            };

            this.ws.onmessage = (event) => {
                const response = JSON.parse(event.data);
                if (this.messageCallback) {
                    this.messageCallback(response);
                }
            };

            this.ws.onerror = (error) => {
                console.error('WebSocket error:', error);
            };

            this.ws.onclose = () => {
                // Do not reconnect after an intentional disconnect().
                if (!this.shouldReconnect) return;
                console.log('WebSocket closed; reconnecting in 5 seconds...');
                setTimeout(() => this.connect(), 5000);
            };
        }

        send(message) {
            if (this.ws && this.ws.readyState === WebSocket.OPEN) {
                this.ws.send(JSON.stringify({
                    userId: this.userId,
                    sessionId: this.sessionId,
                    message: message
                }));
            }
        }

        onMessage(callback) {
            this.messageCallback = callback;
        }

        disconnect() {
            this.shouldReconnect = false;
            if (this.ws) {
                this.ws.close();
            }
        }
    }

VII. Performance Optimization and Best Practices

7.1 Cache Optimization

    /**
     * Local cache for hot sessions.
     */
    @Service
    public class SessionCacheService {

        private final Cache<String, List<ChatMessage>> sessionCache;

        public SessionCacheService() {
            // Cache hot sessions with Caffeine.
            this.sessionCache = Caffeine.newBuilder()
                    .maximumSize(10000)
                    .expireAfterWrite(Duration.ofMinutes(5))
                    .recordStats()
                    .build();
        }

        public List<ChatMessage> getCachedSession(String key) {
            return sessionCache.getIfPresent(key);
        }

        public void cacheSession(String key, List<ChatMessage> messages) {
            sessionCache.put(key, messages);
        }

        public void invalidate(String key) {
            sessionCache.invalidate(key);
        }

        public CacheStats getStats() {
            return sessionCache.stats();
        }
    }

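7.2 Concurrency Control

    /**
     * Session concurrency controller: prevents out-of-order messages when the same
     * user sends requests from several devices at once.
     * The semaphores live in this JVM only; across multiple instances, use the
     * Redisson distributed lock from section 4.3.
     */
    @Service
    public class SessionConcurrencyControl {

        // One entry per user; entries are never evicted in this simple version.
        private final ConcurrentHashMap<String, Semaphore> userSemaphores = new ConcurrentHashMap<>();

        /** Get (or create) the semaphore for a user. */
        public Semaphore getUserSemaphore(String userId) {
            return userSemaphores.computeIfAbsent(userId, k -> new Semaphore(1));
        }

        /** Run the action while holding the user's single permit. */
        public <T> T executeWithLock(String userId, String sessionId, Supplier<T> action) {
            Semaphore semaphore = getUserSemaphore(userId);
            semaphore.acquireUninterruptibly();
            try {
                return action.get();
            } finally {
                semaphore.release();
            }
        }
    }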
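The controller above serializes everything per user, so two unrelated sessions of the same user also wait for each other. If that is too coarse, one option is to lock per user and session pair. The following is a single-JVM sketch under that assumption; `PerSessionLocks` is a hypothetical name, and like the semaphore version it never evicts idle entries:

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.locks.ReentrantLock;
import java.util.function.Supplier;

/** Hypothetical per-session lock registry (single JVM only). */
final class PerSessionLocks {

    private final ConcurrentHashMap<String, ReentrantLock> locks = new ConcurrentHashMap<>();

    /** Runs the action while holding the lock for this user and session. */
    <T> T withLock(String userId, String sessionId, Supplier<T> action) {
        ReentrantLock lock = locks.computeIfAbsent(userId + ":" + sessionId, k -> new ReentrantLock());
        lock.lock();
        try {
            return action.get();
        } finally {
            lock.unlock(); // always released, even if the action throws
        }
    }

    /** Number of distinct sessions that have been locked so far. */
    int trackedSessions() {
        return locks.size();
    }
}
```

`ReentrantLock` is used instead of a one-permit semaphore so that a nested call for the same session on the same thread does not deadlock.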

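7.3 Monitoring Metrics

    /**
     * Metrics for multi-turn conversations.
     */
    @Component
    public class ChatMetrics {

        private final MeterRegistry meterRegistry;
        private final AtomicInteger activeSessions = new AtomicInteger();

        public ChatMetrics(MeterRegistry meterRegistry) {
            this.meterRegistry = meterRegistry;
            // Register the gauge against a counter that this class owns.
            Gauge.builder("chat.active.sessions", activeSessions, AtomicInteger::get)
                    .register(meterRegistry);
        }

        public void sessionOpened() {
            activeSessions.incrementAndGet();
        }

        public void sessionClosed() {
            activeSessions.decrementAndGet();
        }

        public void recordChatRequest(String userId, int tokensUsed, long latencyMs) {
            // userId is deliberately not used as a tag: one time series per user
            // would create unbounded label cardinality in the metrics backend.
            Counter.builder("chat.requests.total")
                    .register(meterRegistry)
                    .increment();

            Timer.builder("chat.request.latency")
                    .register(meterRegistry)
                    .record(Duration.ofMillis(latencyMs));

            DistributionSummary.builder("chat.tokens.used")
                    .register(meterRegistry)
                    .record(tokensUsed);
        }
    }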

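VIII. Summary

This article walked through an end-to-end approach to building multi-turn LLM conversations in Java.

Key Takeaways

1. Context management: choose the strategy that fits the scenario (sliding window, summary compression, or vector retrieval)

2. Token control: count accurately and truncate intelligently to stay within the model's limit

3. Redis storage: design the key structure and TTL policy carefully so sessions persist as intended

4. Real-time communication: WebSocket keeps a long-lived connection and improves the user experience

5. Performance optimization: cache hot sessions, control concurrency, and monitor metrics

Technology Recommendations

| Component | Recommended option |
| --- | --- |
| Session storage | Redis + Redisson distributed lock |
| Token counting | A tiktoken-compatible JVM tokenizer (exact counts) |
| Framework | Spring Boot 3.x |
| Real-time communication | WebSocket |
| Monitoring | Micrometer + Prometheus |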

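Caveats

1. Token cost: count tokens on every request to avoid unnecessary spend

2. Session security: encrypt sensitive information at rest and set a reasonable TTL

3. Error handling: network timeouts and model rate limits both need a fallback plan

4. Data backup: persist important sessions to a database on a regular schedule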
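For the error-handling caveat, a common first line of defence against timeouts and rate limits is to retry with exponential backoff before falling back. A minimal sketch follows, assuming the LLM call surfaces failures as unchecked exceptions; `Retry` and its parameters are hypothetical, and a production version would normally retry only on errors known to be transient:

```java
import java.util.function.Supplier;

/** Hypothetical retry helper with exponential backoff. */
final class Retry {

    /**
     * Calls the supplier up to maxAttempts times, sleeping baseDelayMillis,
     * then 2x, 4x, ... between attempts. Rethrows the last failure.
     */
    static <T> T withBackoff(Supplier<T> call, int maxAttempts, long baseDelayMillis) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return call.get();
            } catch (RuntimeException e) {
                last = e;
                if (attempt == maxAttempts) {
                    break; // out of attempts, rethrow below
                }
                try {
                    Thread.sleep(baseDelayMillis << (attempt - 1)); // delay doubles each time
                } catch (InterruptedException ie) {
                    Thread.currentThread().interrupt();
                    throw new IllegalStateException("interrupted during backoff", ie);
                }
            }
        }
        throw last;
    }
}
```

When all attempts fail, the caller can catch the rethrown exception and return a degraded response, such as a canned apology or an answer from a cheaper model.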

With these techniques in hand, you can build stable, efficient multi-turn conversations in a Java project.

---

Author: 付雷刚 (洛水石)

Original content; please credit the source when reposting.
