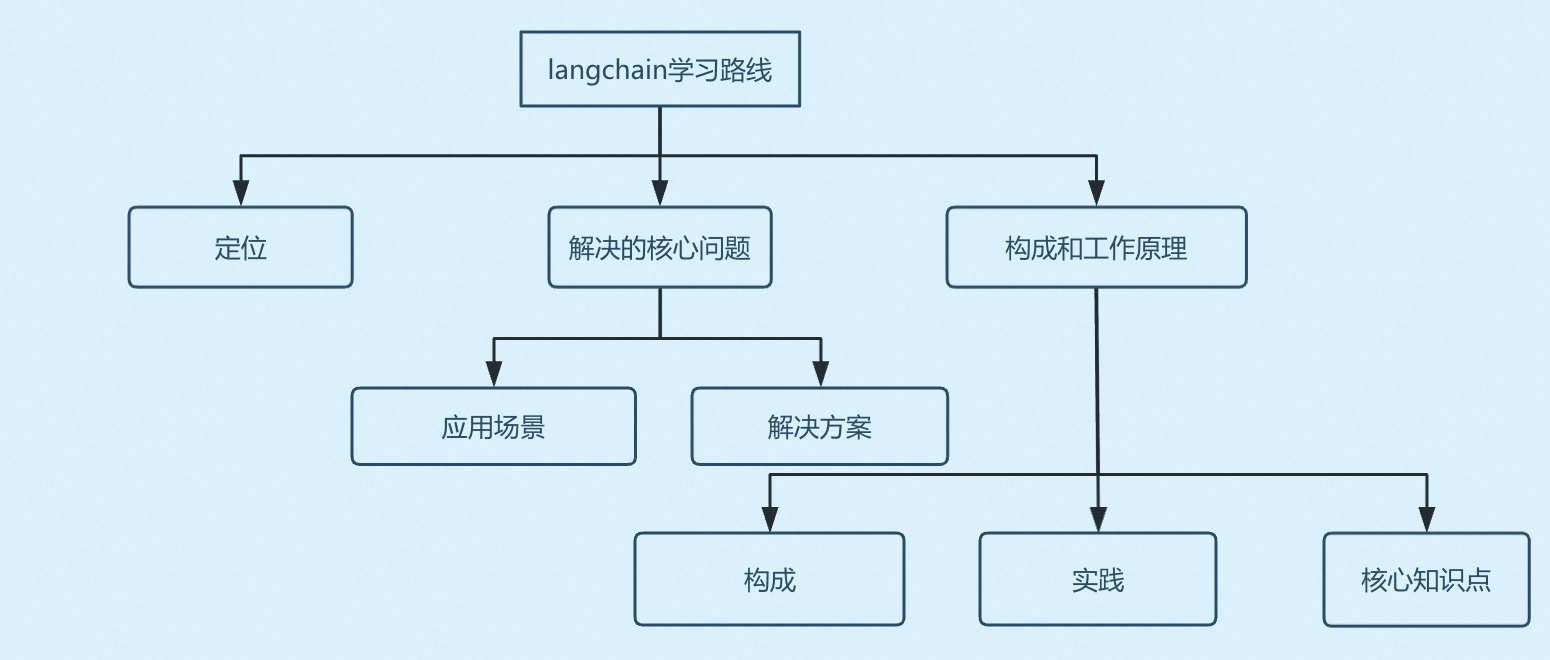

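LangChain Open-Source Framework: Design Philosophy and Source Code Study

This article covers LangChain's positioning, core design philosophy, and framework design approach, then studies its implementation at the source-code level, including the concrete design of configuration management and autonomous decision orchestration.

LangChain's positioning

LangChain is an open-source framework that simplifies AI application development and lowers the learning curve. Its core design idea is to combine LLMs with external data and computing capabilities in order to build applications that can actually be deployed.

Core problems it solves

Pain points

With a traditional approach, developers building an AI application must not only understand the business thoroughly but also deal with low-level technical concerns such as model management, application context management, tool invocation, resource access, and message state management. Development and maintenance costs are high and extensibility is weak, as detailed below: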

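| Pain point | Traditional / native development approach |
| --- | --- |
| Model adaptation | Each vendor's API is different (OpenAI vs. Baidu vs. local models), so code is tightly coupled |
| Context management | The conversation history list must be maintained by hand, and token limits checked manually |
| Task complexity | A single prompt can rarely complete a complex task, and the logic ends up hard-coded |
| External interaction | Functions must be hand-written to parse LLM output and then call APIs, which is error-prone |
| Private data | Vector indexes, chunking strategies, and retrieval logic must be built in-house, which is difficult |
| Debugging and operations | Calls are a black box, so it is hard to trace which prompt step went wrong |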

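Solution

If you were an architect facing these problems, how would you solve them? Take a moment to think about it.

1. Positioning

Build a standard development framework that manages, in one place, the model calls, context storage, tool parsing, model output parsing, resource access, and RAG integration that AI applications rely on. Each capability is encapsulated and exposed through a unified interface, so developers only call the corresponding function and no longer need to care about the implementation details.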
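To make the "unified interface" idea concrete, here is a minimal sketch in plain Java. Everything in it is hypothetical: `SimpleChatModel`, `VendorAModel`, and `VendorBModel` are illustrative names, not LangChain or LangChain4j types, and the "vendor" request shapes are invented stand-ins.

```java
import java.util.List;
import java.util.Map;

// Hypothetical unified interface: application code depends on this, not on a vendor SDK.
interface SimpleChatModel {
    String chat(String prompt);
}

// Stand-in for a vendor whose (imaginary) API expects a list of role/content maps.
class VendorAModel implements SimpleChatModel {
    @Override
    public String chat(String prompt) {
        List<Map<String, String>> payload =
                List.of(Map.of("role", "user", "content", prompt));
        // A real adapter would send the payload over HTTP; here we just echo it.
        return "A:" + payload.get(0).get("content");
    }
}

// Stand-in for a vendor whose (imaginary) API expects one trimmed string.
class VendorBModel implements SimpleChatModel {
    @Override
    public String chat(String prompt) {
        return "B:" + prompt.trim();
    }
}

class UnifiedModelSketch {
    // Written once against the interface; swapping vendors needs no change here.
    static String ask(SimpleChatModel model, String question) {
        return model.chat(question);
    }

    public static void main(String[] args) {
        System.out.println(ask(new VendorAModel(), "hello"));
        System.out.println(ask(new VendorBModel(), "hello"));
    }
}
```

The point of the design is that vendor-specific request shaping lives inside each adapter, so the coupling described in the pain-point table stays out of business code.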

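2. Capabilities needed for AI application development

LangChain is a standard open-source framework that simplifies AI application development. To build a high-quality, enterprise-grade AI application with it, the framework must cover every capability such an application needs. The technologies an AI application may use are: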

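- LLM model management (connecting to models from different vendors, model I/O)

- Application context management (application context storage)

- Tool management (how tools are declared, how the model calls them)

- Parameter management (the LLM builds tool-call requests; responses are injected into the application context automatically)

- Chain orchestration (support for orchestrating complex tasks)

- RAG integration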

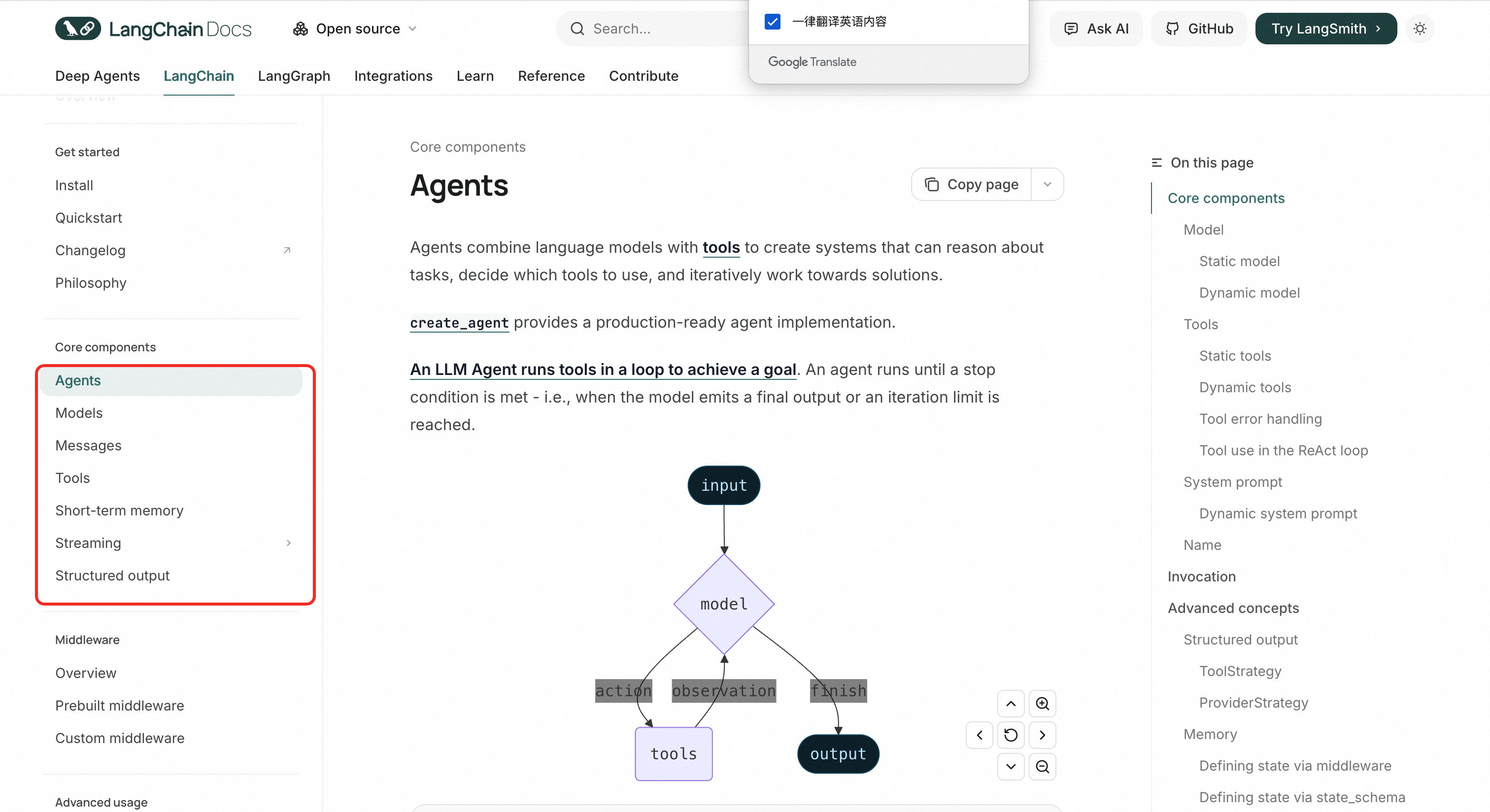

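The core components in the official LangChain documentation are organized in a similar way.

LangChain's design not only combines LLMs with external data and computing capabilities, but also provides modularity, composability, and unified abstract interfaces, so developers can combine and use the pieces freely.

Composition and practice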

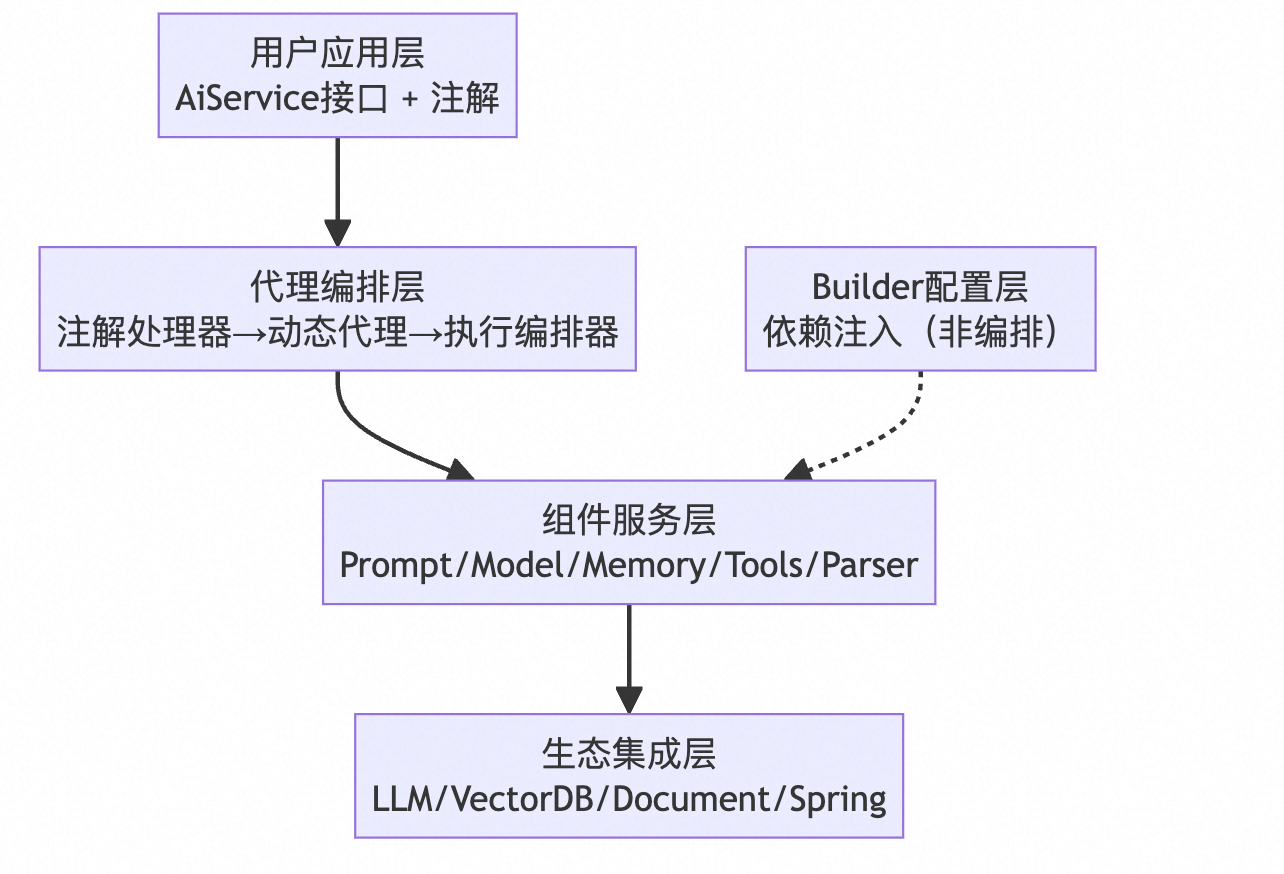

The Java version, LangChain4j, is used as the example for this study.

LangChain4j framework architecture diagram

Agent (agent module)

Positioning:

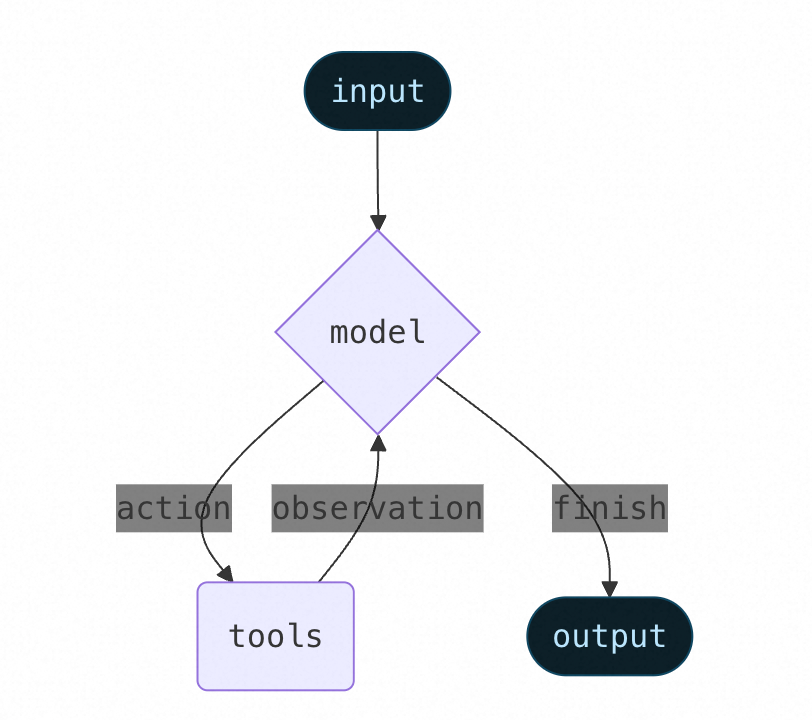

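An agent combines an LLM with tools, RAG, and similar components to create a system that can reason iteratively about a task, call tools, and work toward a solution.

The core role of an agent is to plan the task flow autonomously, based on the LLM's reasoning ability, and to call registered tools (Tools) as needed to solve complex problems. From this positioning, an agent must provide two core capabilities: 1. configuration management, and 2. orchestration and decision-making.

Configuration management: exposes, through interfaces, the ability to combine models, tools, prompts, session management, token handling, and so on. It mainly serves the build phase of development.

Decision orchestration: implemented as a fixed orchestration flow encapsulated inside the framework, covering memory, RAG, messages, tools, result aggregation, and exception handling. Its core is the autonomous, model-driven tool-calling decision made at run time. In LangChain4j there are two tool-calling modes:

- Model-driven tool calling: the model decides which tool to call (via function calling). Here userMessage is the user's original question, and the model decides on the tool call based on it.

- Declarative tool calling: with AiServices, the framework scans methods annotated with @Tool, generates the corresponding metadata, and passes it to the model. The model returns the tool-call arguments, and the framework then executes the tool.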

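Configuration management

The Python version implements this with create_agent, and the Java version with AiServices. It is mainly responsible for registering and integrating models, tools, middleware, and configuration.

Core actions: configure, register, bind, manage

At this stage the framework acts like a "system architect", assembling the parts into a complete machine. No AI inference or tool call has happened yet.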

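- Register tools (Tools): scan @Tool annotations, generate ToolSpecification metadata, and build a "function name -> Java method" mapping table.

- Bind the model (Model): inject a concrete ChatModel implementation (named ChatLanguageModel in older releases), such as OpenAI or Ollama.

- Configure memory (Memory/Token): initialize the ChatMemory strategy (for example fixed window or summary compression) and set the token management rules.

- Mount middleware (Listeners/Filters): register event listeners that will intercept later requests and responses, write logs, and monitor token usage.

- Inject the prompt: set the default SystemMessage template.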
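The first item in the list above, scanning annotations to build a "function name -> Java method" table, can be sketched with plain reflection. This is a simplified illustration, not LangChain4j's implementation: `@SketchTool`, `WeatherTools`, and `ToolRegistrySketch` are hypothetical names, and the real framework also derives a parameter schema that this sketch omits.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical marker annotation, standing in for LangChain4j's @Tool.
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface SketchTool {
    String value(); // description that would be sent to the model
}

class WeatherTools {
    @SketchTool("Returns the current weather for a city")
    public String getWeather(String city) {
        return "Sunny in " + city;
    }

    // Not annotated, so the registry must ignore it.
    public String helper() {
        return "internal";
    }
}

class ToolRegistrySketch {
    private final Map<String, Method> methods = new LinkedHashMap<>();
    private final Map<String, Object> owners = new LinkedHashMap<>();
    private final Map<String, String> descriptions = new LinkedHashMap<>();

    // Configuration time: scan once and record metadata; nothing is executed.
    ToolRegistrySketch register(Object objectWithTools) {
        for (Method method : objectWithTools.getClass().getDeclaredMethods()) {
            SketchTool tool = method.getAnnotation(SketchTool.class);
            if (tool != null) {
                methods.put(method.getName(), method);
                owners.put(method.getName(), objectWithTools);
                descriptions.put(method.getName(), tool.value());
            }
        }
        return this;
    }

    Map<String, String> descriptions() {
        return descriptions;
    }

    // Run time: look the method up by the name the model asked for and invoke it.
    Object execute(String toolName, Object... arguments) {
        Method method = methods.get(toolName);
        if (method == null) {
            throw new IllegalArgumentException("Unknown tool: " + toolName);
        }
        try {
            return method.invoke(owners.get(toolName), arguments);
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("Tool execution failed: " + toolName, e);
        }
    }

    public static void main(String[] args) {
        ToolRegistrySketch registry = new ToolRegistrySketch().register(new WeatherTools());
        System.out.println(registry.descriptions());
        System.out.println(registry.execute("getWeather", "Paris"));
    }
}
```

Separating `register` from `execute` mirrors the split between the build phase and the run phase described above.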

Python version

The function that builds an agent:

create_agent(

model: str | BaseChatModel,

tools: Sequence[BaseTool | Callable[..., Any] | dict[str, Any]] | None = None,

*,

system_prompt: str | SystemMessage | None = None,

middleware: Sequence[AgentMiddleware[StateT_co, ContextT]] = (),

response_format: ResponseFormat[ResponseT] | type[ResponseT] | dict[str, Any] | None = None,

state_schema: type[AgentState[ResponseT]] | None = None,

context_schema: type[ContextT] | None = None,

checkpointer: Checkpointer | None = None,

store: BaseStore | None = None,

interrupt_before: list[str] | None = None,

interrupt_after: list[str] | None = None,

debug: bool = False,

name: str | None = None,

cache: BaseCache[Any] | None = None

) -> CompiledStateGraph[AgentState[ResponseT], ContextT, _InputAgentState, _OutputAgentState[ResponseT]]

Demo:

from langchain.agents import create_agent

agent = create_agent("openai:gpt-5", tools=tools)

Java version


package dev.langchain4j.service;

import dev.langchain4j.Internal;

import dev.langchain4j.agent.tool.ToolExecutionRequest;

import dev.langchain4j.agent.tool.ToolSpecification;

import dev.langchain4j.data.message.AiMessage;

import dev.langchain4j.data.message.ChatMessage;

import dev.langchain4j.data.message.ToolExecutionResultMessage;

import dev.langchain4j.guardrail.InputGuardrail;

import dev.langchain4j.guardrail.OutputGuardrail;

import dev.langchain4j.guardrail.config.InputGuardrailsConfig;

import dev.langchain4j.guardrail.config.OutputGuardrailsConfig;

import dev.langchain4j.internal.ValidationUtils;

import dev.langchain4j.memory.ChatMemory;

import dev.langchain4j.memory.chat.ChatMemoryProvider;

import dev.langchain4j.model.chat.ChatModel;

import dev.langchain4j.model.chat.StreamingChatModel;

import dev.langchain4j.model.chat.request.ChatRequest;

import dev.langchain4j.model.moderation.Moderation;

import dev.langchain4j.model.moderation.ModerationModel;

import dev.langchain4j.observability.api.event.AiServiceEvent;

import dev.langchain4j.observability.api.listener.AiServiceListener;

import dev.langchain4j.rag.DefaultRetrievalAugmentor;

import dev.langchain4j.rag.RetrievalAugmentor;

import dev.langchain4j.rag.content.retriever.ContentRetriever;

import dev.langchain4j.service.tool.BeforeToolExecution;

import dev.langchain4j.service.tool.ToolArgumentsErrorHandler;

import dev.langchain4j.service.tool.ToolExecution;

import dev.langchain4j.service.tool.ToolExecutionErrorHandler;

import dev.langchain4j.service.tool.ToolExecutor;

import dev.langchain4j.service.tool.ToolProvider;

import dev.langchain4j.spi.ServiceHelper;

import dev.langchain4j.spi.services.AiServicesFactory;

import java.util.Arrays;

import java.util.Collection;

import java.util.List;

import java.util.Map;

import java.util.Optional;

import java.util.Set;

import java.util.concurrent.ExecutionException;

import java.util.concurrent.Executor;

import java.util.concurrent.Future;

import java.util.function.BiFunction;

import java.util.function.Consumer;

import java.util.function.Function;

import java.util.function.UnaryOperator;

import java.util.stream.Collectors;

public abstract class AiServices<T> {

protected final AiServiceContext context;

private boolean contentRetrieverSet = false;

private boolean retrievalAugmentorSet = false;

protected AiServices(AiServiceContext context) {

this.context = context;

}

public static <T> T create(Class<T> aiService, ChatModel chatModel) {

return builder(aiService).chatModel(chatModel).build();

}

public static <T> T create(Class<T> aiService, StreamingChatModel streamingChatModel) {

return builder(aiService).streamingChatModel(streamingChatModel).build();

}

public static <T> AiServices<T> builder(Class<T> aiService) {

AiServiceContext context = AiServiceContext.create(aiService);

return builder(context);

}

@Internal

public static <T> AiServices<T> builder(AiServiceContext context) {

return (AiServices)(AiServices.FactoryHolder.aiServicesFactory != null ? AiServices.FactoryHolder.aiServicesFactory.create(context) : new DefaultAiServices(context));

}

public AiServices<T> chatModel(ChatModel chatModel) {

this.context.chatModel = chatModel;

return this;

}

public AiServices<T> streamingChatModel(StreamingChatModel streamingChatModel) {

this.context.streamingChatModel = streamingChatModel;

return this;

}

public AiServices<T> systemMessage(String systemMessage) {

return this.systemMessageProvider((ignore) -> {

return systemMessage;

});

}

public AiServices<T> systemMessageProvider(Function<Object, String> systemMessageProvider) {

this.context.systemMessageProvider = systemMessageProvider.andThen(Optional::ofNullable);

return this;

}

public AiServices<T> userMessage(String userMessage) {

return this.userMessageProvider((ignore) -> {

return userMessage;

});

}

public AiServices<T> userMessageProvider(Function<Object, String> userMessageProvider) {

this.context.userMessageProvider = userMessageProvider.andThen(Optional::ofNullable);

return this;

}

public AiServices<T> chatMemory(ChatMemory chatMemory) {

if (chatMemory != null) {

this.context.initChatMemories(chatMemory);

}

return this;

}

public AiServices<T> chatMemoryProvider(ChatMemoryProvider chatMemoryProvider) {

if (chatMemoryProvider != null) {

this.context.initChatMemories(chatMemoryProvider);

}

return this;

}

public AiServices<T> chatRequestTransformer(UnaryOperator<ChatRequest> chatRequestTransformer) {

this.context.chatRequestTransformer = (req, memId) -> {

return (ChatRequest)chatRequestTransformer.apply(req);

};

return this;

}

public AiServices<T> chatRequestTransformer(BiFunction<ChatRequest, Object, ChatRequest> chatRequestTransformer) {

this.context.chatRequestTransformer = chatRequestTransformer;

return this;

}

public AiServices<T> moderationModel(ModerationModel moderationModel) {

this.context.moderationModel = moderationModel;

return this;

}

public AiServices<T> tools(Object... objectsWithTools) {

return this.tools((Collection)Arrays.asList(objectsWithTools));

}

public AiServices<T> tools(Collection<Object> objectsWithTools) {

this.context.toolService.tools(objectsWithTools);

return this;

}

public AiServices<T> toolProvider(ToolProvider toolProvider) {

this.context.toolService.toolProvider(toolProvider);

return this;

}

public AiServices<T> tools(Map<ToolSpecification, ToolExecutor> tools) {

this.context.toolService.tools(tools);

return this;

}

public AiServices<T> tools(Map<ToolSpecification, ToolExecutor> tools, Set<String> immediateReturnToolNames) {

this.context.toolService.tools(tools, immediateReturnToolNames);

return this;

}

public AiServices<T> executeToolsConcurrently() {

this.context.toolService.executeToolsConcurrently();

return this;

}

public AiServices<T> executeToolsConcurrently(Executor executor) {

this.context.toolService.executeToolsConcurrently(executor);

return this;

}

public AiServices<T> maxSequentialToolsInvocations(int maxSequentialToolsInvocations) {

this.context.toolService.maxSequentialToolsInvocations(maxSequentialToolsInvocations);

return this;

}

public AiServices<T> beforeToolExecution(Consumer<BeforeToolExecution> beforeToolExecution) {

this.context.toolService.beforeToolExecution(beforeToolExecution);

return this;

}

public AiServices<T> afterToolExecution(Consumer<ToolExecution> afterToolExecution) {

this.context.toolService.afterToolExecution(afterToolExecution);

return this;

}

public AiServices<T> hallucinatedToolNameStrategy(Function<ToolExecutionRequest, ToolExecutionResultMessage> hallucinatedToolNameStrategy) {

this.context.toolService.hallucinatedToolNameStrategy(hallucinatedToolNameStrategy);

return this;

}

public AiServices<T> toolArgumentsErrorHandler(ToolArgumentsErrorHandler handler) {

this.context.toolService.argumentsErrorHandler(handler);

return this;

}

public AiServices<T> toolExecutionErrorHandler(ToolExecutionErrorHandler handler) {

this.context.toolService.executionErrorHandler(handler);

return this;

}

public AiServices<T> contentRetriever(ContentRetriever contentRetriever) {

if (this.retrievalAugmentorSet) {

throw IllegalConfigurationException.illegalConfiguration("Only one out of [retriever, contentRetriever, retrievalAugmentor] can be set");

} else {

this.contentRetrieverSet = true;

this.context.retrievalAugmentor = DefaultRetrievalAugmentor.builder().contentRetriever((ContentRetriever)ValidationUtils.ensureNotNull(contentRetriever, "contentRetriever")).build();

return this;

}

}

public AiServices<T> retrievalAugmentor(RetrievalAugmentor retrievalAugmentor) {

if (this.contentRetrieverSet) {

throw IllegalConfigurationException.illegalConfiguration("Only one out of [retriever, contentRetriever, retrievalAugmentor] can be set");

} else {

this.retrievalAugmentorSet = true;

this.context.retrievalAugmentor = (RetrievalAugmentor)ValidationUtils.ensureNotNull(retrievalAugmentor, "retrievalAugmentor");

return this;

}

}

public <I extends AiServiceEvent> AiServices<T> registerListener(AiServiceListener<I> listener) {

this.context.eventListenerRegistrar.register((AiServiceListener)ValidationUtils.ensureNotNull(listener, "listener"));

return this;

}

public AiServices<T> registerListeners(AiServiceListener<?>... listeners) {

this.context.eventListenerRegistrar.register(listeners);

return this;

}

public AiServices<T> registerListeners(Collection<? extends AiServiceListener<?>> listeners) {

this.context.eventListenerRegistrar.register(listeners);

return this;

}

public <I extends AiServiceEvent> AiServices<T> unregisterListener(AiServiceListener<I> listener) {

this.context.eventListenerRegistrar.unregister((AiServiceListener)ValidationUtils.ensureNotNull(listener, "listener"));

return this;

}

public AiServices<T> unregisterListeners(AiServiceListener<?>... listeners) {

this.context.eventListenerRegistrar.unregister(listeners);

return this;

}

public AiServices<T> unregisterListeners(Collection<? extends AiServiceListener<?>> listeners) {

this.context.eventListenerRegistrar.unregister(listeners);

return this;

}

public AiServices<T> inputGuardrailsConfig(InputGuardrailsConfig inputGuardrailsConfig) {

this.context.guardrailServiceBuilder.inputGuardrailsConfig(inputGuardrailsConfig);

return this;

}

public AiServices<T> outputGuardrailsConfig(OutputGuardrailsConfig outputGuardrailsConfig) {

this.context.guardrailServiceBuilder.outputGuardrailsConfig(outputGuardrailsConfig);

return this;

}

public <I extends InputGuardrail> AiServices<T> inputGuardrailClasses(List<Class<? extends I>> guardrailClasses) {

this.context.guardrailServiceBuilder.inputGuardrailClasses(guardrailClasses);

return this;

}

public <I extends InputGuardrail> AiServices<T> inputGuardrailClasses(Class<? extends I>... guardrailClasses) {

this.context.guardrailServiceBuilder.inputGuardrailClasses(guardrailClasses);

return this;

}

public <I extends InputGuardrail> AiServices<T> inputGuardrails(List<I> guardrails) {

this.context.guardrailServiceBuilder.inputGuardrails(guardrails);

return this;

}

public <I extends InputGuardrail> AiServices<T> inputGuardrails(I... guardrails) {

this.context.guardrailServiceBuilder.inputGuardrails(guardrails);

return this;

}

public <O extends OutputGuardrail> AiServices<T> outputGuardrailClasses(List<Class<? extends O>> guardrailClasses) {

this.context.guardrailServiceBuilder.outputGuardrailClasses(guardrailClasses);

return this;

}

public <O extends OutputGuardrail> AiServices<T> outputGuardrailClasses(Class<? extends O>... guardrailClasses) {

this.context.guardrailServiceBuilder.outputGuardrailClasses(guardrailClasses);

return this;

}

public <O extends OutputGuardrail> AiServices<T> outputGuardrails(List<O> guardrails) {

this.context.guardrailServiceBuilder.outputGuardrails(guardrails);

return this;

}

public <O extends OutputGuardrail> AiServices<T> outputGuardrails(O... guardrails) {

this.context.guardrailServiceBuilder.outputGuardrails(guardrails);

return this;

}

public AiServices<T> storeRetrievedContentInChatMemory(boolean storeRetrievedContentInChatMemory) {

this.context.storeRetrievedContentInChatMemory = storeRetrievedContentInChatMemory;

return this;

}

public abstract T build();

protected void performBasicValidation() {

if (this.context.chatModel == null && this.context.streamingChatModel == null) {

throw IllegalConfigurationException.illegalConfiguration("Please specify either chatModel or streamingChatModel");

}

}

public static List<ChatMessage> removeToolMessages(List<ChatMessage> messages) {

return (List)messages.stream().filter((it) -> {

return !(it instanceof ToolExecutionResultMessage);

}).filter((it) -> {

return !(it instanceof AiMessage) || !((AiMessage)it).hasToolExecutionRequests();

}).collect(Collectors.toList());

}

public static void verifyModerationIfNeeded(Future<Moderation> moderationFuture) {

if (moderationFuture != null) {

try {

Moderation moderation = (Moderation)moderationFuture.get();

if (moderation.flagged()) {

throw new ModerationException(String.format("Text \"%s\" violates content policy", moderation.flaggedText()), moderation);

}

} catch (ExecutionException | InterruptedException var2) {

Exception e = var2;

throw new RuntimeException(e);

}

}

}

private static class FactoryHolder {

private static final AiServicesFactory aiServicesFactory = (AiServicesFactory)ServiceHelper.loadFactory(AiServicesFactory.class);

private FactoryHolder() {

}

}

}

Demo

// Register the agent in the main program

WeatherAssistant assistant = AiServices.builder(WeatherAssistant.class)

.chatModel(chatModel)

.chatMemory(MessageWindowChatMemory.withMaxMessages(20))

.tools(weatherService) // weather tool

.build();

Autonomous decision orchestration

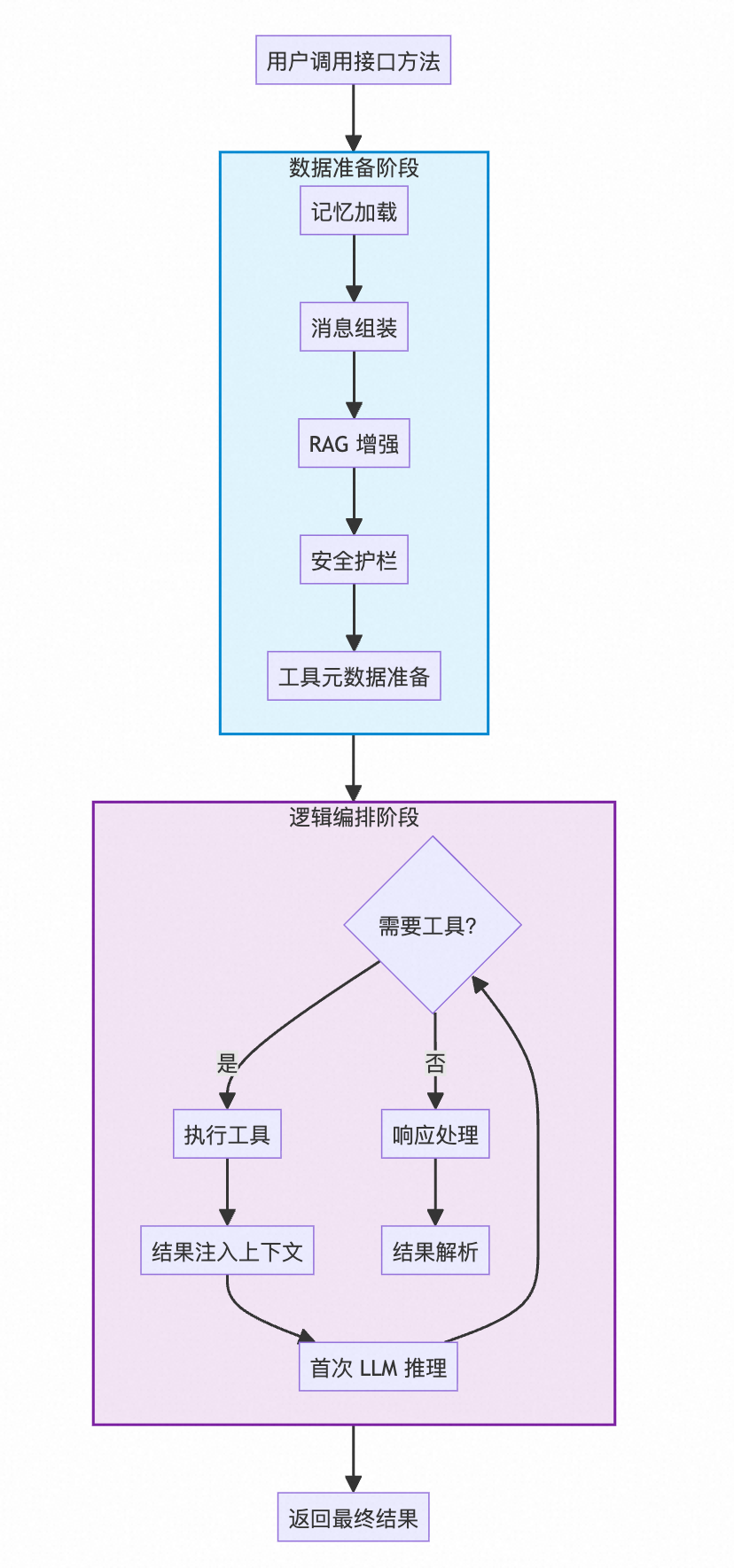

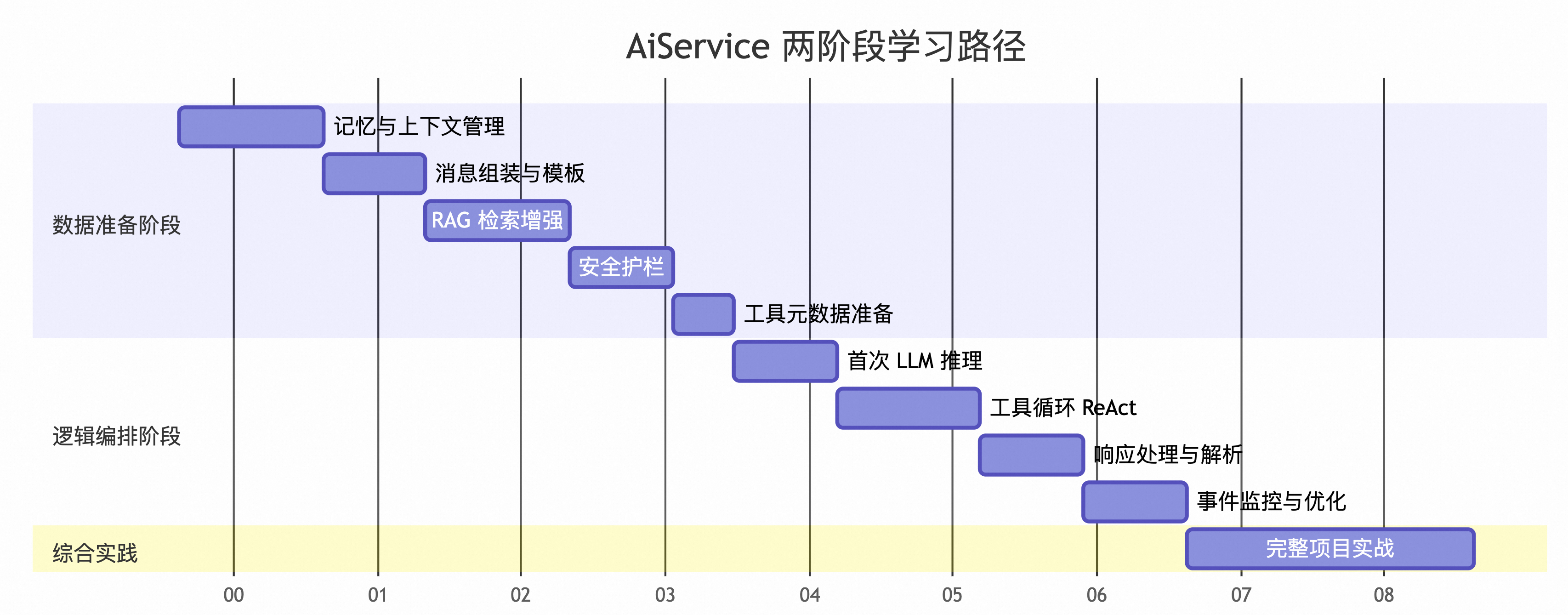

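Following the learning path, autonomous decision-making is implemented in two steps: 1. a data preparation phase, and 2. a logic orchestration phase.

While building through the AiServices builder, the developer combines the model, application context, tools, parameters, execution logic, and other required information. When build() is called, a dynamic proxy is created, and that proxy carries both the registered data and the autonomous orchestration logic.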
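The "builder plus dynamic proxy" pattern can be sketched with the JDK's `java.lang.reflect.Proxy`. This is a minimal illustration under stated assumptions: `Assistant` and `ProxyBuilderSketch` are hypothetical names, and the model is reduced to a `Function<String, String>` so the example stays self-contained.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;
import java.util.function.Function;

// The developer only declares an interface; no implementation class is written.
interface Assistant {
    String chat(String userMessage);
}

class ProxyBuilderSketch {
    private Function<String, String> model; // stand-in for a chat model

    // Fluent setter, in the same style as the builder methods shown earlier.
    ProxyBuilderSketch model(Function<String, String> model) {
        this.model = model;
        return this;
    }

    // build() validates the configuration, then returns a proxy whose handler
    // runs the fixed pipeline (here: a single model call) on every method call.
    @SuppressWarnings("unchecked")
    <T> T build(Class<T> serviceInterface) {
        if (model == null) {
            throw new IllegalStateException("Please specify a model");
        }
        Function<String, String> configuredModel = model;
        InvocationHandler handler = (proxy, method, args) -> {
            if (method.getDeclaringClass() == Object.class) {
                // Keep equals/hashCode/toString away from the model.
                if (method.getName().equals("equals")) {
                    return proxy == args[0];
                }
                if (method.getName().equals("hashCode")) {
                    return System.identityHashCode(proxy);
                }
                return serviceInterface.getName() + "@proxy";
            }
            return configuredModel.apply((String) args[0]);
        };
        return (T) Proxy.newProxyInstance(
                serviceInterface.getClassLoader(),
                new Class<?>[] {serviceInterface},
                handler);
    }

    public static void main(String[] args) {
        Assistant assistant = new ProxyBuilderSketch()
                .model(text -> "echo: " + text)
                .build(Assistant.class);
        System.out.println(assistant.chat("hi"));
    }
}
```

Because the handler sees every call on the interface, the framework can run the same preparation and orchestration steps for any method the developer declares.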

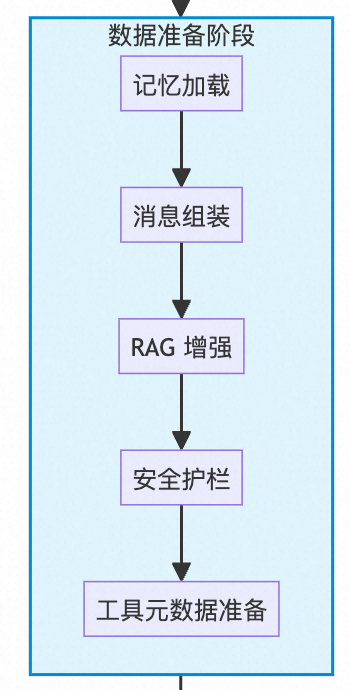

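Data preparation phase

Core goal: prepare all the input data and context that LLM inference needs.

Source scope: from the start of invoke() up to the ChatExecutor.execute() call. This covers safety, parameter, and format validation, plus preparation of memory, messages, RAG, and tool metadata.

The steps are introduced below in preparation order, following the source code.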

- Memory context management
  - Get the memoryId of the current session
  - Load the conversation history and keep it in a variable for later use
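The lookup described in this list, one memory per memoryId that is created on first use, can be sketched as follows. `WindowMemory` and `MemoryServiceSketch` are hypothetical names; the bounded window loosely imitates the `MessageWindowChatMemory.withMaxMessages(20)` call from the demo, without claiming to match its exact eviction rules.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// A bounded message window: the oldest message is evicted once the cap is exceeded.
class WindowMemory {
    private final int maxMessages;
    private final Deque<String> messages = new ArrayDeque<>();

    WindowMemory(int maxMessages) {
        this.maxMessages = maxMessages;
    }

    void add(String message) {
        messages.addLast(message);
        while (messages.size() > maxMessages) {
            messages.removeFirst();
        }
    }

    List<String> messages() {
        return new ArrayList<>(messages); // defensive copy
    }
}

class MemoryServiceSketch {
    private final Map<Object, WindowMemory> memories = new ConcurrentHashMap<>();
    private final int maxMessages;

    MemoryServiceSketch(int maxMessages) {
        this.maxMessages = maxMessages;
    }

    // Same memoryId always yields the same memory; a new id creates a fresh one.
    WindowMemory getOrCreate(Object memoryId) {
        return memories.computeIfAbsent(memoryId, id -> new WindowMemory(maxMessages));
    }

    public static void main(String[] args) {
        MemoryServiceSketch service = new MemoryServiceSketch(2);
        service.getOrCreate("user-1").add("first");
        service.getOrCreate("user-1").add("second");
        service.getOrCreate("user-1").add("third");
        System.out.println(service.getOrCreate("user-1").messages());
        System.out.println(service.getOrCreate("user-2").messages());
    }
}
```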

public Object invoke(Method method, Object[] args, InvocationContext invocationContext) {

Object memoryId = invocationContext.chatMemoryId();

ChatMemory chatMemory = context.hasChatMemory()

? context.chatMemoryService.getOrCreateChatMemory(memoryId)

: null;

.....

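}

- Message loading
  - Parse and load the system message according to its declaration
  - Get the user message template
  - Resolve the dynamic parameters and inject them into the user message template, producing a user message the LLM can understand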
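The last step in this list, injecting dynamic parameters into a user message template, amounts to placeholder substitution. The sketch below assumes a `{{name}}` placeholder syntax and uses a hypothetical `TemplateSketch` class; it does not reproduce the framework's own template engine.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class TemplateSketch {
    // Assumed placeholder syntax: {{name}}, with optional spaces inside the braces.
    private static final Pattern VARIABLE = Pattern.compile("\\{\\{\\s*(\\w+)\\s*\\}\\}");

    // Replaces every placeholder with the matching value; fails fast if one is missing.
    static String render(String template, Map<String, ?> variables) {
        Matcher matcher = VARIABLE.matcher(template);
        StringBuilder out = new StringBuilder();
        while (matcher.find()) {
            String name = matcher.group(1);
            if (!variables.containsKey(name)) {
                throw new IllegalArgumentException("Missing value for variable: " + name);
            }
            // quoteReplacement keeps '$' and '\' in values from being treated as regex syntax.
            String value = String.valueOf(variables.get(name));
            matcher.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        matcher.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(render(
                "What is the weather in {{city}} on {{ day }}?",
                Map.of("city", "Paris", "day", "Monday")));
    }
}
```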

public Object invoke(Method method, Object[] args, InvocationContext invocationContext) {

......

// Parse and load the system message according to its declaration

Optional<SystemMessage> systemMessage = prepareSystemMessage(memoryId, method, args);

// Get the user message template

var userMessageTemplate = getUserMessageTemplate(memoryId, method, args);

// Resolve the dynamic parameters and inject them into the user message template to form a user message the LLM can understand

var variables = InternalReflectionVariableResolver.findTemplateVariables(

userMessageTemplate, method, args);

UserMessage originalUserMessage =

prepareUserMessage(method, args, userMessageTemplate, variables);

context.eventListenerRegistrar.fireEvent(AiServiceStartedEvent.builder()

.invocationContext(invocationContext)

.systemMessage(systemMessage)

.userMessage(originalUserMessage)

.build());

UserMessage userMessageForAugmentation = originalUserMessage;

AugmentationResult augmentationResult = null;

.....

}

- RAG augmentation

// If RAG is configured, use it to augment the user's input message

if (context.retrievalAugmentor != null) {

List<ChatMessage> chatMemoryMessages = chatMemory != null ? chatMemory.messages() : null;

Metadata metadata = Metadata.builder()

.chatMessage(userMessageForAugmentation)

.systemMessage(systemMessage.orElse(null))

.chatMemory(chatMemoryMessages)

.invocationContext(invocationContext)

.build();

AugmentationRequest augmentationRequest =

new AugmentationRequest(userMessageForAugmentation, metadata);

augmentationResult = context.retrievalAugmentor.augment(augmentationRequest);

userMessageForAugmentation = (UserMessage) augmentationResult.chatMessage();

}

- Safety guardrails

// Run a safety filter over the user message before it is sent to the LLM

var commonGuardrailParam = GuardrailRequestParams.builder()

.chatMemory(chatMemory)

.augmentationResult(augmentationResult)

.userMessageTemplate(userMessageTemplate)

.invocationContext(invocationContext)

.aiServiceListenerRegistrar(context.eventListenerRegistrar)

.variables(variables)

.build();

UserMessage userMessage = invokeInputGuardrails(

context.guardrailService(), method, userMessageForAugmentation, commonGuardrailParam);
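As a rough illustration of what an input guardrail chain does, the sketch below passes the user message through a list of checks, each of which may rewrite the message or reject it. This is a deliberate simplification: `GuardrailSketch` and its two guardrails are hypothetical, and the real guardrail API returns richer result objects than a plain string.

```java
import java.util.List;
import java.util.function.UnaryOperator;

class GuardrailSketch {
    // Hypothetical guardrail: rejects blank input by throwing.
    static final UnaryOperator<String> REJECT_EMPTY = text -> {
        if (text == null || text.isBlank()) {
            throw new IllegalArgumentException("Empty user message");
        }
        return text;
    };

    // Hypothetical guardrail: rewrites the message by masking every digit.
    static final UnaryOperator<String> MASK_DIGITS = text -> text.replaceAll("\\d", "*");

    // Applies guardrails in order; each one sees the output of the previous one.
    static String applyAll(String userMessage, List<UnaryOperator<String>> guardrails) {
        String current = userMessage;
        for (UnaryOperator<String> guardrail : guardrails) {
            current = guardrail.apply(current);
        }
        return current;
    }

    public static void main(String[] args) {
        System.out.println(applyAll("my card is 1234", List.of(REJECT_EMPTY, MASK_DIGITS)));
    }
}
```

Running the guardrails after RAG augmentation, as the excerpt above does, means the checks see the message in the form that will actually reach the model.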

- Multimodal handling
  - Load the multimodal input content
  - Merge the multimodal content with the text input

Optional<List<Content>> maybeContents = findContents(method, args);

if (maybeContents.isPresent()) {

List<Content> allContents = new ArrayList<>();

for (Content content : maybeContents.get()) {

if (content == null) { // placeholder

allContents.addAll(userMessage.contents());

} else {

allContents.add(content);

}

}

userMessage = userMessage.toBuilder().contents(allContents).build();

}
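The merge rule in the excerpt above treats a null entry as a placeholder marking where the text message's contents belong. The sketch below isolates that rule with strings standing in for content objects; `ContentMergeSketch` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class ContentMergeSketch {
    // declaredContents: contents found on the method arguments, where null marks
    // the position at which the text message's own contents should be spliced in.
    static List<String> merge(List<String> declaredContents, List<String> textContents) {
        List<String> all = new ArrayList<>();
        for (String content : declaredContents) {
            if (content == null) {
                all.addAll(textContents); // placeholder: insert the text contents here
            } else {
                all.add(content);
            }
        }
        return all;
    }

    public static void main(String[] args) {
        // Arrays.asList is used because List.of does not allow null elements.
        List<String> declared = Arrays.asList("image:a.png", null, "audio:b.mp3");
        System.out.println(merge(declared, List.of("Describe this")));
    }
}
```

Using a placeholder keeps the relative order of text and non-text parts under the caller's control.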

- Load the conversation history and assemble the message list

List<ChatMessage> messages = new ArrayList<>();

if (context.hasChatMemory()) {

systemMessage.ifPresent(chatMemory::add);

messages.addAll(chatMemory.messages());

if (context.storeRetrievedContentInChatMemory) {

chatMemory.add(userMessage);

} else {

chatMemory.add(originalUserMessage);

}

messages.add(userMessage);

} else {

systemMessage.ifPresent(messages::add);

messages.add(userMessage);

}

- Prepare tool metadata

ToolServiceContext toolServiceContext =

context.toolService.createContext(invocationContext, userMessage);

Source function

Object proxyInstance = Proxy.newProxyInstance(

context.aiServiceClass.getClassLoader(),

new Class<?>[] {context.aiServiceClass},

new InvocationHandler() {

// Pre-processing performed on every call once the proxy has been created

@Override

public Object invoke(Object proxy, Method method, Object[] args) throws Throwable {

if (method.isDefault()) {

return InvocationHandler.invokeDefault(proxy, method, args);

}

if (method.getDeclaringClass() == Object.class) {

switch (method.getName()) {

case "equals":

return proxy == args[0];

case "hashCode":

return System.identityHashCode(proxy);

case "toString":

return context.aiServiceClass.getName() + "@"

+ Integer.toHexString(System.identityHashCode(proxy));

default:

throw new IllegalStateException("Unexpected Object method: " + method);

}

}

// Entry point for chat memory access

if (method.getDeclaringClass() == ChatMemoryAccess.class) {

return handleChatMemoryAccess(method, args);

}

// Validate the annotated parameters

validateParameters(context.aiServiceClass, method);

// Wrap the configured inputs in an invocation context so the data preparation phase has the parameters it needs

InvocationParameters invocationParameters = findArgumentOfType(

InvocationParameters.class, args, method.getParameters())

.orElseGet(InvocationParameters::new);

InvocationContext invocationContext = InvocationContext.builder()

.invocationId(UUID.randomUUID())

.interfaceName(context.aiServiceClass.getName())

.methodName(method.getName())

.methodArguments(args != null ? Arrays.asList(args) : List.of())

.chatMemoryId(findMemoryId(method, args).orElse(ChatMemoryService.DEFAULT))

.invocationParameters(invocationParameters)

.managedParameters(LangChain4jManaged.current())

.timestampNow()

.build();

try {

return invoke(method, args, invocationContext);

} catch (Exception ex) {

context.eventListenerRegistrar.fireEvent(AiServiceErrorEvent.builder()

.invocationContext(invocationContext)

.error(ex)

.build());

throw ex;

}

}

// Core data preparation phase

public Object invoke(Method method, Object[] args, InvocationContext invocationContext) {

Object memoryId = invocationContext.chatMemoryId();

ChatMemory chatMemory = context.hasChatMemory()

? context.chatMemoryService.getOrCreateChatMemory(memoryId)

: null;

Optional<SystemMessage> systemMessage = prepareSystemMessage(memoryId, method, args);

var userMessageTemplate = getUserMessageTemplate(memoryId, method, args);

var variables = InternalReflectionVariableResolver.findTemplateVariables(

userMessageTemplate, method, args);

UserMessage originalUserMessage =

prepareUserMessage(method, args, userMessageTemplate, variables);

context.eventListenerRegistrar.fireEvent(AiServiceStartedEvent.builder()

.invocationContext(invocationContext)

.systemMessage(systemMessage)

.userMessage(originalUserMessage)

.build());

UserMessage userMessageForAugmentation = originalUserMessage;

AugmentationResult augmentationResult = null;

if (context.retrievalAugmentor != null) {

List<ChatMessage> chatMemoryMessages = chatMemory != null ? chatMemory.messages() : null;

Metadata metadata = Metadata.builder()

.chatMessage(userMessageForAugmentation)

.systemMessage(systemMessage.orElse(null))

.chatMemory(chatMemoryMessages)

.invocationContext(invocationContext)

.build();

AugmentationRequest augmentationRequest =

new AugmentationRequest(userMessageForAugmentation, metadata);

augmentationResult = context.retrievalAugmentor.augment(augmentationRequest);

userMessageForAugmentation = (UserMessage) augmentationResult.chatMessage();

}

var commonGuardrailParam = GuardrailRequestParams.builder()

.chatMemory(chatMemory)

.augmentationResult(augmentationResult)

.userMessageTemplate(userMessageTemplate)

.invocationContext(invocationContext)

.aiServiceListenerRegistrar(context.eventListenerRegistrar)

.variables(variables)

.build();

UserMessage userMessage = invokeInputGuardrails(

context.guardrailService(), method, userMessageForAugmentation, commonGuardrailParam);

Type returnType = context.returnType != null ? context.returnType : method.getGenericReturnType();

boolean streaming = returnType == TokenStream.class || canAdaptTokenStreamTo(returnType);

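// Check whether the model supports JSON-schema structured output (RESPONSE_FORMAT_JSON_SCHEMA); if not, format instructions are appended to the user message below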

boolean supportsJsonSchema = supportsJsonSchema();

Optional<JsonSchema> jsonSchema = Optional.empty();

// Check whether the return type is an image (multimodal output)

boolean returnsImage = isImage(returnType);

if (supportsJsonSchema && !streaming && !returnsImage) {

jsonSchema = serviceOutputParser.jsonSchema(returnType);

}

if ((!supportsJsonSchema || jsonSchema.isEmpty()) && !streaming && !returnsImage) {

userMessage = appendOutputFormatInstructions(returnType, userMessage);

}

Optional<List<Content>> maybeContents = findContents(method, args);

if (maybeContents.isPresent()) {

List<Content> allContents = new ArrayList<>();

for (Content content : maybeContents.get()) {

if (content == null) { // placeholder

allContents.addAll(userMessage.contents());

} else {

allContents.add(content);

}

}

userMessage = userMessage.toBuilder().contents(allContents).build();

}

List<ChatMessage> messages = new ArrayList<>();

if (context.hasChatMemory()) {

systemMessage.ifPresent(chatMemory::add);

messages.addAll(chatMemory.messages());

if (context.storeRetrievedContentInChatMemory) {

chatMemory.add(userMessage);

} else {

chatMemory.add(originalUserMessage);

}

messages.add(userMessage);

} else {

systemMessage.ifPresent(messages::add);

messages.add(userMessage);

}

Future<Moderation> moderationFuture = triggerModerationIfNeeded(method, messages);

ToolServiceContext toolServiceContext =

context.toolService.createContext(invocationContext, userMessage);

if (streaming) {

var tokenStreamParameters = AiServiceTokenStreamParameters.builder()

.messages(messages)

.toolSpecifications(toolServiceContext.toolSpecifications())

.toolExecutors(toolServiceContext.toolExecutors())

.toolArgumentsErrorHandler(context.toolService.argumentsErrorHandler())

.toolExecutionErrorHandler(context.toolService.executionErrorHandler())

.toolExecutor(context.toolService.executor())

.retrievedContents(

augmentationResult != null ? augmentationResult.contents() : null)

.context(context)

.invocationContext(invocationContext)

.commonGuardrailParams(commonGuardrailParam)

.methodKey(method)

.build();

TokenStream tokenStream = new AiServiceTokenStream(tokenStreamParameters);

// TODO moderation

if (returnType == TokenStream.class) {

return tokenStream;

} else {

return adapt(tokenStream, returnType);

}

}

ResponseFormat responseFormat = null;

if (supportsJsonSchema && jsonSchema.isPresent()) {

responseFormat = ResponseFormat.builder()

.type(JSON)

.jsonSchema(jsonSchema.get())

.build();

}

ChatRequestParameters parameters =

chatRequestParameters(method, args, toolServiceContext, responseFormat);

ChatRequest chatRequest = context.chatRequestTransformer.apply(

ChatRequest.builder()

.messages(messages)

.parameters(parameters)

.build(),

memoryId);

......

ChatExecutor chatExecutor = ChatExecutor.builder(context.chatModel)

.chatRequest(chatRequest)

.invocationContext(invocationContext)

.eventListenerRegistrar(context.eventListenerRegistrar)

.build();

/* ===== Boundary: data preparation ends here, model execution begins ===== */

ChatResponse chatResponse = chatExecutor.execute();

context.eventListenerRegistrar.fireEvent(AiServiceResponseReceivedEvent.builder()

.invocationContext(invocationContext)

.response(chatResponse)

.request(chatRequest)

.build());

verifyModerationIfNeeded(moderationFuture);

boolean isReturnTypeResult = typeHasRawClass(returnType, Result.class);

ToolServiceResult toolServiceResult = context.toolService.executeInferenceAndToolsLoop(

context,

memoryId,

chatResponse,

parameters,

messages,

chatMemory,

invocationContext,

toolServiceContext,

isReturnTypeResult);

if (toolServiceResult.immediateToolReturn() && isReturnTypeResult) {

var result = Result.builder()

.content(null)

.tokenUsage(toolServiceResult.aggregateTokenUsage())

.sources(augmentationResult == null ? null : augmentationResult.contents())

.finishReason(TOOL_EXECUTION)

.toolExecutions(toolServiceResult.toolExecutions())

.intermediateResponses(toolServiceResult.intermediateResponses())

.finalResponse(toolServiceResult.finalResponse())

.build();

return fireEventAndReturn(invocationContext, result);

}

ChatResponse aggregateResponse = toolServiceResult.aggregateResponse();

var response = invokeOutputGuardrails(

context.guardrailService(),

method,

aggregateResponse,

chatExecutor,

commonGuardrailParam);

if (response != null) {

if (returnsImage && response instanceof ChatResponse cResponse) {

return fireEventAndReturn(invocationContext, parseImages(cResponse, returnType));

}

if (typeHasRawClass(returnType, response.getClass())) {

return fireEventAndReturn(invocationContext, response);

}

}

var parsedResponse = serviceOutputParser.parse((ChatResponse) response, returnType);

var actualResponse = (isReturnTypeResult)

? Result.builder()

.content(parsedResponse)

.tokenUsage(toolServiceResult.aggregateTokenUsage())

.sources(augmentationResult == null ? null : augmentationResult.contents())

.finishReason(toolServiceResult

.finalResponse()

.finishReason())

.toolExecutions(toolServiceResult.toolExecutions())

.intermediateResponses(toolServiceResult.intermediateResponses())

.finalResponse(toolServiceResult.finalResponse())

.build()

: parsedResponse;

return fireEventAndReturn(invocationContext, actualResponse);

}

private Object fireEventAndReturn(InvocationContext invocationContext, Object result) {

context.eventListenerRegistrar.fireEvent(AiServiceCompletedEvent.builder()

.invocationContext(invocationContext)

.result(result)

.build());

return result;

}

private static boolean isImage(Type returnType) {

Class<?> rawReturnType = getRawClass(returnType);

if (isImageType(rawReturnType)) {

return true;

}

if (Collection.class.isAssignableFrom(rawReturnType)) {

Class<?> genericParam = resolveFirstGenericParameterClass(returnType);

return genericParam != null && isImageType(genericParam);

}

return false;

}

private static Object parseImages(ChatResponse response, Type returnType) {

List<Image> images = response.aiMessage().images();

Class<?> rawReturnType = getRawClass(returnType);

if (isImage(rawReturnType)) {

if (rawReturnType == ImageContent.class) {

List<ImageContent> imageContents = toImageContents(images);

return imageContents.isEmpty() ? null : imageContents.get(0);

}

if (rawReturnType == Image.class) {

return images.isEmpty() ? null : images.get(0);

}

}

if (Collection.class.isAssignableFrom(rawReturnType)) {

Class<?> genericParam = resolveFirstGenericParameterClass(returnType);

if (genericParam == ImageContent.class) {

return toImageContents(images);

}

if (genericParam == Image.class) {

return images;

}

}

throw new UnsupportedOperationException("Unsupported return type " + rawReturnType);

}

private static List<ImageContent> toImageContents(List<Image> images) {

return images.stream().map(ImageContent::from).toList();

}

private boolean canAdaptTokenStreamTo(Type returnType) {

for (TokenStreamAdapter tokenStreamAdapter : tokenStreamAdapters) {

if (tokenStreamAdapter.canAdaptTokenStreamTo(returnType)) {

return true;

}

}

return false;

}

private Object adapt(TokenStream tokenStream, Type returnType) {

for (TokenStreamAdapter tokenStreamAdapter : tokenStreamAdapters) {

if (tokenStreamAdapter.canAdaptTokenStreamTo(returnType)) {

return tokenStreamAdapter.adapt(tokenStream);

}

}

throw new IllegalStateException("Can't find suitable TokenStreamAdapter");

}

private boolean supportsJsonSchema() {

return context.chatModel != null

&& context.chatModel.supportedCapabilities().contains(RESPONSE_FORMAT_JSON_SCHEMA);

}

private UserMessage appendOutputFormatInstructions(Type returnType, UserMessage userMessage) {

String outputFormatInstructions = serviceOutputParser.outputFormatInstructions(returnType);

if (isNullOrEmpty(outputFormatInstructions)) {

return userMessage;

}

List<Content> contents = new ArrayList<>(userMessage.contents());

boolean appended = false;

for (int i = contents.size() - 1; i >= 0; i--) {

if (contents.get(i) instanceof TextContent lastTextContent) {

String newText = lastTextContent.text() + outputFormatInstructions;

contents.set(i, TextContent.from(newText));

appended = true;

break;

}

}

if (!appended) {

contents.add(TextContent.from(outputFormatInstructions));

}

return userMessage.toBuilder().contents(contents).build();

}

private Future<Moderation> triggerModerationIfNeeded(Method method, List<ChatMessage> messages) {

if (method.isAnnotationPresent(Moderate.class)) {

ExecutorService executor = DefaultExecutorProvider.getDefaultExecutorService();

return executor.submit(() -> {

List<ChatMessage> messagesToModerate = removeToolMessages(messages);

return context.moderationModel

.moderate(messagesToModerate)

.content();

});

}

return null;

}

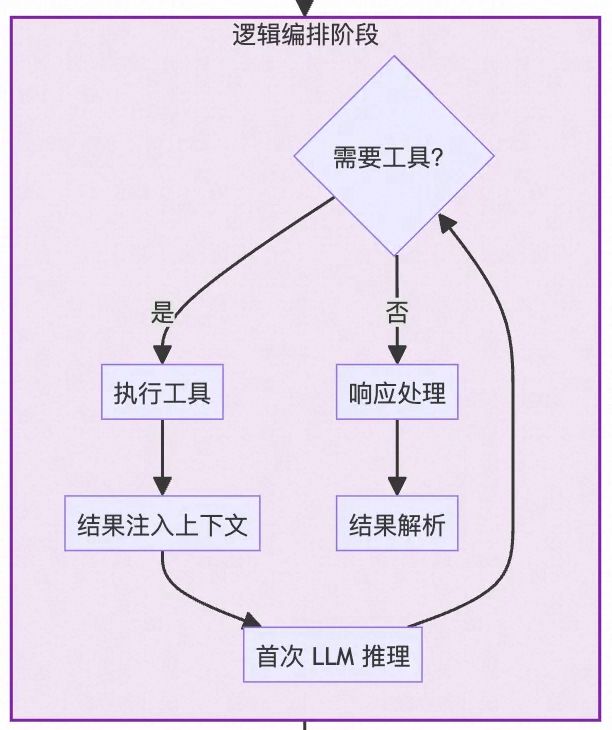

Logic orchestration phase

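An Agent's autonomous decision-making is driven by the model. On the first LLM call, the application context, the messages, and the tool metadata are sent to the LLM together. The LLM then decides whether an external tool needs to be called and, if so, produces a structured output that describes the tool call request. The orchestration logic therefore proceeds in three steps:

- Parse the LLM response to obtain the tool call requests;

- Filter those requests down to the ones that can be invoked via function calling, and build a tool execution request for each of them.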

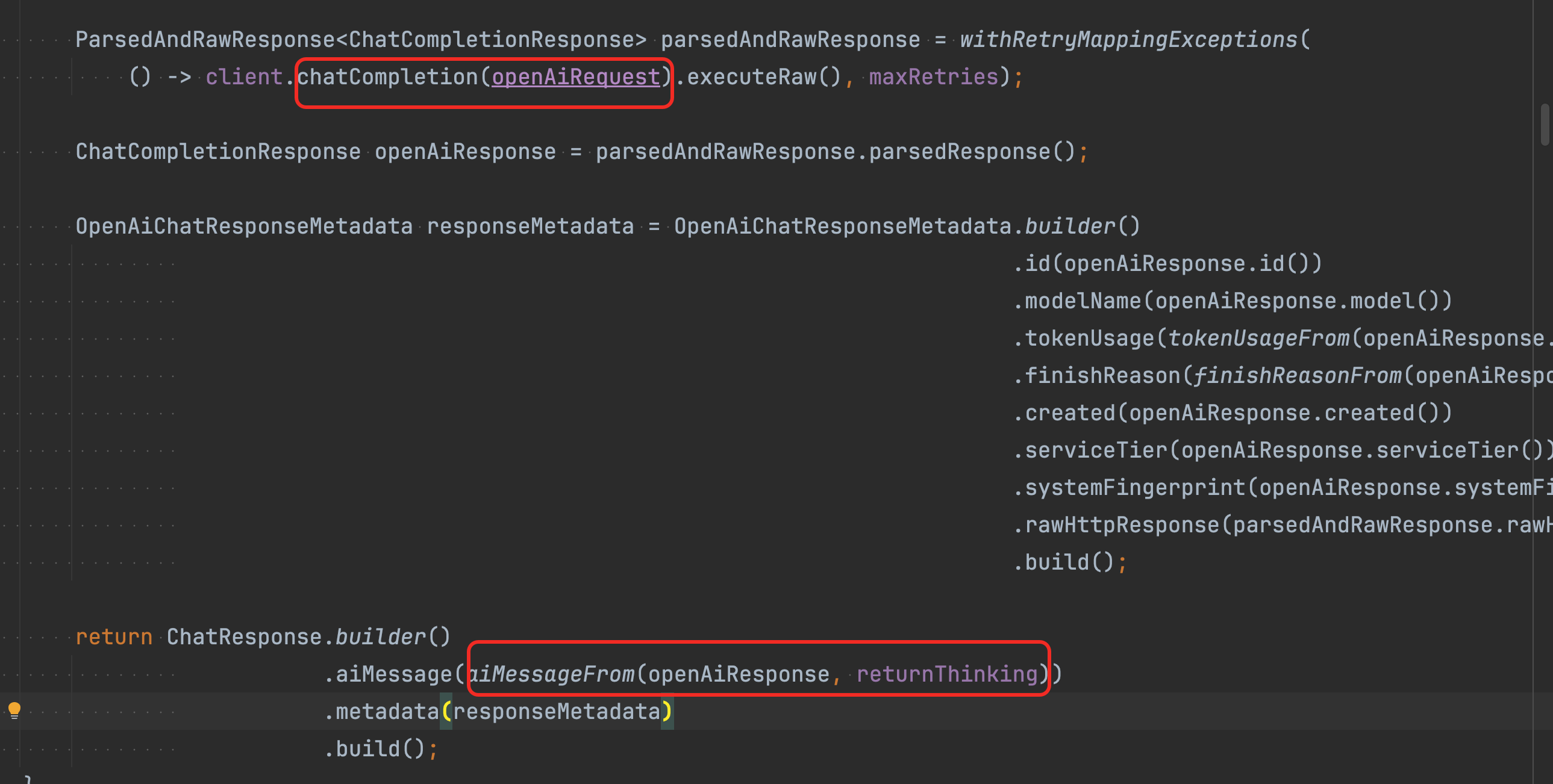

public static AiMessage aiMessageFrom(ChatCompletionResponse response, boolean returnThinking) {

AssistantMessage assistantMessage = response.choices().get(0).message();

String refusal = assistantMessage.refusal();

if (isNotNullOrBlank(refusal)) {

throw new ContentFilteredException(refusal);

}

String content = assistantMessage.content();

String reasoningContent = null;

if (returnThinking) {

reasoningContent = assistantMessage.reasoningContent();

}

List<ToolExecutionRequest> toolExecutionRequests =

getOrDefault(assistantMessage.toolCalls(), List.of()).stream()

.filter(toolCall -> toolCall.type() == FUNCTION)

.map(OpenAiUtils::toToolExecutionRequest)

.collect(toList());

// Backward compatibility with the legacy functionCall field

FunctionCall functionCall = assistantMessage.functionCall();

if (functionCall != null) {

ToolExecutionRequest toolExecutionRequest = ToolExecutionRequest.builder()

.name(functionCall.name())

.arguments(functionCall.arguments())

.build();

toolExecutionRequests.add(toolExecutionRequest);

}

return AiMessage.builder()

.text(isNullOrEmpty(content) ? null : content)

.thinking(isNullOrEmpty(reasoningContent) ? null : reasoningContent)

.toolExecutionRequests(toolExecutionRequests)

.build();

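}

- Run the tool invocation loop driven by the resulting chatResponse, feeding each tool execution result together with the context back into the model until it returns the final answer.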

public ToolServiceResult executeInferenceAndToolsLoop(

AiServiceContext context,

Object memoryId,

ChatResponse chatResponse,

ChatRequestParameters parameters,

List<ChatMessage> messages,

ChatMemory chatMemory,

InvocationContext invocationContext,

ToolServiceContext toolServiceContext,

boolean isReturnTypeResult) {

TokenUsage aggregateTokenUsage = chatResponse.metadata().tokenUsage();

List<ToolExecution> toolExecutions = new ArrayList<>();

List<ChatResponse> intermediateResponses = new ArrayList<>();

int sequentialToolsInvocationsLeft = maxSequentialToolsInvocations;

while (true) {

if (sequentialToolsInvocationsLeft-- == 0) {

throw runtime(

"Something is wrong, exceeded %s sequential tool invocations", maxSequentialToolsInvocations);

}

AiMessage aiMessage = chatResponse.aiMessage();

if (chatMemory != null) {

chatMemory.add(aiMessage);

} else {

messages = new ArrayList<>(messages);

messages.add(aiMessage);

}

if (!aiMessage.hasToolExecutionRequests()) {

break;

}

intermediateResponses.add(chatResponse);

Map<ToolExecutionRequest, ToolExecutionResult> toolResults =

execute(aiMessage.toolExecutionRequests(), toolServiceContext.toolExecutors(), invocationContext);

boolean immediateToolReturn = true;

for (Map.Entry<ToolExecutionRequest, ToolExecutionResult> entry : toolResults.entrySet()) {

ToolExecutionRequest request = entry.getKey();

ToolExecutionResult result = entry.getValue();

ToolExecutionResultMessage resultMessage = ToolExecutionResultMessage.builder()

.id(request.id())

.toolName(request.name())

.text(result.resultText())

.isError(result.isError())

.build();

ToolExecution toolExecution =

ToolExecution.builder().request(request).result(result).build();

toolExecutions.add(toolExecution);

fireToolExecutedEvent(invocationContext, request, toolExecution, context.eventListenerRegistrar);

if (chatMemory != null) {

chatMemory.add(resultMessage);

} else {

messages.add(resultMessage);

}

if (immediateToolReturn) {

if (toolServiceContext.immediateReturnTools().contains(request.name())) {

if (!isReturnTypeResult) {

throw illegalConfiguration(

"Tool '%s' with immediate return is not allowed on a AI service not returning Result.",

request.name());

}

} else {

immediateToolReturn = false;

}

}

}

if (immediateToolReturn) {

ChatResponse finalResponse = intermediateResponses.remove(intermediateResponses.size() - 1);

return ToolServiceResult.builder()

.intermediateResponses(intermediateResponses)

.finalResponse(finalResponse)

.toolExecutions(toolExecutions)

.aggregateTokenUsage(aggregateTokenUsage)

.immediateToolReturn(true)

.build();

}

if (chatMemory != null) {

messages = chatMemory.messages();

}

ChatRequest chatRequest = context.chatRequestTransformer.apply(

ChatRequest.builder()

.messages(messages)

.parameters(parameters)

.build(),

memoryId);

fireRequestIssuedEvent(chatRequest, invocationContext, context.eventListenerRegistrar);

chatResponse = context.chatModel.chat(chatRequest);

fireResponseReceivedEvent(chatRequest, chatResponse, invocationContext, context.eventListenerRegistrar);

aggregateTokenUsage =

TokenUsage.sum(aggregateTokenUsage, chatResponse.metadata().tokenUsage());

}

return ToolServiceResult.builder()

.intermediateResponses(intermediateResponses)

.finalResponse(chatResponse)

.toolExecutions(toolExecutions)

.aggregateTokenUsage(aggregateTokenUsage)

.build();

}
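To make the three steps easier to follow without the surrounding framework plumbing, here is a minimal sketch of the same control flow. It is not LangChain4j code: `ToolLoopSketch`, `ToolRequest`, `ModelReply`, `Model`, and the scripted model in `demo()` are hypothetical stand-ins invented for illustration, messages are plain strings, and memory, events, token accounting, and immediate-return tools are all left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.function.Function;

public class ToolLoopSketch {

    // Hypothetical stand-ins for ToolExecutionRequest and AiMessage.
    public record ToolRequest(String type, String name, String arguments) {}
    public record ModelReply(String text, List<ToolRequest> toolRequests) {}

    // Hypothetical model: receives the whole message history, returns one reply.
    public interface Model {
        ModelReply chat(List<String> messages);
    }

    static final int MAX_SEQUENTIAL_TOOL_INVOCATIONS = 10;

    public static String run(Model model, Map<String, Function<String, String>> tools, String question) {
        List<String> messages = new ArrayList<>();
        messages.add("user: " + question);
        ModelReply reply = model.chat(messages);
        int invocationsLeft = MAX_SEQUENTIAL_TOOL_INVOCATIONS;
        while (true) {
            // Guard against a model that keeps requesting tools forever.
            if (invocationsLeft-- == 0) {
                throw new IllegalStateException(
                        "Exceeded " + MAX_SEQUENTIAL_TOOL_INVOCATIONS + " sequential tool invocations");
            }
            messages.add("ai: " + reply.text());
            // Steps 1 and 2: read the tool requests and keep only function-type calls.
            List<ToolRequest> requests = reply.toolRequests().stream()
                    .filter(request -> "function".equals(request.type()))
                    .toList();
            if (requests.isEmpty()) {
                return reply.text(); // no tool needed: this is the final answer
            }
            // Step 3: execute each tool and append its result to the history.
            for (ToolRequest request : requests) {
                Function<String, String> tool = tools.get(request.name());
                String result = tool == null
                        ? "error: unknown tool " + request.name()
                        : tool.apply(request.arguments());
                messages.add("tool[" + request.name() + "]: " + result);
            }
            // Ask the model again, now with the tool results in context.
            reply = model.chat(messages);
        }
    }

    public static String demo() {
        // Scripted fake model: requests the "add" tool once, then answers from its result.
        Model scriptedModel = messages -> {
            String last = messages.get(messages.size() - 1);
            String prefix = "tool[add]: ";
            if (last.startsWith(prefix)) {
                return new ModelReply("The sum is " + last.substring(prefix.length()), List.of());
            }
            return new ModelReply(null, List.of(new ToolRequest("function", "add", "2,3")));
        };
        Map<String, Function<String, String>> tools = Map.of("add", arguments -> {
            String[] parts = arguments.split(",");
            return String.valueOf(Integer.parseInt(parts[0]) + Integer.parseInt(parts[1]));
        });
        return run(scriptedModel, tools, "What is 2 + 3?");
    }

    public static void main(String[] args) {
        System.out.println(demo()); // prints "The sum is 5"
    }
}
```

The sketch keeps the two properties that matter most in the real loop: the model, not the application code, decides when to stop calling tools, and an upper bound on consecutive invocations prevents an endless loop.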

Notes on Models, Messages, Tools, short-term memory, Streaming, and Structured Output are still being compiled.
