[AI Coding Agent Design Series 08] Mini CLI: From Pseudocode to a Minimal Runnable Skeleton

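This article presents the design skeleton of a mini AI coding CLI, based on the core ideas of "AI Coding Agent Design" published by the Alibaba Cloud Developer tech account. The design uses two input rules (`/` commands and `@path`) for basic interaction, provides three read-only tools (read_file, glob, grep) for working with files, and implements the tool-calling loop: the model proposes a tool call → the system executes it → the result is fed back → the model continues reasoning. A Python skeleton is given, including path safety checks, output…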


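Disclaimer: this article is a set of study notes with an engineering extension. Its core thread comes from "AI Coding Agent Design" (《AI coding 智能体设计》), published by the Alibaba Cloud Developer tech account. On that basis it offers a "mini AI coding CLI" skeleton for understanding the principles (not a production-grade implementation). Where anything differs, the original article and the official documentation take precedence. The link to the original is in the references at the end.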

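If you want to really understand why an AI coding agent "can get things done", the best way is not to read ten more concept articles but to build a "toy" yourself:

- Supports `/` commands (clear, compact, help, and so on)

- Supports `@path` (feeds a file to the model as context)

- Supports tool registration and the tool-calling loop

- Has minimal guardrails (permissions, timeouts, output clamping, observability)

This article gives you a minimal runnable skeleton (plug in your OpenAI-compatible endpoint and it runs).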

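01 | Define the input protocol first: two rules are enough

- Input that starts with `/` is treated as a command (not natural language)

- Input that contains `@path` is expanded before it is sent to the model, and the file content is appended to the context

We are not aiming for a perfect parser yet; the first goal is to get the loop running.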
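The two rules above can be sketched as a small routing function. This is only an illustration: `ParsedInput` and `parse_input` are hypothetical names introduced here, and the whitespace-based tokenization is an assumption that matches the "no perfect parsing yet" stance.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ParsedInput:
    kind: str                                        # "command" or "message"
    name: str = ""                                   # command name without the slash
    args: List[str] = field(default_factory=list)    # remaining command tokens
    text: str = ""                                   # raw text when kind == "message"
    paths: List[str] = field(default_factory=list)   # @path mentions, in order


def parse_input(line: str) -> ParsedInput:
    """Apply the two rules: a leading "/" is a command, "@path" marks file context."""
    line = line.strip()
    if line.startswith("/"):
        parts = line[1:].split()
        name = parts[0] if parts else ""
        return ParsedInput(kind="command", name=name, args=parts[1:])
    # Whitespace-delimited tokens that start with "@" and have something after it.
    paths = [tok[1:] for tok in line.split() if tok.startswith("@") and len(tok) > 1]
    return ParsedInput(kind="message", text=line, paths=paths)


print(parse_input("/clear"))
print(parse_input("explain @src/main.py and @README.md"))
```

Because only tokens that begin with `@` count, an email address such as `a@b.com` inside a sentence is not mistaken for a path.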

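02 | Minimal tool set: start with just 3 read-only tools

Starting read-only is strongly recommended (safe, controllable, high payoff):

- read_file(path): read a file (restricted to the project directory, with a size limit)

- glob(pattern): find files by pattern

- grep(pattern, path): search inside files (with a limit on output lines)

Write operations, shell execution, and the like can be added once the loop is stable.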
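The "restricted to the project directory" limit is easy to get subtly wrong: comparing path strings by prefix would accept a sibling directory whose name merely starts with the root's name. A minimal sketch of a component-wise check follows; `is_inside` and the example paths are hypothetical and exist only for illustration.

```python
from pathlib import Path


def is_inside(root: Path, candidate: str) -> bool:
    """Return True only if `candidate`, resolved against `root`, stays under `root`."""
    root = root.resolve()
    target = (root / candidate).resolve()   # collapses ".." and follows symlinks
    try:
        target.relative_to(root)            # compares whole path components
        return True
    except ValueError:
        return False


root = Path("/tmp/demo-project")
print(is_inside(root, "src/app.py"))               # a normal relative path
print(is_inside(root, "../demo-project-evil/x"))   # sibling that shares a string prefix
print(is_inside(root, "/etc/passwd"))              # absolute path replaces the root
```

Only the first call should be accepted. The same check is worth applying to glob results as well, since a pattern can contain `..`.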

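03 | The core: the tool-calling loop

The essence of the loop fits in one sentence:

The model proposes a tool call → the system executes it → the output is fed back → the model continues reasoning.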

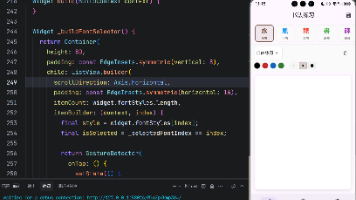
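To see the four steps in isolation, here is an offline sketch that runs without any API. Everything in it is an assumption made for illustration: `fake_model` is a scripted stand-in for a real model, `add` is a toy tool, and the message dictionaries are simplified rather than the exact format of any SDK.

```python
import json
from typing import Any, Callable, Dict, List


def fake_model(messages: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Scripted stand-in: ask for a tool once, then answer using its result."""
    tool_msgs = [m for m in messages if m["role"] == "tool"]
    if not tool_msgs:
        return {
            "role": "assistant",
            "content": None,
            "tool_calls": [
                {"id": "call_1", "name": "add", "arguments": json.dumps({"a": 2, "b": 3})}
            ],
        }
    return {"role": "assistant", "content": f"The sum is {tool_msgs[-1]['content']}."}


TOOLS: Dict[str, Callable[..., str]] = {"add": lambda a, b: str(a + b)}


def run_loop(user_text: str, max_steps: int = 8) -> str:
    messages: List[Dict[str, Any]] = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):                       # hard cap against runaway loops
        reply = fake_model(messages)                 # 1. the model proposes
        messages.append(reply)
        calls = reply.get("tool_calls")
        if not calls:
            return reply["content"]                  # no tool request: final answer
        for call in calls:
            args = json.loads(call["arguments"])
            output = TOOLS[call["name"]](**args)     # 2. the system executes
            messages.append(                         # 3. the output is fed back
                {"role": "tool", "tool_call_id": call["id"], "content": output}
            )
        # 4. next iteration: the model continues with the new context
    return "[loop] step limit reached"


print(run_loop("What is 2 + 3?"))  # prints "The sum is 5."
```

The step cap matters as much as the loop itself: with `max_steps=1` the function returns the limit message instead of looping forever.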

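The Python skeleton below illustrates this with OpenAI-compatible tool calling (you can swap in any compatible implementation).

Note: this is a "teaching-grade minimal skeleton" that omits many engineering details; production use needs stricter permissions and auditing.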

```python
import json
import os
import re
from pathlib import Path
from typing import Any, Dict, List, Tuple

# Any OpenAI-compatible SDK can be swapped in here.
try:
    from openai import OpenAI
except ImportError:
    OpenAI = None  # lets the skeleton be read and imported without the SDK

PROJECT_ROOT = Path(os.getcwd()).resolve()
MAX_FILE_BYTES = 64 * 1024  # 64 KB, for teaching purposes
MAX_TOOL_OUTPUT_CHARS = 4000


def clamp_text(s: str, limit: int = MAX_TOOL_OUTPUT_CHARS) -> str:
    """Truncate tool output so it cannot flood the context."""
    if len(s) <= limit:
        return s
    return s[:limit] + "\n...[TRUNCATED]..."


def safe_resolve(path_str: str) -> Path:
    """Resolve a path and reject anything outside PROJECT_ROOT."""
    p = (PROJECT_ROOT / path_str).resolve()
    try:
        # Compare path components; a plain string-prefix check would
        # also accept a sibling directory such as "<root>-other".
        p.relative_to(PROJECT_ROOT)
    except ValueError:
        raise ValueError("path out of project root") from None
    return p


def tool_read_file(path: str) -> str:
    p = safe_resolve(path)
    if not p.exists() or not p.is_file():
        return f"[read_file] not found: {path}"
    size = p.stat().st_size
    if size > MAX_FILE_BYTES:
        return f"[read_file] file too large ({size} bytes): {path}"
    return p.read_text(encoding="utf-8", errors="replace")


def tool_glob(pattern: str) -> str:
    # Simplification: only relative patterns are supported.
    matches: List[str] = []
    for p in PROJECT_ROOT.glob(pattern):
        try:
            matches.append(str(p.resolve().relative_to(PROJECT_ROOT)))
        except ValueError:
            continue  # e.g. a "../" pattern that escaped the project root
    matches.sort()
    return "\n".join(matches[:200]) or "[glob] no matches"


def tool_grep(pattern: str, path: str = ".") -> str:
    base = safe_resolve(path)
    if base.is_file():
        files = [base]
    else:
        files = [p for p in base.rglob("*") if p.is_file()]

    rx = re.compile(pattern)
    out: List[str] = []
    for f in files:
        try:
            text = f.read_text(encoding="utf-8", errors="replace")
        except OSError:
            continue  # unreadable file: skip it
        for i, line in enumerate(text.splitlines(), 1):
            if rx.search(line):
                rel = str(f.relative_to(PROJECT_ROOT))
                out.append(f"{rel}:{i}:{line}")
                if len(out) >= 200:
                    return "\n".join(out) + "\n...[TRUNCATED]..."
    return "\n".join(out) or "[grep] no matches"


TOOLS = {
    "read_file": tool_read_file,
    "glob": tool_glob,
    "grep": tool_grep,
}


def expand_at_paths(text: str) -> Tuple[str, List[str]]:
    # Recognize @path (minimal implementation: whitespace-delimited tokens).
    collected = [t[1:] for t in text.split() if t.startswith("@") and len(t) > 1]
    if not collected:
        return text, []

    blocks = []
    for p in collected:
        try:
            content = tool_read_file(p)
        except (ValueError, OSError) as e:
            # A bad @path must not crash the CLI; surface the reason instead.
            content = f"[read_file] rejected: {e}"
        blocks.append(f"\n\n[CONTEXT:{p}]\n{content}\n")
    expanded = text + "\n" + "\n".join(blocks)
    return expanded, collected


def build_tool_schemas() -> List[Dict[str, Any]]:
    # OpenAI tool schema (illustrative)
    return [
        {
            "type": "function",
            "function": {
                "name": "read_file",
                "description": "Read a text file from project root (read-only).",
                "parameters": {
                    "type": "object",
                    "properties": {"path": {"type": "string"}},
                    "required": ["path"],
                },
            },
        },
        {
            "type": "function",
            "function": {
                "name": "glob",
                "description": "List files by glob pattern under project root (read-only).",
                "parameters": {
                    "type": "object",
                    "properties": {"pattern": {"type": "string"}},
                    "required": ["pattern"],
                },
            },
        },
        {
            "type": "function",
            "function": {
                "name": "grep",
                "description": "Search pattern in files under a path (read-only).",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "pattern": {"type": "string"},
                        "path": {"type": "string"},
                    },
                    "required": ["pattern"],
                },
            },
        },
    ]


def run_tool_call(name: str, args: Dict[str, Any]) -> str:
    if name not in TOOLS:
        return f"[tool] unknown: {name}"
    try:
        if name == "read_file":
            out = TOOLS[name](args["path"])
        elif name == "glob":
            out = TOOLS[name](args["pattern"])
        elif name == "grep":
            out = TOOLS[name](args["pattern"], args.get("path", "."))
        else:
            out = "[tool] not implemented"
        return clamp_text(out)
    except Exception as e:
        # Return the error as text so the model can see it and adjust.
        return f"[tool] error: {e}"


def chat_loop(api_key: str, base_url: str, model: str) -> None:
    if OpenAI is None:
        raise RuntimeError("Please install openai python sdk: pip install openai")
    client = OpenAI(api_key=api_key, base_url=base_url)
    tools = build_tool_schemas()
    messages: List[Any] = []
    print("Mini Agent CLI. Type /clear to reset, /exit to quit. Use @path to include files.")

    while True:
        try:
            user_in = input("\n> ").strip()
        except (EOFError, KeyboardInterrupt):
            break
        if not user_in:
            continue
        if user_in == "/exit":
            break
        if user_in == "/clear":
            messages = []
            print("[state] cleared")
            continue
        if user_in.startswith("/"):
            # Rule 1: a leading "/" is always a command, never sent to the model.
            print(f"[command] unknown: {user_in}")
            continue

        expanded, at_paths = expand_at_paths(user_in)
        if at_paths:
            print(f"[context] included: {', '.join(at_paths)}")
        messages.append({"role": "user", "content": expanded})

        # tool-calling loop
        for _ in range(8):  # cap the rounds to avoid an endless loop
            resp = client.chat.completions.create(
                model=model,
                messages=messages,
                tools=tools,
            )
            msg = resp.choices[0].message

            # Did the model ask for tools?
            if getattr(msg, "tool_calls", None):
                messages.append(msg)
                for tc in msg.tool_calls:
                    try:
                        args = json.loads(tc.function.arguments or "{}")
                        out = run_tool_call(tc.function.name, args)
                    except json.JSONDecodeError as e:
                        out = f"[tool] invalid JSON arguments: {e}"
                    messages.append(
                        {"role": "tool", "tool_call_id": tc.id, "content": out}
                    )
                continue

            # Final answer
            content = msg.content or ""
            print("\n" + content)
            messages.append({"role": "assistant", "content": content})
            break
        else:
            print("[loop] stopped after 8 tool rounds without a final answer")


if __name__ == "__main__":
    # Fill in your OpenAI-compatible settings via environment variables.
    api_key = os.getenv("OPENAI_API_KEY", "")
    base_url = os.getenv("OPENAI_BASE_URL", "https://api.openai.com/v1")
    model = os.getenv("OPENAI_MODEL", "gpt-4.1-mini")
    if api_key:
        chat_loop(api_key, base_url, model)
    else:
        print("This is a skeleton. Set OPENAI_API_KEY/OPENAI_BASE_URL/OPENAI_MODEL to run chat_loop().")
```

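04 | Making it "more like a real product": four engineering points you will end up adding

- Output clamping strategy: do not feed very long logs back verbatim; truncate or summarize first

- Permissions and allowlists: read-only by default; write operations need a confirmation step or an allowlisted path

- Observability: record the name, args, elapsed time, output length, and error reason of every tool call

- Failure recovery: a retry cap and fallback paths (for example, falling back from grep to glob + read_file)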
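The first three points can share one wrapper around every tool call. The sketch below is one possible shape, not a prescribed design: `traced_call`, `READ_ONLY_TOOLS`, and the in-memory `TRACE` list are hypothetical names, and a real CLI would more likely write the records to a log file.

```python
import json
import time
from typing import Any, Callable, Dict, List

READ_ONLY_TOOLS = {"read_file", "glob", "grep"}   # assumed allowlist for this sketch
TRACE: List[Dict[str, Any]] = []                  # in-memory log of tool calls


def traced_call(name: str, fn: Callable[..., str], args: Dict[str, Any],
                limit: int = 4000) -> str:
    """Run one tool call with an allowlist check, an output clamp, and a trace record."""
    record: Dict[str, Any] = {"name": name, "args": args, "error": None}
    start = time.perf_counter()
    try:
        if name not in READ_ONLY_TOOLS:
            raise PermissionError(f"tool not allowed: {name}")
        out = fn(**args)
    except Exception as exc:
        # The error text is returned so the model can react to it.
        record["error"] = f"{type(exc).__name__}: {exc}"
        out = f"[tool] error: {exc}"
    if len(out) > limit:
        out = out[:limit] + "\n...[TRUNCATED]..."
    record["elapsed_ms"] = round((time.perf_counter() - start) * 1000, 2)
    record["output_chars"] = len(out)
    TRACE.append(record)
    return out


print(traced_call("glob", lambda pattern: "a.py\nb.py", {"pattern": "*.py"}))
print(traced_call("rm", lambda path: "deleted", {"path": "x"}))   # blocked by the allowlist
print(json.dumps(TRACE, indent=2))
```

Keeping the record even when the call fails or is denied is deliberate: rejected and failed calls are usually the ones worth inspecting later.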

Once these four are in place, you can largely reproduce the "core experience" of most AI coding tools.
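For the failure-recovery point (a retry cap plus a fallback path), a minimal sketch could look like this. `with_fallback` is a hypothetical helper, and both strategies are simulated: `broken_grep` always fails, and `list_then_scan` uses an in-memory dictionary as a stand-in for glob + read_file.

```python
from typing import Callable, List, Tuple


def with_fallback(steps: List[Tuple[str, Callable[[], str]]], retries: int = 2) -> str:
    """Try each strategy in order; retry each up to `retries` times before moving on."""
    errors: List[str] = []
    for label, step in steps:
        for attempt in range(1, retries + 1):
            try:
                return step()
            except Exception as exc:
                errors.append(f"{label}#{attempt}: {exc}")
    return "[recover] all strategies failed\n" + "\n".join(errors)


def broken_grep() -> str:
    raise TimeoutError("grep timed out")   # simulated failure


def list_then_scan() -> str:
    # Stand-in for glob + read_file: list the files, then scan each one.
    files = {"a.py": "import os\n", "b.py": "print('todo')\n"}
    hits = [name for name, text in files.items() if "todo" in text]
    return "\n".join(hits) or "[fallback] no matches"


print(with_fallback([("grep", broken_grep), ("glob+read_file", list_then_scan)]))  # prints "b.py"
```

When every strategy fails, the collected error list is returned as text, so the model (or the user) can see what was tried.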

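05 | Series navigation

- Series 01: From Chat to Agent: 4 key parts

- Series 02: The command system: from prompt templates to extensible subcommands

- Series 03: @path context: how to supply material without overflowing the context

- Series 04: MCP and the tool loop: registration, invocation, feeding results back, and failure recovery

- Series 05: Context governance: clearing, compacting, summarizing, and budget control

- Series 06: SubAgent: context isolation and modular collaboration

- Series 07: Spec-driven work: a Spec workflow that makes delivery reproducible

- Series 08 (this article): Mini CLI: from pseudocode to a minimal runnable skeleton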

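References and acknowledgments

- Original article from the Alibaba Cloud Developer tech account: "AI Coding Agent Design" (《AI coding 智能体设计》)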
