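Storage: Solving the Data Persistence Problem

This article introduces the persistent storage management mechanisms in Kubernetes, centered on three core objects: PersistentVolume (PV), PersistentVolumeClaim (PVC), and StorageClass. A PV represents a persistent storage device, a PVC is the request a Pod makes for storage resources, and a StorageClass categorizes storage systems and coordinates the binding between PVCs and PVs. The article walks through creating a local PV and PVC, including the access mode, capacity, and other parameters, and shows how to …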

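I. PersistentVolume Objects

1. PersistentVolume

- Kubernetes extends the Volume concept into the PersistentVolume object, which represents a persistent storage device while hiding the underlying storage implementation; all we need to know is that it keeps data safely and reliably.

- A PV is in practice a storage device or file system, such as Ceph, GlusterFS, MFS, NFS, or a local disk.

- A PV is a cluster-level system resource, an object on the same level as a Node; a Pod has no right to manage it, only to use it.

Note: with so many kinds of storage devices, managing everything through the PV object alone would be a stretch and would violate the "single responsibility" principle, so Kubernetes adds two more objects: PersistentVolumeClaim and StorageClass.

2. PersistentVolumeClaim

- Used to request storage resources from Kubernetes.

- A PVC is the object a Pod uses; it acts as the Pod's proxy and requests a PV from the system on the Pod's behalf.

- Once the request succeeds, Kubernetes associates the PV with the PVC; this action is called "binding" (Bound).

- If a matching PV is found, the PVC status is Bound; if none is found, it stays Pending.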

[root@localhost 10-ingress]# kubectl api-resources | grep pv

persistentvolumeclaims pvc v1 true PersistentVolumeClaim

persistentvolumes pv v1 false PersistentVolume

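Note: a cluster can hold a large number of storage resources, and having a PVC walk through all of them to find a suitable PV would be cumbersome, which is where StorageClass comes in.

3. StorageClass

- It abstracts a particular type of storage system (such as Ceph or NFS), groups PVs into classes, and acts as a "coordinator" between PVCs and PVs, helping a PVC find a suitable PV.

- It simplifies the process of mounting a "virtual disk" into a Pod and keeps the PV implementation details out of the Pod's view.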

[root@localhost 10-ingress]# kubectl api-resources | grep -i StorageClass

storageclasses sc storage.k8s.io/v1 false StorageClass

II. Creating PV Storage

1. Creating the PV YAML manifest

[root@localhost 12-storage]# cat host-10m-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: host-10m-pv
spec:
  storageClassName: host-test   # storage class
  accessModes:                  # access modes
    - ReadWriteOnce             # read-write, mountable only by Pods on a single node
  capacity:
    storage: 10Mi               # capacity
  hostPath:                     # a directory on the host node
    path: /data/host-10m-pv/

[root@localhost 12-storage]# kubectl apply -f host-10m-pv.yaml

persistentvolume/host-10m-pv created

[root@localhost 12-storage]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS VOLUMEATTRIBUTESCLASS REASON AGE

host-10m-pv 10Mi RWO Retain Available host-test <unset> 20s

RECLAIM POLICY: Retain means that after the PV is deleted, the directory backing the PV is not deleted.

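accessModes defines how the storage device may be accessed:

- ReadWriteOnce: the volume is readable and writable, but can only be mounted by Pods on a single node; typical for node-local storage.

- ReadOnlyMany: the volume is read-only and can be mounted by Pods on any number of nodes.

- ReadWriteMany: the volume is readable and writable and can be mounted by Pods on any number of nodes; typical for network storage.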
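The ACCESS MODES column in the `kubectl get pv` output uses short codes for these modes. A minimal sketch of that mapping, meant only as a reading aid for the tables in this article; the helper name `abbreviate` is hypothetical:

```python
# Short codes kubectl prints in the ACCESS MODES column.
ACCESS_MODE_ABBREVIATIONS = {
    "ReadWriteOnce": "RWO",
    "ReadOnlyMany": "ROX",
    "ReadWriteMany": "RWX",
}


def abbreviate(access_modes):
    """Return the comma-joined short codes for a list of access modes."""
    return ",".join(ACCESS_MODE_ABBREVIATIONS[mode] for mode in access_modes)


print(abbreviate(["ReadWriteOnce"]))   # RWO
```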

2. Creating the PVC YAML manifest

[root@localhost 12-storage]# cat host-10m-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: host-10m-pvc
spec:
  storageClassName: host-test   # look for a suitable PV in the host-test class
  accessModes:                  # access modes
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Mi             # requested size; matches a PV with capacity >= 10Mi

[root@localhost 12-storage]# kubectl apply -f host-10m-pvc.yaml

persistentvolumeclaim/host-10m-pvc created

[root@localhost 12-storage]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

host-10m-pvc Bound host-10m-pv 10Mi RWO host-test <unset> 8s

Note: once bound, this PV cannot be bound to any other PVC.
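The matching behind Bound and Pending can be sketched roughly as follows. This is a simplified model, not the actual Kubernetes controller logic: it checks only the storage class, access modes, and capacity used in the manifests above, and ignores selectors, volume mode, and node affinity. The dictionary layout and function names are hypothetical.

```python
# Binary suffixes used by the capacities in this article (10Mi, 5Gi).
UNITS = {"Ki": 1024, "Mi": 1024**2, "Gi": 1024**3}


def parse_quantity(text):
    """Convert a quantity such as '10Mi' into bytes (binary suffixes only)."""
    number, suffix = text[:-2], text[-2:]
    return int(number) * UNITS[suffix]


def pv_satisfies(pv, pvc):
    """Simplified check of whether an unbound PV can satisfy a PVC."""
    return (
        pv["claim"] is None                                     # PV not bound yet
        and pv["storageClassName"] == pvc["storageClassName"]   # same class
        and set(pvc["accessModes"]) <= set(pv["accessModes"])   # modes offered
        and parse_quantity(pv["capacity"]) >= parse_quantity(pvc["request"])
    )


def bind(pvs, pvc):
    """Bind the PVC to the first suitable PV; return 'Bound' or 'Pending'."""
    for pv in pvs:
        if pv_satisfies(pv, pvc):
            pv["claim"] = pvc["name"]
            return "Bound"
    return "Pending"


pvs = [{"name": "host-10m-pv", "storageClassName": "host-test",
        "accessModes": ["ReadWriteOnce"], "capacity": "10Mi", "claim": None}]
first = {"name": "host-10m-pvc", "storageClassName": "host-test",
         "accessModes": ["ReadWriteOnce"], "request": "10Mi"}
second = dict(first, name="another-pvc")   # hypothetical second claim

print(bind(pvs, first))    # Bound
print(bind(pvs, second))   # Pending
```

The second claim stays Pending because the only PV in the class is already taken, which is the one-to-one relationship the note describes.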

3. Mounting the PersistentVolume

[root@localhost 12-storage]# cat nginx-dep.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx-dep
  name: nginx-dep
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-dep
  template:
    metadata:
      labels:
        app: nginx-dep
    spec:
      volumes:
        - name: host-pvc-vol
          persistentVolumeClaim:
            claimName: host-10m-pvc
      containers:
        - image: registry.cn-beijing.aliyuncs.com/xxhf/nginx:1.22.1
          name: nginx
          imagePullPolicy: IfNotPresent
          volumeMounts:
            - name: host-pvc-vol   # volume name
              mountPath: /tmp      # mount point

[root@localhost 12-storage]# kubectl apply -f nginx-dep.yaml

deployment.apps/nginx-dep configured

[root@localhost 12-storage]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-dep-5c4fc7b8dc-c9btx 1/1 Running 0 19s 172.17.171.89 worker <none> <none>

File write test:

# Check on the worker node

[root@worker local]# cd /data/

[root@worker data]# ll

总用量 0

drwxr-xr-x 2 root root 6 11月 22 11:06 host-10m-pv

# Write a file

[root@worker data]# cd host-10m-pv/

[root@worker host-10m-pv]# touch file-in-worker

# Check inside the container (the check also works in the other direction)

[root@localhost 12-storage]# kubectl exec -it nginx-dep-5c4fc7b8dc-c9btx -- sh

# cd /tmp

# pwd

/tmp

# ls

file-in-worker

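Drawback: hostPath data is not synchronized between nodes automatically; switching to network storage solves this. After scaling to two replicas, the new Pod lands on worker2 and finds an empty directory there: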

[root@localhost 12-storage]# kubectl scale deployment nginx-dep --replicas 2

deployment.apps/nginx-dep scaled

[root@localhost 12-storage]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-dep-5c4fc7b8dc-c9btx 1/1 Running 0 11m 172.17.171.89 worker <none> <none>

nginx-dep-5c4fc7b8dc-hjh6n 1/1 Running 0 7s 172.17.189.79 worker2 <none> <none>

# Check on the worker2 node

[root@worker2 host-10m-pv]# pwd

/data/host-10m-pv

[root@worker2 host-10m-pv]# ls

[root@worker2 host-10m-pv]#

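4. Using Network Storage

Note: for a volume to be mountable by Pods anywhere, the storage has to become network storage; then a Pod can reach the storage device over the network from wherever it runs, as long as it knows the IP address or domain name.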
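The difference between the two kinds of storage can be illustrated with a toy model. This is only a sketch of the behavior observed above, not of how Kubernetes implements volumes; the class names are hypothetical and the file name `nfs-file` is illustrative.

```python
class HostPathVolume:
    """Each node has its own directory, so files never leave the node."""

    def __init__(self):
        self.files_by_node = {}

    def write(self, node, filename):
        self.files_by_node.setdefault(node, set()).add(filename)

    def list(self, node):
        return sorted(self.files_by_node.get(node, set()))


class NetworkVolume:
    """One shared export; every node sees the same files."""

    def __init__(self):
        self.files = set()

    def write(self, node, filename):
        self.files.add(filename)

    def list(self, node):
        return sorted(self.files)


host = HostPathVolume()
host.write("worker", "file-in-worker")
print(host.list("worker2"))   # []

nfs = NetworkVolume()
nfs.write("worker", "nfs-file")
print(nfs.list("worker2"))    # ['nfs-file']
```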

(1) Installing the NFS server

[root@worker2 ~]# dnf install -y rpcbind nfs-utils # install on every worker node

[root@worker2 ~]# mkdir /data/nfs

[root@worker2 ~]# echo "/data/nfs 192.168.5.0/24(rw,sync,no_subtree_check,no_root_squash,insecure)" >> /etc/exports

[root@worker2 ~]# systemctl enable --now rpcbind

[root@worker2 ~]# systemctl enable --now nfs-server

[root@worker ~]# dnf -y install rpcbind nfs-utils

[root@worker ~]# showmount -e 192.168.5.130

Export list for 192.168.5.130:

/data/nfs 192.168.5.0/24

[root@worker2 ~]# mount -t nfs 192.168.5.130:/data/nfs /data/nfs

(2) Using an NFS volume

[root@localhost nfs-static]# cat nfs-static-pv.yml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-5g-pv
spec:
  storageClassName: nfs
  accessModes:
    - ReadWriteMany
  capacity:
    storage: 5Gi
  nfs:
    path: /data/nfs
    server: 192.168.5.130   # nfs server address

[root@localhost nfs-static]# kubectl apply -f nfs-static-pv.yml

persistentvolume/nfs-5g-pv created

[root@localhost nfs-static]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS VOLUMEATTRIBUTESCLASS REASON AGE

nfs-5g-pv 5Gi RWX Retain Available nfs <unset> 4s

(3) Binding the PV

[root@localhost nfs-static]# cat nfs-static-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-static-pvc
spec:
  storageClassName: nfs
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 5Gi

[root@localhost nfs-static]# kubectl apply -f nfs-static-pvc.yaml

persistentvolumeclaim/nfs-static-pvc created

[root@localhost nfs-static]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

nfs-static-pvc Bound nfs-5g-pv 5Gi RWX nfs <unset> 7s

(4) Mounting the PVC into a Pod

[root@localhost nfs-static]# cat nginx-dep.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx-dep
  name: nginx-dep
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-dep
  template:
    metadata:
      labels:
        app: nginx-dep
    spec:
      volumes:
        - name: nfs-pvc-vol
          persistentVolumeClaim:
            claimName: nfs-static-pvc
      containers:
        - image: registry.cn-beijing.aliyuncs.com/xxhf/nginx:1.22.1
          name: nginx
          volumeMounts:
            - name: nfs-pvc-vol
              mountPath: /tmp

[root@localhost nfs-static]# kubectl apply -f nginx-dep.yaml

deployment.apps/nginx-dep configured

[root@localhost nfs-static]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-dep-6866f4c5f4-hfglf 1/1 Running 0 8s

(5) File write test

[root@worker nfs]# pwd

/data/nfs

[root@worker nfs]# touch nfs-file

[root@localhost nfs-static]# kubectl exec -it nginx-dep-6866f4c5f4-hfglf -- sh

# cd /tmp

# ls

nfs-file

# Scale out to multiple replicas

[root@localhost nfs-static]# kubectl scale deployment nginx-dep --replicas 4

# This time, check a Pod on the worker2 node

[root@localhost nfs-static]# kubectl exec -it nginx-dep-6866f4c5f4-qggl4 -- sh

# cd /tmp

# ls

nfs-file

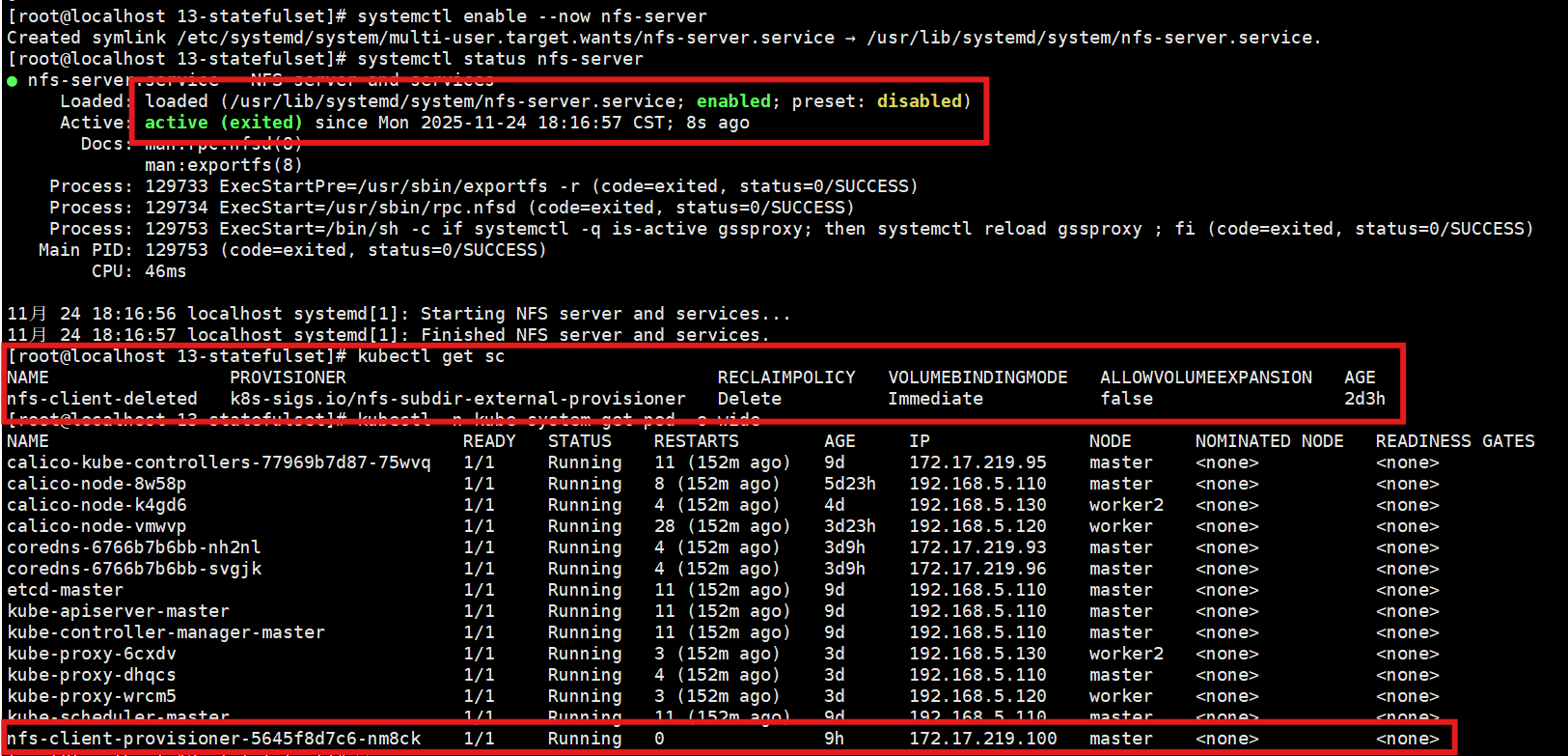

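5. Deploying the NFS Provisioner

- Problem: PVs still have to be managed by hand. A system administrator must maintain the storage devices manually and create PVs one by one as development needs arise; PV sizes are also hard to get right, so it is easy to end up short on space or wasting it.

- Solution: dynamically provisioned volumes.

- A StorageClass can be tied to a Provisioner, an application that manages storage and creates PVs automatically, replacing the administrator's manual work. The project used here can be found on GitHub.

(1) Deploying the Provisioner

Deploy the RBAC permissions: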

[root@localhost nfs-provisioner]# cat 01-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: kube-system
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: kube-system
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: kube-system
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: kube-system
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

[root@localhost nfs-provisioner]# kubectl apply -f 01-rbac.yaml

Deploy the Provisioner:

[root@localhost nfs-provisioner]# cat 02-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: kube-system
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-beijing.aliyuncs.com/xxhf/nfs-subdir-external-provisioner:v4.0.2
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: k8s-sigs.io/nfs-subdir-external-provisioner
            - name: NFS_SERVER
              value: 192.168.5.120   # nfs server address
            - name: NFS_PATH
              value: /data/nfs       # nfs share directory
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.5.120
            path: /data/nfs

[root@localhost nfs-provisioner]# kubectl apply -f 02-deployment.yaml

(2) Using NFS dynamic volumes

[root@localhost nfs-provisioner]# cat nfs-client-deleted-sc.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client-deleted
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
parameters:
  onDelete: "delete"

[root@localhost nfs-provisioner]# kubectl apply -f nfs-client-deleted-sc.yaml

storageclass.storage.k8s.io/nfs-client-deleted created

[root@localhost nfs-provisioner]# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

nfs-client-deleted k8s-sigs.io/nfs-subdir-external-provisioner Delete Immediate false 10s

- onDelete: "retain" keeps the allocated storage for now, to be removed by hand later

- onDelete: "delete" removes the allocated storage together with the PVC
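The effect of the two settings on the NFS share can be sketched with a local directory standing in for the export. This is a simulation under assumptions, not the provisioner's code: the subdirectory naming `<namespace>-<pvcName>-<pvName>` follows the project's README as I understand it, and the function names and the PVC/PV names are hypothetical.

```python
import os
import shutil
import tempfile


def provision(share_root, namespace, pvc_name, pv_name):
    """Create the per-volume subdirectory on the share and return its path."""
    path = os.path.join(share_root, f"{namespace}-{pvc_name}-{pv_name}")
    os.makedirs(path)
    return path


def release(path, on_delete):
    """Apply the StorageClass onDelete setting when the PVC is removed."""
    if on_delete == "delete":
        shutil.rmtree(path)        # data is removed together with the PVC
    elif on_delete == "retain":
        pass                       # directory stays until removed by hand
    else:
        raise ValueError(f"unsupported onDelete value: {on_delete}")


share = tempfile.mkdtemp()         # stands in for /data/nfs
kept = provision(share, "default", "pvc-a", "pv-a")
gone = provision(share, "default", "pvc-b", "pv-b")
release(kept, "retain")
release(gone, "delete")
print(sorted(os.listdir(share)))   # ['default-pvc-a-pv-a']
```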

[root@localhost nfs-provisioner]# cat nfs-dynamic-pvc.yaml

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-dynamic-pvc
spec:
  storageClassName: nfs-client-deleted
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 10Mi

[root@localhost nfs-provisioner]# kubectl apply -f nfs-dynamic-pvc.yaml

persistentvolumeclaim/nfs-dynamic-pvc created

[root@localhost nfs-provisioner]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS VOLUMEATTRIBUTESCLASS AGE

host-10m-pvc Bound host-10m-pv 10Mi RWO host-test <unset> 4h7m

nfs-dynamic-pvc Bound pvc-8fdc6b55-e85f-4c24-8a2d-6a4ed2e2f1fc 10Mi RWX nfs-client-deleted <unset> 2s

nfs-static-pvc Bound nfs-5g-pv 5Gi RWX nfs <unset> 3h18m

[root@localhost nfs-provisioner]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS VOLUMEATTRIBUTESCLASS REASON AGE

host-10m-pv 10Mi RWO Retain Bound default/host-10m-pvc host-test <unset> 4h13m

nfs-5g-pv 5Gi RWX Retain Bound default/nfs-static-pvc nfs <unset> 3h21m

pvc-8fdc6b55-e85f-4c24-8a2d-6a4ed2e2f1fc 10Mi RWX Delete Bound default/nfs-dynamic-pvc nfs-client-deleted <unset> 9s

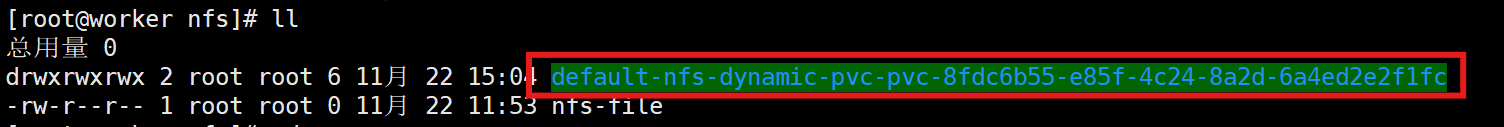

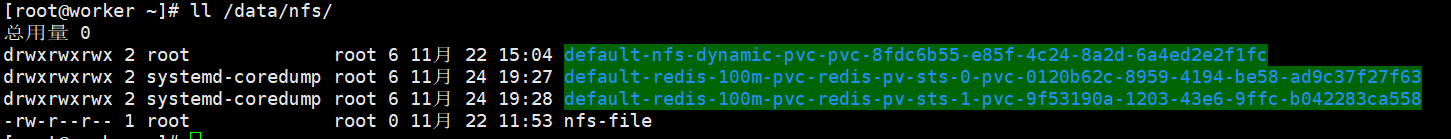

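(3) Checking the worker node

III. StatefulSet

1. Stateful applications

- Their runtime state matters and must not be lost.

- For databases such as Redis and MySQL, the "state" is the data produced in memory or on disk, which is the core value of the application.

- Instances may depend on each other, for example master/slave or active/passive, and must start in a given order for the application to run correctly.

- External clients may also need a fixed network identity to reach a particular instance.

2. Application characteristics

Stateful applications:

- Dependencies between instances

- Startup order

- Network identity

Stateless applications:

- Instances are independent of each other

- Startup order is not fixed

- Pod names, IP addresses, and domain names are all random

Note: Kubernetes therefore builds on Deployment to define a new API object, StatefulSet, dedicated to managing stateful applications.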

[root@localhost ~]# kubectl api-resources | grep StatefulSet

statefulsets sts apps/v1 true StatefulSet

3. StatefulSet YAML manifest

Note: a StatefulSet spec has three required fields that must be prepared before the stateful application is created:

[root@localhost ~]# kubectl explain StatefulSet.spec | grep required

selector <LabelSelector> -required-

serviceName <string> -required-

template <PodTemplateSpec> -required-

(1) Creating the Service

[root@localhost 13-statefulset]# cat redis-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: redis-svc
spec:
  selector:
    app: redis-sts
  ports:
    - port: 6379
      protocol: TCP
      targetPort: 6379

[root@localhost 13-statefulset]# kubectl apply -f redis-svc.yaml

service/redis-svc created

(2) Creating the StatefulSet

[root@localhost 13-statefulset]# cat redis-sts.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: redis-sts
spec:
  serviceName: redis-svc   # Service name; must match the Service created earlier
  replicas: 2
  selector:                # label selector
    matchLabels:
      app: redis-sts
  template:                # Pod template
    metadata:
      labels:
        app: redis-sts
    spec:
      containers:
        - image: registry.cn-beijing.aliyuncs.com/xxhf/redis:alpine
          name: redis
          ports:
            - containerPort: 6379

[root@localhost 13-statefulset]# kubectl apply -f redis-sts.yaml

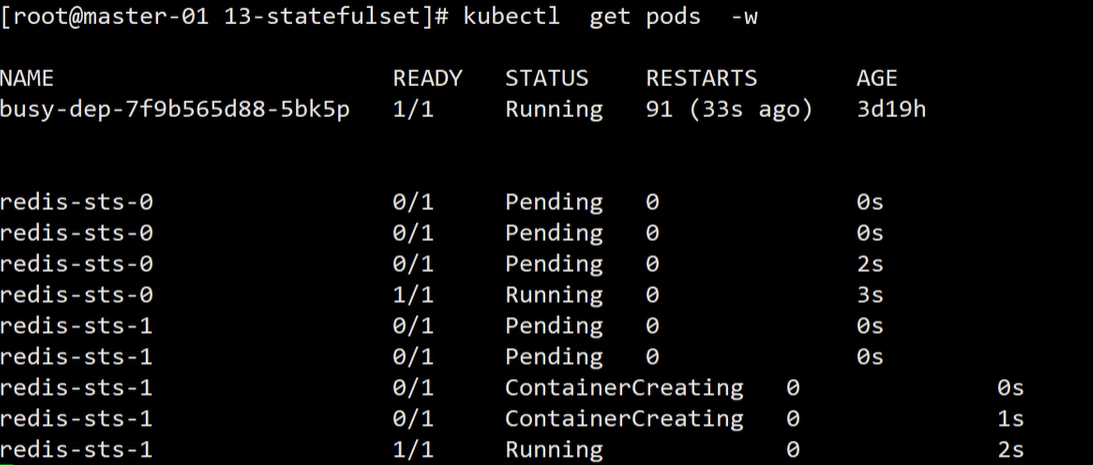

statefulset.apps/redis-sts created

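Note: the replicas are created with ordinal numbers; Pod 0 must start successfully before Pod 1 is started; the replica count can be adjusted in the manifest; this settles the startup order of a stateful application; and a deleted Pod is recreated with the same name as before (which settles the dependency problem).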
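The ordered startup can be modeled in a few lines. This is a sketch of the behavior described in the note, not the StatefulSet controller itself; `start_in_order` and the `becomes_ready` callback are hypothetical names.

```python
def pod_names(sts_name, replicas):
    """Pods are named <statefulset>-<ordinal>, with ordinals starting at 0."""
    return [f"{sts_name}-{i}" for i in range(replicas)]


def start_in_order(sts_name, replicas, becomes_ready):
    """Start Pods one by one; stop at the first Pod that does not become ready."""
    started = []
    for name in pod_names(sts_name, replicas):
        started.append(name)
        if not becomes_ready(name):
            break              # later ordinals wait for this Pod
    return started


print(start_in_order("redis-sts", 2, lambda name: True))
# ['redis-sts-0', 'redis-sts-1']
print(start_in_order("redis-sts", 2, lambda name: name != "redis-sts-0"))
# ['redis-sts-0']
```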

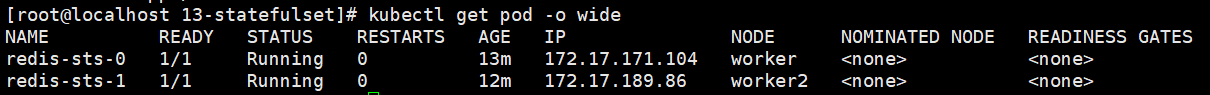

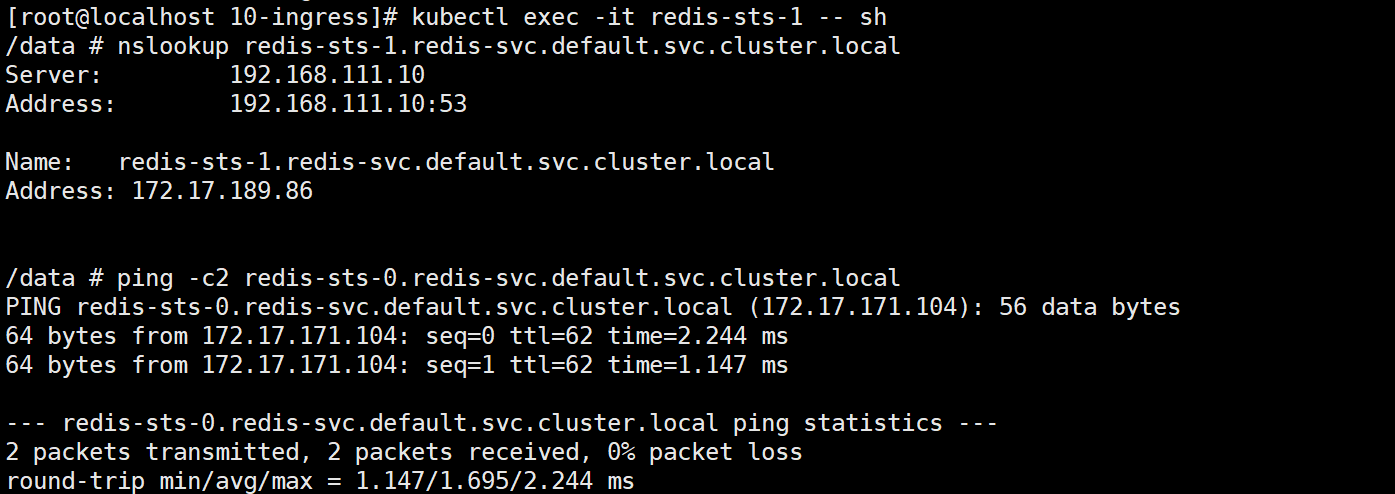

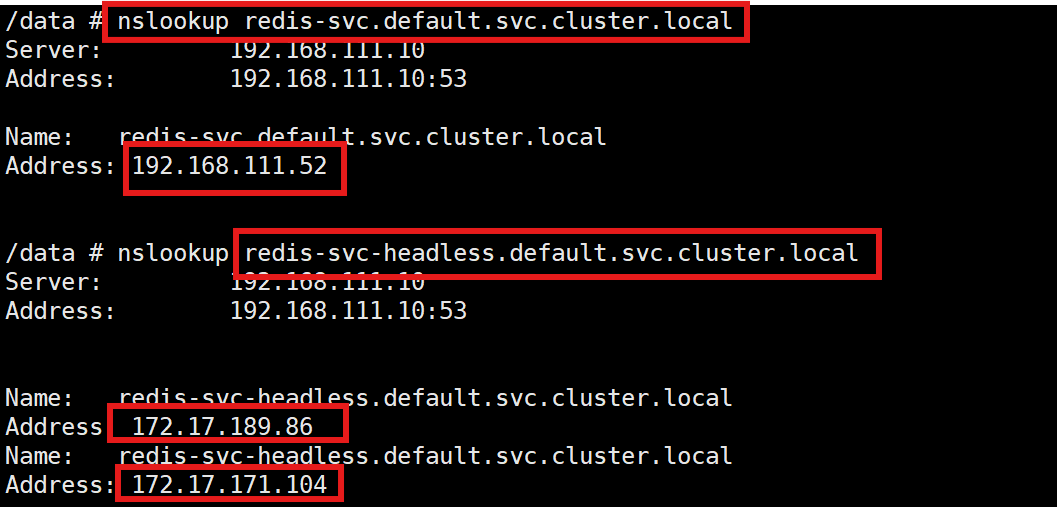

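(3) Network identity

Note: in a StatefulSet every Pod has a fixed name, so every Pod can also be given a fixed domain name, in the form "Pod-name.Service-name.namespace.svc.cluster.local".

Note: once an external client knows the StatefulSet, it can use the fixed ordinal to reach a specific instance. The Pod's IP address may change, but the numbered domain name is maintained by the Service object and stays stable.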
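The naming rule can be written out as a small helper. This is a minimal sketch: the `default` namespace and the `cluster.local` cluster domain are assumptions about this cluster, and `pod_dns_name` is a hypothetical name.

```python
def pod_dns_name(pod, service, namespace="default", cluster_domain="cluster.local"):
    """Build <pod>.<service>.<namespace>.svc.<cluster domain>."""
    return f"{pod}.{service}.{namespace}.svc.{cluster_domain}"


for ordinal in range(2):
    print(pod_dns_name(f"redis-sts-{ordinal}", "redis-svc"))
# redis-sts-0.redis-svc.default.svc.cluster.local
# redis-sts-1.redis-svc.default.svc.cluster.local
```

As far as I know, Kubernetes publishes these per-Pod records only when the Service named in serviceName is headless, which is the subject of the next subsection.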

(4) Headless Service

Note: we usually create two Services, a regular one and a headless one (that is, one without a cluster IP).

[root@localhost 13-statefulset]# cat redis-svc-headless.yaml

apiVersion: v1
kind: Service
metadata:
  name: redis-svc-headless
spec:
  clusterIP: None   # no IP address is allocated
  selector:
    app: redis-sts
  ports:
    - port: 6379
      protocol: TCP
      targetPort: 6379

[root@localhost 13-statefulset]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

redis-svc ClusterIP 192.168.111.52 <none> 6379/TCP 91m

redis-svc-headless ClusterIP None <none> 6379/TCP 14s

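Note: resolving the regular Service's domain name returns the Service's cluster IP; resolving the headless Service's domain name returns the IPs of all the Pods (whether this is usable depends mainly on whether the client supports a list of multiple server IPs).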
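A client that wants to handle a list of server IPs can ask the resolver for every address behind a name. The sketch below uses the standard library only; `resolve_ipv4` is a hypothetical helper, and a literal address is used in the call so that it runs outside a cluster.

```python
import socket


def resolve_ipv4(host, port):
    """Return the sorted, de-duplicated IPv4 addresses a name resolves to."""
    infos = socket.getaddrinfo(host, port, family=socket.AF_INET,
                               type=socket.SOCK_STREAM)
    return sorted({info[4][0] for info in infos})


# Inside the cluster, a headless Service name would be expected to yield one
# address per Pod, and a regular Service name a single cluster IP.
print(resolve_ipv4("127.0.0.1", 6379))   # ['127.0.0.1']
```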

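Additional background:

A Service is originally meant for load balancing: it sits in front of the Pods and forwards traffic to them. For a StatefulSet this is unnecessary, because the Pods already have stable domain names and outside access should not go through the Service layer any more. So, for security and to save system resources, we can add the field clusterIP: None to the Service to tell Kubernetes not to allocate an IP address for it. A Service of this kind is called a Headless Service.

IV. StatefulSet Data Persistence

1. Preparing the environment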

[root@localhost nfs-provisioner]# cat nfs-client-retained-sc.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-client-retained
provisioner: k8s-sigs.io/nfs-subdir-external-provisioner
parameters:
  onDelete: "retain"

[root@localhost nfs-provisioner]# kubectl apply -f nfs-client-retained-sc.yaml

storageclass.storage.k8s.io/nfs-client-retained created

[root@localhost nfs-provisioner]# kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

nfs-client-deleted k8s-sigs.io/nfs-subdir-external-provisioner Delete Immediate false 2d4h

nfs-client-retained k8s-sigs.io/nfs-subdir-external-provisioner Delete Immediate false 7s

2. Modifying the YAML manifest

[root@localhost 13-statefulset]# cat redis-pv-sts.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: redis-pv-sts
spec:
  serviceName: redis-pv-svc
  volumeClaimTemplates:   # PVC template
    - metadata:
        name: redis-100m-pvc
      spec:
        storageClassName: nfs-client-retained
        accessModes:
          - ReadWriteMany
        resources:
          requests:
            storage: 100Mi
  replicas: 2
  selector:
    matchLabels:
      app: redis-pv-sts
  template:
    metadata:
      labels:
        app: redis-pv-sts
    spec:
      containers:
        - image: redis:5-alpine
          name: redis
          ports:
            - containerPort: 6379
          volumeMounts:
            - name: redis-100m-pvc
              mountPath: /data

[root@localhost 13-statefulset]# kubectl apply -f redis-pv-sts.yaml

statefulset.apps/redis-pv-sts created

Common pitfall: what if the storage IP address is wrong, and how can it be changed?

[root@localhost 13-statefulset]# kubectl -n kube-system get deployments

NAME READY UP-TO-DATE AVAILABLE AGE

calico-kube-controllers 1/1 1 1 9d

coredns 2/2 2 2 9d

nfs-client-provisioner 1/1 1 1 2d5h

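# Edit the nfs-client-provisioner Deployment directly, for example with kubectl -n kube-system edit deployment nfs-client-provisioner

Check the worker node:

3. Persistence test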

[root@localhost 13-statefulset]# kubectl exec -it redis-pv-sts-0 -- redis-cli

127.0.0.1:6379> set abc 123

OK

127.0.0.1:6379> set xyz 789

OK

127.0.0.1:6379> quit

Now simulate an accident by deleting this Pod:

[root@localhost 13-statefulset]# kubectl delete pod redis-pv-sts-0

pod "redis-pv-sts-0" deleted

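Like a Deployment, a StatefulSet watches its Pod instances; when it finds that the Pod count has dropped, it quickly creates a new Pod whose name and network identity are exactly the same as those of the previous Pod: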

[root@localhost 13-statefulset]# kubectl get pod

NAME READY STATUS RESTARTS AGE

redis-pv-sts-0 1/1 Running 0 25s

redis-pv-sts-1 1/1 Running 0 14m

Log in with the Redis client again and check the data:

[root@localhost 13-statefulset]# kubectl exec -it redis-pv-sts-0 -- redis-cli

127.0.0.1:6379> keys *

1) "xyz"

2) "abc"

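Because the NFS network storage is mounted at the Pod's /data directory, Redis periodically persists its data to disk there, so when the newly created Pod mounts the directory again it restores the data from the saved file and the in-memory data is back to its previous state.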
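The reason the recreated Pod finds the same directory is that the claim generated from volumeClaimTemplates has a deterministic name, built from the template name, the StatefulSet name, and the Pod ordinal. A minimal sketch of that naming rule; `claim_name` is a hypothetical helper:

```python
def claim_name(template_name, sts_name, ordinal):
    """PVCs from volumeClaimTemplates are named <template>-<statefulset>-<ordinal>."""
    return f"{template_name}-{sts_name}-{ordinal}"


# A Pod deleted and recreated with the same ordinal maps to the same claim.
before_delete = claim_name("redis-100m-pvc", "redis-pv-sts", 0)
after_recreate = claim_name("redis-100m-pvc", "redis-pv-sts", 0)
print(before_delete)                     # redis-100m-pvc-redis-pv-sts-0
print(before_delete == after_recreate)   # True
```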
