AI开发框架与工具:构建智能应用的技术基石

研究导向项目:优选PyTorch,灵活性和易用性更佳生产环境部署:TensorFlow在企业级应用中更成熟快速原型开发:Hugging Face生态系统是首选云端服务集成:根据现有基础设施选择对应云服务商。

·

🛠️ AI开发框架与工具:构建智能应用的技术基石

🚀 引言:在AI技术快速发展的今天,选择合适的开发框架和工具链已成为决定项目成败的关键因素。本文将深入解析主流AI开发框架的技术特性、云端服务生态以及MLOps最佳实践,为开发者提供全方位的技术选型指南。

🐍 PyTorch vs TensorFlow:框架选择的技术决策

🔥 PyTorch:动态图的灵活之选

核心优势:

- 动态计算图:即时执行模式,调试友好

- Pythonic设计:符合Python开发者直觉

- 研究友好:快速原型开发和实验迭代

- 强大生态:torchvision、torchaudio等丰富扩展

实战代码示例:

import torch

import torch.nn as nn

import torch.optim as optim

from torch.utils.data import DataLoader, TensorDataset

class ModernCNN(nn.Module):

def __init__(self, num_classes=10):

super(ModernCNN, self).__init__()

# 特征提取层

self.features = nn.Sequential(

# 第一个卷积块

nn.Conv2d(3, 64, kernel_size=3, padding=1),

nn.BatchNorm2d(64),

nn.ReLU(inplace=True),

nn.MaxPool2d(2, 2),

# 第二个卷积块

nn.Conv2d(64, 128, kernel_size=3, padding=1),

nn.BatchNorm2d(128),

nn.ReLU(inplace=True),

nn.MaxPool2d(2, 2),

# 第三个卷积块

nn.Conv2d(128, 256, kernel_size=3, padding=1),

nn.BatchNorm2d(256),

nn.ReLU(inplace=True),

nn.AdaptiveAvgPool2d((1, 1))

)

# 分类器

self.classifier = nn.Sequential(

nn.Dropout(0.5),

nn.Linear(256, 128),

nn.ReLU(inplace=True),

nn.Dropout(0.3),

nn.Linear(128, num_classes)

)

def forward(self, x):

x = self.features(x)

x = torch.flatten(x, 1)

x = self.classifier(x)

return x

# 训练流程

def train_model(model, train_loader, criterion, optimizer, device):

model.train()

running_loss = 0.0

correct = 0

total = 0

for batch_idx, (data, target) in enumerate(train_loader):

data, target = data.to(device), target.to(device)

# 前向传播

optimizer.zero_grad()

output = model(data)

loss = criterion(output, target)

# 反向传播

loss.backward()

optimizer.step()

# 统计

running_loss += loss.item()

_, predicted = output.max(1)

total += target.size(0)

correct += predicted.eq(target).sum().item()

if batch_idx % 100 == 0:

print(f'Batch {batch_idx}, Loss: {loss.item():.4f}, '

f'Acc: {100.*correct/total:.2f}%')

return running_loss / len(train_loader), 100. * correct / total

🏗️ TensorFlow:生产级的稳定选择

核心优势:

- 静态计算图:高效的生产部署

- TensorBoard:强大的可视化工具

- TensorFlow Serving:企业级模型服务

- 跨平台支持:移动端、Web端全覆盖

技术架构对比:

| 特性维度 | PyTorch | TensorFlow |

|---|---|---|

| 🔧 开发模式 | 动态图,即时执行 | 静态图,延迟执行 |

| 🚀 学习曲线 | 较平缓,Pythonic | 较陡峭,概念抽象 |

| 🔬 研究适用性 | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |

| 🏭 生产部署 | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |

| 📱 移动端支持 | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |

| 🌐 社区生态 | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |

# TensorFlow 2.x 现代化开发示例

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

class TensorFlowCNN(keras.Model):

def __init__(self, num_classes=10):

super(TensorFlowCNN, self).__init__()

self.conv_block1 = keras.Sequential([

layers.Conv2D(64, 3, padding='same', activation='relu'),

layers.BatchNormalization(),

layers.MaxPooling2D(2)

])

self.conv_block2 = keras.Sequential([

layers.Conv2D(128, 3, padding='same', activation='relu'),

layers.BatchNormalization(),

layers.MaxPooling2D(2)

])

self.conv_block3 = keras.Sequential([

layers.Conv2D(256, 3, padding='same', activation='relu'),

layers.BatchNormalization(),

layers.GlobalAveragePooling2D()

])

self.classifier = keras.Sequential([

layers.Dropout(0.5),

layers.Dense(128, activation='relu'),

layers.Dropout(0.3),

layers.Dense(num_classes, activation='softmax')

])

def call(self, inputs, training=None):

x = self.conv_block1(inputs)

x = self.conv_block2(x)

x = self.conv_block3(x)

return self.classifier(x, training=training)

# 使用tf.function装饰器优化性能

@tf.function

def train_step(model, optimizer, x, y):

with tf.GradientTape() as tape:

predictions = model(x, training=True)

loss = keras.losses.sparse_categorical_crossentropy(y, predictions)

loss = tf.reduce_mean(loss)

gradients = tape.gradient(loss, model.trainable_variables)

optimizer.apply_gradients(zip(gradients, model.trainable_variables))

return loss, predictions

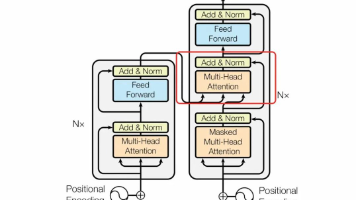

🤗 Hugging Face生态系统:预训练模型的宝库

🌟 Transformers库:模型调用的标准化接口

核心价值:

- 统一API:一套接口调用所有主流模型

- 预训练模型库:数万个开源模型资源

- 多任务支持:NLP、CV、Audio全覆盖

- 生产就绪:优化的推理性能

graph LR

A[Hugging Face Hub] --> B[🤖 LLM模型]

A --> C[👁️ 视觉模型]

A --> D[🎵 音频模型]

A --> E[🔄 多模态模型]

B --> F[GPT系列]

B --> G[BERT系列]

B --> H[T5系列]

C --> I[ViT]

C --> J[CLIP]

C --> K[DETR]

style A fill:#ff6b35

style B fill:#4ecdc4

style C fill:#45b7d1

style D fill:#96ceb4

style E fill:#feca57

实战应用示例:

from transformers import (

AutoTokenizer, AutoModel, AutoModelForSequenceClassification,

pipeline, Trainer, TrainingArguments

)

import torch

from datasets import Dataset

class HuggingFaceNLPPipeline:

def __init__(self, model_name="bert-base-chinese"):

self.model_name = model_name

self.tokenizer = AutoTokenizer.from_pretrained(model_name)

self.model = AutoModel.from_pretrained(model_name)

def create_classification_pipeline(self, num_labels=2):

"""创建文本分类流水线"""

model = AutoModelForSequenceClassification.from_pretrained(

self.model_name,

num_labels=num_labels

)

return pipeline(

"text-classification",

model=model,

tokenizer=self.tokenizer,

device=0 if torch.cuda.is_available() else -1

)

def fine_tune_model(self, train_texts, train_labels, eval_texts, eval_labels):

"""模型微调"""

# 数据预处理

def tokenize_function(examples):

return self.tokenizer(

examples['text'],

truncation=True,

padding=True,

max_length=512

)

# 创建数据集

train_dataset = Dataset.from_dict({

'text': train_texts,

'labels': train_labels

}).map(tokenize_function, batched=True)

eval_dataset = Dataset.from_dict({

'text': eval_texts,

'labels': eval_labels

}).map(tokenize_function, batched=True)

# 模型配置

model = AutoModelForSequenceClassification.from_pretrained(

self.model_name,

num_labels=len(set(train_labels))

)

# 训练参数

training_args = TrainingArguments(

output_dir='./results',

num_train_epochs=3,

per_device_train_batch_size=16,

per_device_eval_batch_size=64,

warmup_steps=500,

weight_decay=0.01,

logging_dir='./logs',

evaluation_strategy="epoch",

save_strategy="epoch",

load_best_model_at_end=True,

)

# 训练器

trainer = Trainer(

model=model,

args=training_args,

train_dataset=train_dataset,

eval_dataset=eval_dataset,

tokenizer=self.tokenizer,

)

# 开始训练

trainer.train()

return trainer

# 使用示例

nlp_pipeline = HuggingFaceNLPPipeline()

# 快速推理

classifier = nlp_pipeline.create_classification_pipeline()

results = classifier(["这个产品质量很好", "服务态度太差了"])

print(results)

🚀 Datasets库:数据处理的高效工具

from datasets import load_dataset, DatasetDict

from transformers import AutoTokenizer

class DatasetProcessor:

def __init__(self, tokenizer_name="bert-base-chinese"):

self.tokenizer = AutoTokenizer.from_pretrained(tokenizer_name)

def process_text_classification_data(self, dataset_name):

"""处理文本分类数据集"""

# 加载数据集

dataset = load_dataset(dataset_name)

# 数据预处理函数

def preprocess_function(examples):

return self.tokenizer(

examples['text'],

truncation=True,

padding=True,

max_length=512

)

# 批量处理

tokenized_dataset = dataset.map(

preprocess_function,

batched=True,

remove_columns=dataset['train'].column_names

)

return tokenized_dataset

def create_custom_dataset(self, texts, labels):

"""创建自定义数据集"""

dataset = Dataset.from_dict({

'text': texts,

'labels': labels

})

return dataset.map(

lambda x: self.tokenizer(

x['text'],

truncation=True,

padding=True,

max_length=512

),

batched=True

)

☁️ 云端AI服务:AWS、Azure、Google Cloud AI

🌩️ 云服务生态对比分析

🔧 AWS SageMaker:端到端ML平台

核心功能:

- SageMaker Studio:集成开发环境

- SageMaker Training:分布式训练服务

- SageMaker Endpoints:模型部署与推理

- SageMaker Pipelines:ML工作流编排

import boto3

import sagemaker

from sagemaker.pytorch import PyTorch

from sagemaker.inputs import TrainingInput

class SageMakerMLPipeline:

def __init__(self, role, bucket_name):

self.sagemaker_session = sagemaker.Session()

self.role = role

self.bucket = bucket_name

self.region = boto3.Session().region_name

def create_training_job(self, entry_point, source_dir,

train_data_path, val_data_path):

"""创建训练任务"""

# 配置PyTorch估算器

pytorch_estimator = PyTorch(

entry_point=entry_point,

source_dir=source_dir,

role=self.role,

instance_type='ml.p3.2xlarge',

instance_count=1,

framework_version='1.12.0',

py_version='py38',

hyperparameters={

'epochs': 10,

'batch_size': 32,

'learning_rate': 0.001

}

)

# 配置数据输入

train_input = TrainingInput(

s3_data=train_data_path,

content_type='application/json'

)

val_input = TrainingInput(

s3_data=val_data_path,

content_type='application/json'

)

# 启动训练

pytorch_estimator.fit({

'train': train_input,

'validation': val_input

})

return pytorch_estimator

def deploy_model(self, estimator, endpoint_name):

"""部署模型到端点"""

predictor = estimator.deploy(

initial_instance_count=1,

instance_type='ml.m5.large',

endpoint_name=endpoint_name

)

return predictor

def create_batch_transform(self, model_name, input_path, output_path):

"""批量推理"""

transformer = sagemaker.transformer.Transformer(

model_name=model_name,

instance_count=1,

instance_type='ml.m5.large',

output_path=output_path

)

transformer.transform(

data=input_path,

content_type='application/json',

split_type='Line'

)

return transformer

🧠 Azure OpenAI Service:企业级GPT服务

import openai

from azure.identity import DefaultAzureCredential

from azure.keyvault.secrets import SecretClient

class AzureOpenAIService:

def __init__(self, endpoint, api_version="2023-12-01-preview"):

self.endpoint = endpoint

self.api_version = api_version

self.client = openai.AzureOpenAI(

azure_endpoint=endpoint,

api_version=api_version,

api_key=self._get_api_key()

)

def _get_api_key(self):

"""从Azure Key Vault获取API密钥"""

credential = DefaultAzureCredential()

vault_url = "https://your-keyvault.vault.azure.net/"

client = SecretClient(vault_url=vault_url, credential=credential)

return client.get_secret("openai-api-key").value

def chat_completion(self, messages, model="gpt-4", temperature=0.7):

"""聊天完成"""

response = self.client.chat.completions.create(

model=model,

messages=messages,

temperature=temperature,

max_tokens=1000

)

return response.choices[0].message.content

def text_embedding(self, text, model="text-embedding-ada-002"):

"""文本嵌入"""

response = self.client.embeddings.create(

model=model,

input=text

)

return response.data[0].embedding

def batch_processing(self, texts, batch_size=10):

"""批量处理"""

results = []

for i in range(0, len(texts), batch_size):

batch = texts[i:i+batch_size]

batch_results = []

for text in batch:

result = self.chat_completion([

{"role": "user", "content": text}

])

batch_results.append(result)

results.extend(batch_results)

return results

🔧 MLOps:模型部署与生产环境管理

🚀 MLOps核心理念与实践

MLOps生命周期:

🐳 Docker化部署方案

# app.py - Flask API服务

from flask import Flask, request, jsonify

import torch

import pickle

import numpy as np

from transformers import AutoTokenizer, AutoModelForSequenceClassification

app = Flask(__name__)

class ModelService:

def __init__(self):

self.model = None

self.tokenizer = None

self.load_model()

def load_model(self):

"""加载预训练模型"""

model_path = "/app/models/sentiment_model"

self.tokenizer = AutoTokenizer.from_pretrained(model_path)

self.model = AutoModelForSequenceClassification.from_pretrained(model_path)

self.model.eval()

def predict(self, text):

"""预测函数"""

inputs = self.tokenizer(

text,

return_tensors="pt",

truncation=True,

padding=True,

max_length=512

)

with torch.no_grad():

outputs = self.model(**inputs)

predictions = torch.nn.functional.softmax(outputs.logits, dim=-1)

confidence = torch.max(predictions).item()

predicted_class = torch.argmax(predictions).item()

return {

"prediction": predicted_class,

"confidence": confidence,

"probabilities": predictions.tolist()[0]

}

# 初始化模型服务

model_service = ModelService()

@app.route('/health', methods=['GET'])

def health_check():

return jsonify({"status": "healthy", "version": "1.0.0"})

@app.route('/predict', methods=['POST'])

def predict():

try:

data = request.get_json()

text = data.get('text', '')

if not text:

return jsonify({"error": "No text provided"}), 400

result = model_service.predict(text)

return jsonify(result)

except Exception as e:

return jsonify({"error": str(e)}), 500

@app.route('/batch_predict', methods=['POST'])

def batch_predict():

try:

data = request.get_json()

texts = data.get('texts', [])

if not texts:

return jsonify({"error": "No texts provided"}), 400

results = []

for text in texts:

result = model_service.predict(text)

results.append(result)

return jsonify({"results": results})

except Exception as e:

return jsonify({"error": str(e)}), 500

if __name__ == '__main__':

app.run(host='0.0.0.0', port=8080, debug=False)

Dockerfile配置:

# Dockerfile

FROM python:3.9-slim

# 设置工作目录

WORKDIR /app

# 安装系统依赖

RUN apt-get update && apt-get install -y \

gcc \

g++ \

&& rm -rf /var/lib/apt/lists/*

# 复制依赖文件

COPY requirements.txt .

# 安装Python依赖

RUN pip install --no-cache-dir -r requirements.txt

# 复制应用代码

COPY . .

# 创建模型目录

RUN mkdir -p /app/models

# 暴露端口

EXPOSE 8080

# 健康检查

HEALTHCHECK --interval=30s --timeout=30s --start-period=5s --retries=3 \

CMD curl -f http://localhost:8080/health || exit 1

# 启动命令

CMD ["gunicorn", "--bind", "0.0.0.0:8080", "--workers", "4", "--timeout", "120", "app:app"]

📊 Kubernetes部署与监控

# deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: ml-model-service

labels:

app: ml-model

spec:

replicas: 3

selector:

matchLabels:

app: ml-model

template:

metadata:

labels:

app: ml-model

spec:

containers:

- name: ml-model

image: your-registry/ml-model:latest

ports:

- containerPort: 8080

env:

- name: MODEL_PATH

value: "/app/models"

resources:

requests:

memory: "1Gi"

cpu: "500m"

limits:

memory: "2Gi"

cpu: "1000m"

livenessProbe:

httpGet:

path: /health

port: 8080

initialDelaySeconds: 30

periodSeconds: 10

readinessProbe:

httpGet:

path: /health

port: 8080

initialDelaySeconds: 5

periodSeconds: 5

---

apiVersion: v1

kind: Service

metadata:

name: ml-model-service

spec:

selector:

app: ml-model

ports:

- protocol: TCP

port: 80

targetPort: 8080

type: LoadBalancer

📈 模型监控与性能追踪

import prometheus_client

from prometheus_client import Counter, Histogram, Gauge

import time

import logging

class ModelMonitoring:

def __init__(self):

# Prometheus指标

self.prediction_counter = Counter(

'model_predictions_total',

'Total number of predictions made',

['model_version', 'status']

)

self.prediction_latency = Histogram(

'model_prediction_duration_seconds',

'Time spent on predictions',

['model_version']

)

self.model_accuracy = Gauge(

'model_accuracy_score',

'Current model accuracy',

['model_version']

)

self.error_rate = Gauge(

'model_error_rate',

'Current error rate',

['model_version']

)

def record_prediction(self, model_version, status, latency):

"""记录预测指标"""

self.prediction_counter.labels(

model_version=model_version,

status=status

).inc()

self.prediction_latency.labels(

model_version=model_version

).observe(latency)

def update_model_metrics(self, model_version, accuracy, error_rate):

"""更新模型性能指标"""

self.model_accuracy.labels(model_version=model_version).set(accuracy)

self.error_rate.labels(model_version=model_version).set(error_rate)

def log_prediction_details(self, input_text, prediction, confidence):

"""记录预测详情"""

logging.info(f"Prediction made: {prediction}, Confidence: {confidence:.4f}")

# 检测异常情况

if confidence < 0.5:

logging.warning(f"Low confidence prediction: {confidence:.4f}")

if len(input_text) > 1000:

logging.warning(f"Long input text: {len(input_text)} characters")

# 集成监控的预测服务

class MonitoredModelService(ModelService):

def __init__(self):

super().__init__()

self.monitoring = ModelMonitoring()

self.model_version = "v1.0.0"

def predict(self, text):

start_time = time.time()

try:

result = super().predict(text)

latency = time.time() - start_time

# 记录成功预测

self.monitoring.record_prediction(

self.model_version,

'success',

latency

)

# 记录预测详情

self.monitoring.log_prediction_details(

text,

result['prediction'],

result['confidence']

)

return result

except Exception as e:

latency = time.time() - start_time

# 记录失败预测

self.monitoring.record_prediction(

self.model_version,

'error',

latency

)

logging.error(f"Prediction error: {str(e)}")

raise

🔮 未来发展趋势与技术展望

🚀 新兴技术趋势

- 🧠 神经架构搜索(NAS):自动化模型设计

- ⚡ 模型压缩与加速:边缘计算友好的轻量化模型

- 🔄 联邦学习:隐私保护的分布式训练

- 🎯 AutoML 2.0:端到端自动化机器学习

- 🌐 多模态融合:视觉、语言、音频的统一建模

📊 技术成熟度评估

| 技术领域 | 成熟度 | 应用前景 | 技术挑战 |

|---|---|---|---|

| 🐍 深度学习框架 | ⭐⭐⭐⭐⭐ | 稳定发展 | 性能优化 |

| 🤗 预训练模型 | ⭐⭐⭐⭐⭐ | 快速增长 | 计算成本 |

| ☁️ 云端AI服务 | ⭐⭐⭐⭐ | 蓬勃发展 | 数据安全 |

| 🔧 MLOps平台 | ⭐⭐⭐⭐ | 快速成熟 | 标准化 |

| 🧠 AutoML | ⭐⭐⭐ | 潜力巨大 | 可解释性 |

| ⚡ 边缘AI | ⭐⭐⭐ | 新兴领域 | 硬件限制 |

💡 总结与建议

🎯 框架选择建议

- 研究导向项目:优选PyTorch,灵活性和易用性更佳

- 生产环境部署:TensorFlow在企业级应用中更成熟

- 快速原型开发:Hugging Face生态系统是首选

- 云端服务集成:根据现有基础设施选择对应云服务商

🚀 最佳实践原则

- 🔄 版本控制:代码、数据、模型的全生命周期管理

- 📊 监控体系:建立完善的性能监控和告警机制

- 🔒 安全合规:数据隐私保护和模型安全部署

- ⚡ 性能优化:持续优化推理速度和资源利用率

- 🧪 A/B测试:科学评估模型效果和业务价值

通过合理选择开发框架、充分利用云端服务、建立完善的MLOps流程,我们能够构建出高效、稳定、可扩展的AI应用系统,为业务创新提供强有力的技术支撑。

更多推荐

已为社区贡献4条内容

已为社区贡献4条内容

所有评论(0)